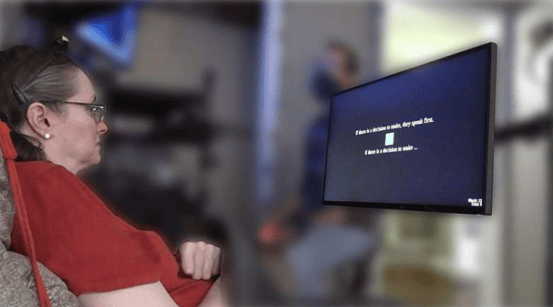

A research team at Stanford University in the United States has reported in the journal Cell that it has, for the first time, decoded brain activity associated with inner speech, reaching an accuracy of up to 74%. The result opens new possibilities for using brain-computer interfaces to help people with speech impairments communicate.

The team implanted microelectrode arrays in the motor cortex of four patients with amyotrophic lateral sclerosis (ALS) and recorded neural signals while they attempted to speak and while they imagined speaking. Lead author Erin Kunz said: "This is the first time we have captured brain activity patterns during silent inner monologue." The experiments showed that the two modes of speech activate similar brain regions, but inner speech produces weaker signals.

The artificial intelligence model developed by the team can recognize imagined sentences drawn from a vocabulary of 125,000 words. Co-first author Benyamin Meschede-Krasa noted: "Thinking about speaking is much less effortful than actually vocalizing, which is especially important for patients with severe paralysis." The study also implemented a password mechanism: the decoding system activates only after the user imagines a specific passphrase, so it does not decode inner speech the user did not intend to share. The system recognized the passphrase with more than 98% accuracy.

Senior author Frank Willett said: "While current technology cannot yet decode free-form inner speech, this research lays the foundation for future natural and fluent thought-based communication." The team plans to refine its algorithms further and add more sensors to improve the system's performance.