Scientists at the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) have developed a robot control system called the "Neural Jacobian Field" (NJF), which enables precise robot control using only visual data, without the complex sensors and hand-crafted models that traditional robots rely on. The research was published in the journal Nature.

Traditional robots are typically built with rigid structures and fitted with many sensors so that engineers can construct an accurate digital twin for control. However, as robotics moves toward flexible and bio-inspired designs, these methods become increasingly difficult to apply to soft robots and irregularly shaped machines. The NJF system reverses this approach: the robot learns an internal model simply by observing its own movements, then uses that model to control itself.

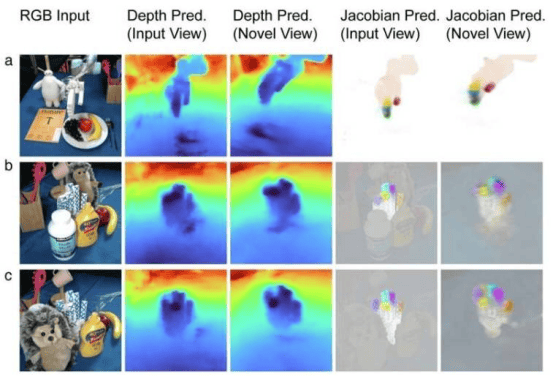

At the core of the NJF system is a neural network that builds on Neural Radiance Field (NeRF) technology. It learns not only the robot's 3D geometry but also its Jacobian field, a function that predicts how any point on the robot's body moves in response to control commands. The robot performs random motions while multiple cameras record the results, and from these recordings the NJF system infers the relationship between control signals and motion without any human supervision or prior knowledge of the robot's structure.
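To make the idea of a Jacobian field concrete, here is a minimal sketch in plain Python. It is not the researchers' network: `toy_jacobian_field` is a hypothetical hand-written stand-in for the learned function, and the two-actuator robot, the 3x2 matrix shape, and all numbers are assumptions chosen for illustration. The point is only that a Jacobian field returns, for each 3D point, a matrix that turns a small control command into a predicted displacement of that point.

```python
from typing import List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def toy_jacobian_field(point: Sequence[float]) -> Matrix:
    """Hypothetical stand-in for the learned network.

    Maps a 3D point on the robot to a 3x2 Jacobian
    (rows: x, y, z motion; columns: two assumed actuators).
    """
    _, _, z = point
    # Assumption: points farther from the base (larger z) move more,
    # roughly like a beam bending from a fixed base.
    return [
        [z, 0.0],    # actuator 0 moves the point along x
        [0.0, z],    # actuator 1 moves the point along y
        [0.0, 0.0],  # neither actuator moves the point along z
    ]


def predict_displacement(jacobian: Matrix, command: Sequence[float]) -> Vector:
    """First-order prediction: displacement = Jacobian times command."""
    return [sum(j * u for j, u in zip(row, command)) for row in jacobian]


command = [0.2, -0.1]   # small change in the two actuator signals
tip = [0.0, 0.0, 0.5]   # a point near the end of the robot
base = [0.0, 0.0, 0.0]  # a point at the fixed base

tip_motion = predict_displacement(toy_jacobian_field(tip), command)
base_motion = predict_displacement(toy_jacobian_field(base), command)

print("tip moves by ", tip_motion)
print("base moves by", base_motion)
```

In this toy field the tip responds to the command while the base stays still. In the real system that kind of per-point sensitivity is what the network has to learn from the camera recordings.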

The research team tested the NJF system on various types of robots, including pneumatic soft grippers, rigid Allegro hands, 3D-printed robotic arms, and even a rotating platform without embedded sensors. In every case, the system learned the robot's shape and its response to control signals solely through vision and random motions, enabling real-time closed-loop control with centimeter-level positioning accuracy.
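The closed-loop idea can be sketched as follows. This is an illustration under stated assumptions, not the controller used in the research: it swaps in a standard damped least-squares step, and the matrices `J_MODEL` and `J_TRUE`, the two-actuator setup, and the target are all hypothetical. The simulated robot deliberately behaves a little differently from the model, which shows why feeding the observed position back into the loop matters.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]

# Hypothetical 3x2 Jacobians for one tracked point
# (rows: x, y, z; columns: two assumed actuators).
J_MODEL: Matrix = [[0.5, 0.0], [0.0, 0.5], [0.0, 0.0]]   # what the model predicts
J_TRUE: Matrix = [[0.4, 0.05], [0.0, 0.45], [0.0, 0.0]]  # how the simulated robot moves


def apply(jacobian: Matrix, command: Sequence[float]) -> List[float]:
    """Displacement of the tracked point produced by a command."""
    return [sum(j * u for j, u in zip(row, command)) for row in jacobian]


def solve_command(jacobian: Matrix, error: Sequence[float],
                  damping: float = 1e-6) -> List[float]:
    """Damped least squares for two actuators: (J^T J + damping*I) u = J^T e."""
    a = sum(r[0] * r[0] for r in jacobian) + damping
    b = sum(r[0] * r[1] for r in jacobian)
    d = sum(r[1] * r[1] for r in jacobian) + damping
    g0 = sum(r[0] * e for r, e in zip(jacobian, error))
    g1 = sum(r[1] * e for r, e in zip(jacobian, error))
    det = a * d - b * b
    return [(d * g0 - b * g1) / det, (a * g1 - b * g0) / det]


def run_closed_loop(start: Sequence[float], target: Sequence[float],
                    steps: int = 10) -> List[float]:
    """Repeatedly observe, correct, and record the remaining error."""
    position = list(start)
    error_norms = []
    for _ in range(steps):
        # "Observe": in the real system this would come from the camera.
        error = [t - p for t, p in zip(target, position)]
        # Plan with the (imperfect) model, then let the simulated robot move.
        command = solve_command(J_MODEL, error)
        motion = apply(J_TRUE, command)
        position = [p + m for p, m in zip(position, motion)]
        residual = [t - p for t, p in zip(target, position)]
        error_norms.append(math.sqrt(sum(r * r for r in residual)))
    return error_norms


error_norms = run_closed_loop(start=[0.0, 0.0, 0.5], target=[0.1, -0.05, 0.5])
for step, err in enumerate(error_norms, start=1):
    print(f"step {step}: remaining error {err:.6f}")
```

A single open-loop step leaves a visible error because the model is not exact. Repeating the observe-and-correct cycle shrinks that error at every step, which is the behavior a vision-based feedback loop relies on.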

Sizhe Lester Li, a PhD student in Electrical Engineering and Computer Science at MIT and researcher affiliated with CSAIL, stated: "This work marks a shift in robotics from programming to teaching. In the future, we envision showing a robot a task and letting it autonomously learn how to achieve the goal—just as naturally as a human discovers which buttons to press on a new device."

The NJF system gives robot designers considerably more freedom. They can explore unconventional, unconstrained robot morphologies without worrying about whether the result can be modeled or controlled. The system also lowers the barrier to entry for robotics, making affordable, highly adaptable robots feasible.

Vincent Sitzmann, Assistant Professor at MIT and leader of the Scene Representation Group, noted: "Vision is a flexible and reliable sensor that opens the door for robots to operate in messy, unstructured environments—such as farms, construction sites, and more—without requiring expensive infrastructure."

The current NJF system must be retrained for each new robot and has no force or tactile sensing, and the researchers are exploring ways to overcome these limitations. They envision a future in which hobbyists can simply record a robot's random motions with a smartphone, then use those videos to create a control model with no prior knowledge or specialized equipment.

"Just as humans develop an intuitive understanding of how their bodies move and respond to commands, NJF enables robots to gain this embodied self-awareness solely through vision," Li said. "This understanding lays the foundation for flexible manipulation and control in real-world environments, reflecting a broader trend in robotics from manual programming toward teaching robots through observation and interaction."