Large language models (LLMs) that can simulate human responses are emerging as a new tool for social science research. They make hypothesis testing and pilot studies cheap, and early results are encouraging. By improving our understanding of human behavior, social science research helps businesses with marketing, policymakers with decision-making, and public health officials with strategy planning. Traditional social science research, however, is time-consuming, expensive, and difficult to replicate, because its research subjects, humans, are complex.

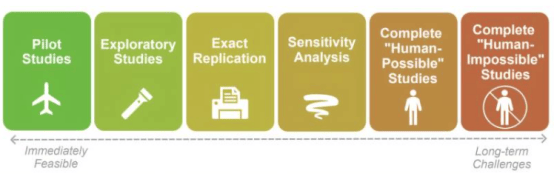

With advances in artificial intelligence, LLMs can simulate professional social scientists or diverse human subjects, enabling low-cost hypothesis testing and preliminary research. Jacy Anthis, a visiting scholar at the Stanford Institute for Human-Centered Artificial Intelligence (HAI) and a PhD student at the University of Chicago, pointed out that although LLMs show potential in simulation, their answers often lack diversity, carry bias, or are sycophantic, and they generalize poorly to new settings. Nevertheless, Anthis remains optimistic about LLMs in social science research and believes that one year of in-depth research could bring substantial progress.

To evaluate how well LLMs mimic humans, a team led by Luke Hewitt, a senior researcher at Stanford PACS, compared LLM predictions against results from randomized controlled trials (RCTs). The team found that LLMs predicted how people respond to randomized treatments about as accurately as human experts did, and that the predicted treatment effects correlated strongly with the measured ones. However, Hewitt also noted that newer models are harder to audit because they can search the web, so evaluating them may require archives of unpublished studies.

LLMs also struggle with distributional consistency: they cannot fully match the diversity of human answers. Stanford University electrical engineering graduate student Nicole Meister stated that prompting LLMs with "few-shot" examples can improve the distribution of their answers, but that this method has limited effect on preference questions. Beyond that, using LLMs raises challenges of validation, bias, sycophancy, unfamiliarity, and generalization. Anthis believes these challenges can be mitigated with specific techniques, such as interview-based simulations and model fine-tuning.
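One way to make the distributional consistency problem concrete is to compare the share of each answer option among human respondents with the share among simulated ones. The sketch below uses total variation distance as the gap measure; that metric, the function names, and the toy answers are all assumptions chosen for illustration and are not taken from the studies described above.

```python
from collections import Counter


def answer_distribution(answers, options):
    """Return the share of each option among the given answers."""
    counts = Counter(answers)
    total = len(answers)
    return {opt: counts.get(opt, 0) / total for opt in options}


def total_variation_distance(p, q):
    """Half the summed absolute difference between two distributions.

    0.0 means identical distributions, 1.0 means no overlap at all.
    """
    return 0.5 * sum(abs(p[k] - q[k]) for k in p)


# Hypothetical toy data, made up for illustration only.
options = ["agree", "neutral", "disagree"]
human_answers = ["agree"] * 4 + ["neutral"] * 3 + ["disagree"] * 3
llm_answers = ["agree"] * 8 + ["neutral"] * 2  # less diverse than the humans

human_dist = answer_distribution(human_answers, options)
llm_dist = answer_distribution(llm_answers, options)
tvd = total_variation_distance(human_dist, llm_dist)

print(f"Total variation distance: {tvd:.2f}")
```

In this toy case the simulated answers never pick "disagree", so the distance is well above zero. A few-shot prompt that pulled the simulated shares closer to the human ones would lower it.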

Hybrid methods are currently the best practice for using LLMs in social science research. Stanford University sociology graduate student David Broska proposed a hybrid research design that combines human responses with LLM predictions. Using prediction-powered inference, the design draws on both data sources while guarding against bias introduced by the LLM. Hewitt stated that LLM simulations already play a role in experimental design and in choosing questionnaire wording, but human data remains the cornerstone of social science research.
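The hybrid idea can be illustrated with the basic mean estimator from the statistics literature on prediction-powered inference, assuming that is the technique meant here. LLM predictions on a large sample supply the bulk of the estimate, and a small human sample measures how far those predictions are off and corrects for it. The function name, argument names, and ratings below are hypothetical. This is a minimal sketch of the point estimate only, not the design from the study, and it omits the confidence intervals a real analysis would need.

```python
from statistics import mean


def ppi_mean(human_y, llm_pred_on_human, llm_pred_on_unlabeled):
    """Point estimate of a population mean from mixed human and LLM data.

    human_y:               responses from real participants (small sample)
    llm_pred_on_human:     LLM predictions for those same participants
    llm_pred_on_unlabeled: LLM predictions for a large sample with no
                           human responses
    """
    if len(human_y) != len(llm_pred_on_human):
        raise ValueError("human responses and their LLM predictions must align")
    # Rectifier: average amount by which the LLM misses the human answers.
    rectifier = mean([y - p for y, p in zip(human_y, llm_pred_on_human)])
    # LLM-only estimate, shifted by the measured bias.
    return mean(llm_pred_on_unlabeled) + rectifier


# Hypothetical 1-to-5 ratings, made up for illustration only.
human_y = [3, 4, 2, 5]
llm_pred_on_human = [4, 4, 3, 5]       # the LLM overshoots by 0.5 on average
llm_pred_on_unlabeled = [4, 5, 3, 4, 4, 4]

estimate = ppi_mean(human_y, llm_pred_on_human, llm_pred_on_unlabeled)
print(f"Bias-corrected estimate: {estimate:.2f}")
```

If the LLM predictions were unbiased, the rectifier would be near zero and the large simulated sample would carry the estimate. If they were badly biased, the human sample would pull the estimate back, which is why human data stays essential in this kind of design.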