When a hundred-ton autonomous mining truck shuttles at high speed through an open-pit mine, it needs to precisely maintain a safe distance from dozens of target types, including other moving vehicles, maintenance personnel, and scattered rocks. However, open-pit mining sites are not conventional roads—complex and variable visual interference caused by low light, high dust levels, all-weather operations, and multi-scale targets in excavation areas is becoming the biggest "perception barrier" restricting the large-scale safe application of autonomous mining trucks.

Addressing this industry pain point, recently, Xi'an University of Science and Technology, in collaboration with the Institute of Automation, Chinese Academy of Sciences, and CASI Vision (Beijing) Technology Co., Ltd., published significant research findings in the internationally renowned journal Journal of Industrial and Mining Automation. The research team proposed a "lightweight multi-scale object detection model—an improved YOLOv11n model," which, through a series of algorithmic innovations, effectively enhances the perception accuracy and edge deployment efficiency of autonomous mining trucks under extreme operating conditions.

Extreme Operating Conditions, the "Perception Dilemma" of Traditional Models

The excavation site of an open-pit mine is a typical "visually challenging" environment: insufficient lighting deep within the pit, daytime dust filling the sky like a sandstorm, and the need for mining trucks to identify multi-scale targets ranging from nearby gravel and tunnels to distant vehicles and personnel within a driving range of hundreds of meters. Traditional object detection models either possess high accuracy with massive parameter counts, making them difficult to run on in-vehicle edge devices, or, after lightweight compression, frequently miss detections among simultaneously appearing multi-scale targets. This core issue has always been a key constraint within the mining industry in moving from "single-point autonomous driving" to "tens of thousands of kilometers of safe operation."

Breaking Shackles, Four Core Modules Illuminate the "Smart Eyes" of Mining Trucks

To solve the dual problems of decreased accuracy and parameter scale explosion caused by low light, high dust interference, and simultaneous recognition of multi-scale targets, the research team implanted four innovative modules into the standard YOLOv11n network structure, achieving a new balance between accuracy and deployment efficiency.

1. Mixed Token (MToken) Module: Overcoming Multi-Scale Feature Extraction Obstacles

The research team introduced MToken technology into the shallow C3k2 modules of the backbone network. Through parallel multi-dilation rate branch convolutions, it achieves fine feature extraction capabilities for targets of different sizes. When distant small vehicles and nearby massive excavation equipment coexist, the MToken module can simultaneously perform a more balanced visual representation of multiple objects with large size spans.

2. Multi-Lookup Table (MuLUT) Module: Enhancing Deep Semantic Discrimination Capability

In the deeper C3k2 modules, the team innovatively introduced a multi-lookup table structure to perform high-level semantic modeling and discrimination of multi-scale targets, further strengthening the recognition confidence of potentially dangerous targets in complex operating conditions such as steep slopes, occlusions, and partial overlaps.

3. Illumination-Enhanced Self-Attention (ILSA) Module: Endowing the Model with "Night Vision" Capability

Facing typical low-light and non-uniform illumination scenes deep within the pit, the research team designed a dedicated ILSA module. It directly performs global context encoding and local nonlinear enhancement within the feature map, significantly improving the model's feature expression quality under complex dark light conditions, freeing mining trucks from the "blind spots" in dim corners of the mining site.

4. E-PST Pyramid Sparse Transformer: Balancing Efficient Fusion and Lightweight Design

In the model's neck feature pyramid structure, the team proposed an Enhanced Pyramid Sparse Transformer (E-PST). Utilizing an adaptive Top-k selection strategy and cross-scale feature enhancement technology, it efficiently fuses multi-scale target features while significantly reducing computational redundancy, ensuring the model runs smoothly on end-side devices like in-vehicle chips.

Data Reveals True Strength: A Dual Breakthrough in Accuracy and Efficiency

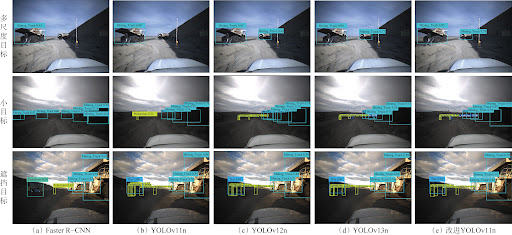

To verify the model's performance, the team constructed an open-pit mine autonomous driving evaluation benchmark primarily based on the Automine dataset. They conducted rigorous comparative tests between the improved model and traditional SSD, Faster R-CNN, as well as mainstream industry models like YOLOv11n, YOLOv12n, and YOLOv13n.

Significant Accuracy Improvement: Compared to the baseline YOLOv11n model, the new model's mAP@0.5 increased by 3.7%, and mAP@0.5-0.95 increased by 5.6%, achieving an accuracy leap within a lightweight structure.

Notable Model Slimming: The model's parameter count, computational load, and model size were reduced by 26.7%, 30.2%, and 21.8% respectively, with the parameter count dropping to less than two-thirds of conventional detection models.

Efficient Edge Deployment: The improved model was deployed on a Jeston AGX Xavier edge device for actual testing. The inference speed stably reached 27.6 frames/s, the final model size was only 2.673 MiB, and the recognition of personnel and vehicles was accurate and stable, fully meeting the fast and compact perception computing needs of mining trucks.

Lightweight Models Drive a New Course for Smart Mines

The core value of this research lies in providing an engineering pathway of "lightweight, high-precision, and easy deployment" for the environmental perception of autonomous driving in open-pit mines. This improved model possesses the triple advantages of a high recognition rate, low parameter count, and low computational power consumption, making it particularly suitable for AI decision-making systems that need to be directly deployed on mining vehicle chips. Globally, autonomous driving in open-pit mines is gradually moving from technical pilots to large-scale commercial operation. With the explosive demand for high-precision, all-weather perception in China's mining industry, this lightweight multi-scale object detection model can not only be used for the autonomous perception of unmanned mining trucks but can also be extended to multiple scenarios such as autonomous mine inspection drones and on-site safety monitoring, significantly reducing the incidence of mine accidents and improving the operational efficiency of the entire mining and transportation chain.