en.Wedoany.com Reported - Despite continuous breakthroughs in their technical capabilities, large language models have long suffered from a structural flaw: they lack memory that persists across conversations and a reliable framework for retrieving data, which leads to inconsistent and untrustworthy outputs. On May 7, 2026, at its MongoDB.local London 2026 conference, U.S.-based NoSQL database provider MongoDB announced that it has integrated core AI capabilities, including persistent memory, retrieval, embedding, and reranking, into its Atlas data platform, with the aim of solving this problem systematically at the data layer.

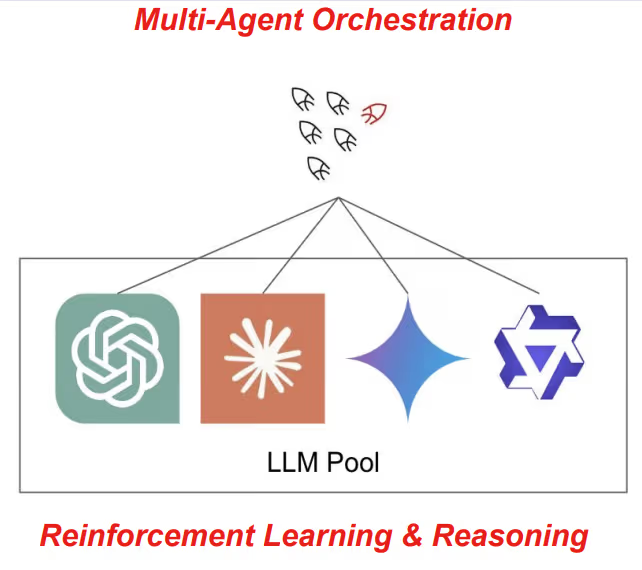

Pete Johnson, MongoDB's Field CTO for Artificial Intelligence, was blunt about agent memory at the launch: "Unlocking the power of agents requires memory. Just like human memory, a good agent memory organizes knowledge." He argued that the key to agentic AI is understanding context and that the model itself is not the limiting factor: "When AI tools and agents give wrong answers, people instinctively blame the model, but in reality, the data platform is the key to giving agents the correct context and memory."

One of the core updates in this release is Automated Voyage AI Embeddings in MongoDB Vector Search, now in public preview. The feature generates embedding vectors automatically whenever data is written or updated, cutting the setup of search infrastructure from weeks to minutes. MongoDB Chief Product Officer Pablo Stern noted that the bottleneck for enterprise AI in production is not the model but the data layer that gives agents reliable context and persistent memory. "Developers no longer need to build and maintain data infrastructure themselves, manually connect to embedding models, or manage synchronization between systems," he said.
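To make the idea of embeddings that are generated on every write or update more concrete, here is a minimal Python sketch of the general "embed on write" pattern. It is not MongoDB's implementation or API: `toy_embed` is a hypothetical hashing function standing in for a real Voyage AI model, and `AutoEmbeddingCollection` is a hypothetical in-memory collection used only for illustration.

```python
import hashlib
import math
from dataclasses import dataclass, field


def toy_embed(text: str, dims: int = 8) -> list[float]:
    """Hypothetical stand-in for an embedding model: hashes each token into
    one of `dims` buckets, then L2-normalizes. Not a real Voyage AI model."""
    vec = [0.0] * dims
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        vec[digest[0] % dims] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


@dataclass
class AutoEmbeddingCollection:
    """Hypothetical collection that keeps an `embedding` field in sync with a
    text field on every insert or update, so callers never call the model."""
    text_field: str = "text"
    docs: dict[str, dict] = field(default_factory=dict)

    def upsert(self, doc_id: str, doc: dict) -> dict:
        stored = dict(doc)
        # Re-embed on every write so the stored vector cannot go stale.
        stored["embedding"] = toy_embed(stored[self.text_field])
        self.docs[doc_id] = stored
        return stored

    def update_text(self, doc_id: str, new_text: str) -> dict:
        doc = dict(self.docs[doc_id])
        doc[self.text_field] = new_text
        # Routing updates through upsert() recomputes the embedding.
        return self.upsert(doc_id, doc)
```

In a managed service the re-embedding would happen inside the database rather than in application code; the sketch only shows why putting it on the write path removes the synchronization work Stern describes.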

Retrieval accuracy is a prerequisite for agent reliability. Voyage AI's embedding model ranks first on retrieval embedding benchmarks, which means agents can locate the correct information more accurately. Johnson explained that when most users encounter wrong agent output, their instinct is to upgrade to a larger, more expensive model, but the real crux is retrieval: a model can only reason over the information it is given, so if that data is inaccurate, outdated, or missing context, the output will inevitably be wrong.
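Johnson's point that a model only sees what retrieval hands it can be illustrated with a generic two-stage pipeline: a vector search stage that gathers candidates, followed by a reranking stage that reorders them before they reach the model. The Python sketch below assumes toy 2-D vectors and uses a hypothetical term-overlap scorer (`toy_rerank_score`) in place of a real reranker model; none of the function names come from MongoDB or Voyage AI.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def vector_search(query_vec: list[float], corpus: list[dict], k: int) -> list[dict]:
    """Stage 1: return the k documents whose vectors are closest to the query."""
    ranked = sorted(corpus, key=lambda d: cosine(query_vec, d["vec"]), reverse=True)
    return ranked[:k]


def toy_rerank_score(query: str, text: str) -> float:
    """Hypothetical stand-in for a reranker model: the fraction of query
    terms that also appear in the candidate text."""
    q = set(query.lower().split())
    t = set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


def retrieve_then_rerank(query: str, query_vec: list[float], corpus: list[dict],
                         k: int = 3, top_n: int = 1) -> list[dict]:
    """Stage 2: rescore the stage-1 candidates and keep the best top_n.
    Only these documents would be passed to the model as context."""
    candidates = vector_search(query_vec, corpus, k)
    candidates.sort(key=lambda d: toy_rerank_score(query, d["text"]), reverse=True)
    return candidates[:top_n]
```

If the right document never makes it into the candidate set, no amount of model capacity downstream can recover it, which is why retrieval quality, rather than model size, bounds answer quality in this setup.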

LangGraph.js Long-Term Memory Storage is now generally available, a key extension of MongoDB's memory capabilities. JavaScript and TypeScript developers, who were previously limited to short-term memory confined to a single conversation thread, can now give agents persistent, cross-conversation memory on MongoDB Atlas. Agents can therefore remember user preferences and interaction history and draw on them to keep learning and make better decisions.
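The difference between thread-scoped short-term memory and cross-conversation long-term memory can be shown with a small conceptual sketch. It is written in Python for consistency with the other examples and is not the LangGraph.js or MongoDB API: `ThreadHistory`, `LongTermMemory`, and `handle_turn` are hypothetical names, and the "I prefer ..." parsing is a deliberately naive placeholder for real preference extraction.

```python
from collections import defaultdict
from typing import Optional


class ThreadHistory:
    """Short-term memory: messages scoped to one conversation thread."""

    def __init__(self) -> None:
        self.threads: dict[str, list[str]] = defaultdict(list)

    def append(self, thread_id: str, message: str) -> None:
        self.threads[thread_id].append(message)


class LongTermMemory:
    """Hypothetical cross-conversation store: values are keyed by a
    namespace (for example a user id) and a key, independent of any thread."""

    def __init__(self) -> None:
        self._data: dict[tuple[str, str], str] = {}

    def put(self, namespace: str, key: str, value: str) -> None:
        self._data[(namespace, key)] = value

    def get(self, namespace: str, key: str) -> Optional[str]:
        return self._data.get((namespace, key))


def handle_turn(user_id: str, thread_id: str, message: str,
                history: ThreadHistory, memory: LongTermMemory) -> str:
    """Log the message to its thread, save a stated preference to long-term
    memory, and otherwise answer from long-term memory."""
    history.append(thread_id, message)
    prefix = "i prefer "
    text = message.lower()
    if text.startswith(prefix):
        memory.put(user_id, "preference", text[len(prefix):])
        return "Noted."
    pref = memory.get(user_id, "preference")
    return f"Your saved preference: {pref}" if pref else "I don't know your preference yet."
```

A preference stated in one thread (`"t1"`) is still available in a brand-new thread (`"t2"`) for the same user, while a different user sees nothing, because the long-term store is keyed by user rather than by conversation.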

To keep agents running reliably in production, MongoDB has also improved database performance. MongoDB 8.3, available immediately, delivers up to a 45% improvement in read performance, 35% in write performance, 15% in ACID transaction processing, and 30% in complex operation processing compared with version 8.0.

Consistency across environments and security are another pain point for enterprise agent deployments. MongoDB Atlas supports operation across multiple cloud platforms, including AWS, Google Cloud, and Microsoft Azure, as well as on-premises and hybrid deployments. Cross-region AWS PrivateLink connectivity is now generally available, ensuring that database traffic between MongoDB Atlas clusters in different AWS regions always stays within the AWS private network.

From an industry perspective, MongoDB's move effectively folds databases, vector search, embedding models, memory storage, and retrieval reranking into a single data infrastructure, so developers no longer have to assemble operational databases, vector stores, and multiple data pipelines separately. Consolidation also eliminates the latency and synchronization overhead that fragmented data architectures cause, helping enterprises move agent applications from the demo stage into genuine production deployment. As retrieval accuracy improves and operational complexity falls, the consensus that "the final missing piece for enterprise AI applications has always been a trustworthy data layer" is prompting the industry to reassess the gap between impressive model demos and mature infrastructure.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com