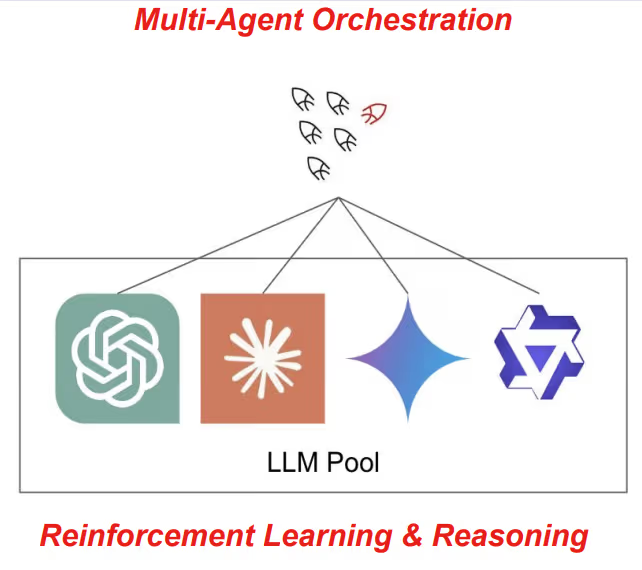

en.Wedoany.com Reported - Tokyo-based AI R&D company Sakana AI officially released RL Conductor on May 7, 2026. It is a small language model with only 7B parameters, trained via reinforcement learning to automatically orchestrate multiple large language models to collaboratively complete complex tasks. This achievement has been accepted by the top-tier machine learning conference ICLR 2026 and has been deployed commercially as the core technical foundation of Sakana Fugu, Sakana AI's multi-agent orchestration service platform.

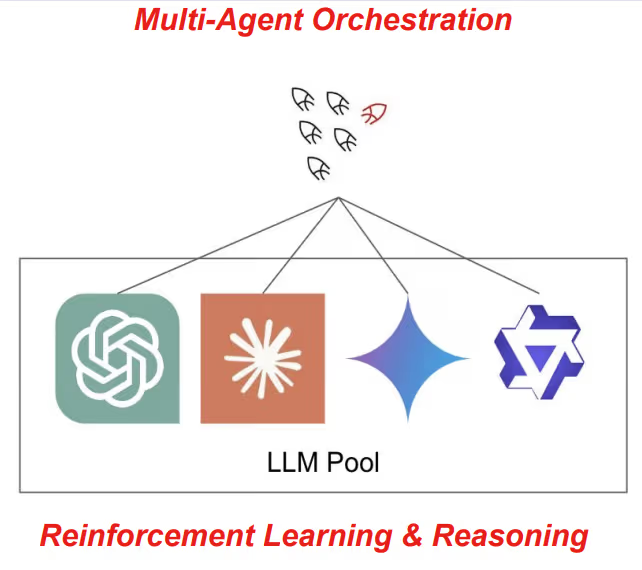

Yujin Tang, co-author of the RL Conductor paper, pointed out in an interview with VentureBeat that in production environments with large-scale heterogeneous demands, manually hardcoded agent pipelines encounter fundamental bottlenecks. In real-world scenarios, no single model is optimal for all tasks; different models have their own strengths in dimensions such as scientific reasoning, code generation, mathematical logic, or high-level planning. "Go beyond human-hardcoded designs" is precisely the design starting point for RL Conductor. Traditional manual orchestration solutions like LangChain or Mixture-of-Agents might be feasible in a single scenario, but once faced with large-scale heterogeneous demands, rigid rules become difficult to sustain. RL Conductor, through reinforcement learning, automatically discovers the optimal agent collaboration strategy end-to-end, training with a randomized pool of worker models, making it adaptable to any combination of open-source or closed-source models.

The core working mechanism of RL Conductor is: once a task is given, the model does not directly output the final answer but generates an agentic workflow in natural language form. This includes a sub-task description, the assigned target worker, and an access list determining which preceding results are visible at each step. These three components are parsed into Python lists of equal length. Training uses the GRPO algorithm with an extremely simple reward rule—0 points for format errors, 0.5 points for incorrect answers, and 1 point for completely correct answers, with no intermediate rewards or topological priors. The base model is Qwen2.5-7B, trained on only 960 questions over 200 iterations using 2 H100 GPUs.

After reinforcement learning training, RL Conductor gradually developed complex collaboration strategies: in LiveCodeBench programming tasks, it would have Gemini and Claude perform high-level planning first, then have GPT-5 write and optimize the code; for MMLU knowledge-based questions, it tends towards a concise 1-to-2-step process, while for programming questions, it unfolds a 3-to-4-step recursive verification process including a verifier. More crucially, in a recursive setting, it can observe the actual behavior of workers during testing and reallocate tasks from GPT-5 to Claude and Gemini, achieving dynamic error correction and task reallocation. This means RL Conductor does not simply route requests according to preset rules but can monitor worker output quality in real-time and dynamically adjust task allocation, forming a closed loop of adaptive correction.

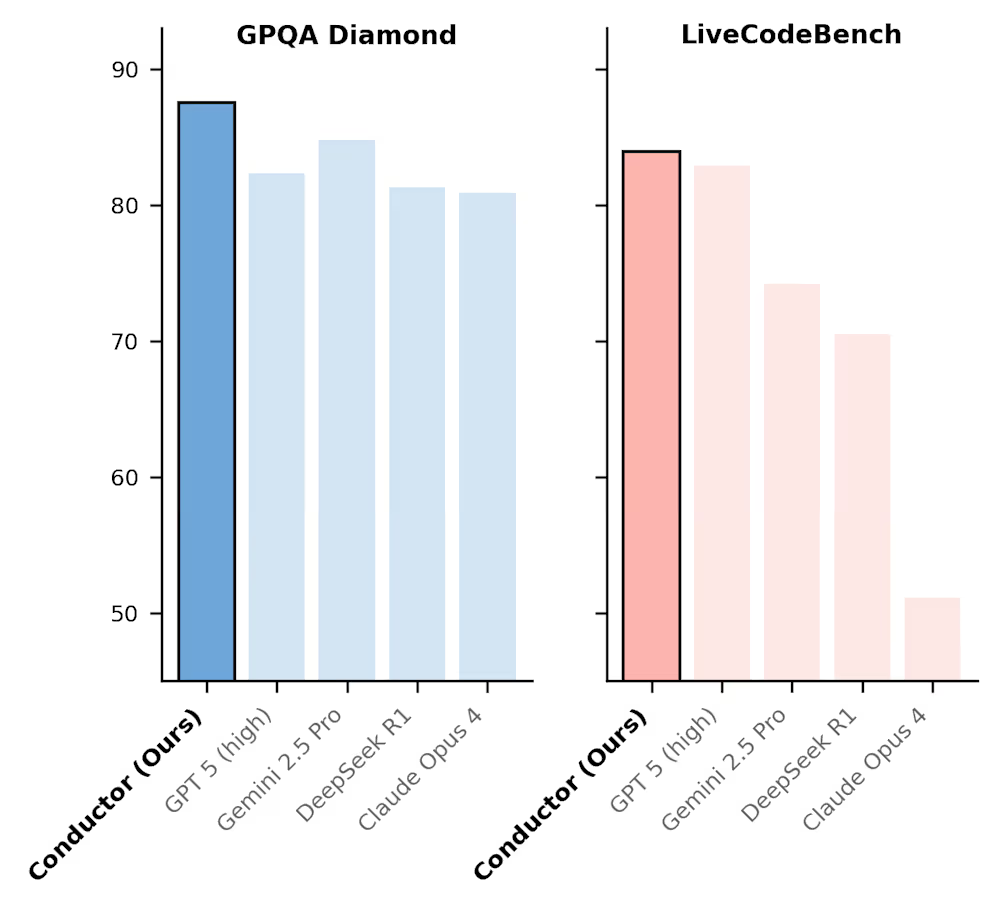

Performance is directly reflected in quantitative scores. RL Conductor achieved 87.5 on GPQA Diamond and 83.93 on LiveCodeBench, with an average score of 77.27, surpassing GPT-5's 74.78. In comparisons with single frontier models and expensive human-designed multi-agent solutions, RL Conductor reaches state-of-the-art levels with lower costs and fewer API calls. The model can also use itself as a worker for recursive topology expansion, achieving dynamic test-time scaling through online iterative adaptation, further converting inference computation into higher accuracy.

RL Conductor directly constitutes the technical foundation of Sakana AI's commercial product, Sakana Fugu. In April 2026, Sakana AI launched the Beta version of the Sakana Fugu multi-agent orchestration system, offering two configurations: Fugu Mini and Fugu Ultra. The former focuses on high-speed, low-latency orchestration, while the latter emphasizes full model pool utilization and deep, complex reasoning. Sakana AI is also incorporating another ICLR 2026 paper, the TRINITY coordinator, which manages a large model pool through the division of labor among three roles—Thinker, Worker, and Verifier—further enriching the multi-agent collaboration capabilities of the Fugu platform.

Sakana AI was founded in 2023, headquartered in Minato-ku, Tokyo, by former Google researchers David Ha (CEO), Llion Jones (CTO), and former Mercari manager Ren Ito (COO). The company name "Sakana" means "fish" in Japanese, symbolizing its core philosophy—just as a school of fish follows simple rules to form an orderly collective, AI should also emerge intelligence through nature-inspired mechanisms. In November 2025, the company completed approximately $135 million in Series B funding, reaching a post-money valuation of $2.65 billion, with investors including Mitsubishi UFJ Financial Group, Khosla Ventures, Macquarie Capital, NEA, Lux Capital, and In-Q-Tel. During the same period, the company expanded its engineering team hiring and accelerated commercial deployment in verticals such as finance, defense, and manufacturing.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com