en.Wedoany.com Reported - West Palm Beach, Florida, USA – May 6, 2026 (Local Time) – Vultr, the world's largest privately-held cloud infrastructure company, announced a strategic partnership with German open-source software company SUSE and American server and storage system manufacturer Supermicro. Together, they unveiled a unified Cloud-to-Edge AI architecture framework. The solution integrates high-performance hardware, localized cloud infrastructure, and a unified Kubernetes management system into a single stack. It targets the latency, bandwidth-cost, and operational-consistency problems that enterprises face when deploying AI where data is generated, such as factory floors and retail stores.

Kevin Cochrane, Chief Marketing Officer at Vultr, framed the collaboration in terms of data sovereignty and proximity: "As AI enters its next phase, the next challenge is data sovereignty and geographic proximity. We combine global reach with regional GPU acceleration capabilities, helping enterprises ensure that sovereign infrastructure for processing data is already deployed, in place, and ready to scale wherever data is generated."

Rhys Oxenham, Vice President and General Manager of Artificial Intelligence at SUSE, identified operating at scale as the biggest obstacle for edge ecosystems: "Leveraging SUSE's composable, distributed hybrid infrastructure model, we are layering SUSE AI on top of SUSE Edge, providing the automation capabilities needed for deploying models, updates, and security policies. Together with our partners, we are building a truly distributed and manageable AI system for the modern enterprise."

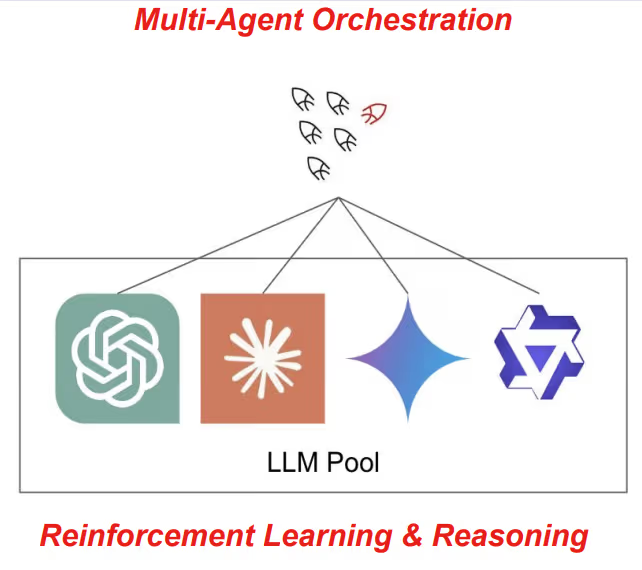

The solution divides the infrastructure into a three-layer collaborative architecture.

The cloud and near-edge layer relies on Vultr's 33 cloud data center regions worldwide, allowing enterprises to deploy regional, Kubernetes-based AI clusters closer to users. Environments can be programmatically replicated and scaled via the Cluster API, and can call on high-performance NVIDIA GPUs for inference when local edge capacity is insufficient.

The metro-edge layer targets edge environments that require ultra-low latency and low power consumption. Using validated solutions built on SUSE Linux Enterprise Server and SUSE Kubernetes Engine (RKE2 and K3s), it processes real-time workloads such as computer vision and sensor data directly at the data source.

The control layer is built on SUSE Rancher Prime and Fleet, which manage thousands of distributed sites through GitOps-driven workflows. Paired with SUSE AI, it keeps security policies, model updates, and configurations consistent across the entire software stack, from core data centers to edge devices. For industrial scenarios, SUSE Industrial Edge builds on this foundation to support private, on-premises deployment and deep integration with operational environments.
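The overflow behaviour described for the near-edge layer, where inference falls back to regional GPU clusters when local edge capacity runs out, can be illustrated with a minimal Python sketch. This is not taken from the announcement: the `Site` class, the `route` function, the site names, and the capacity figures are all hypothetical, and a real deployment would make this decision inside Kubernetes scheduling or a service mesh rather than in application code.

```python
from dataclasses import dataclass


@dataclass
class Site:
    """A hypothetical compute site (edge location or regional cluster)."""
    name: str
    capacity: int       # max concurrent inference requests (illustrative unit)
    in_flight: int = 0  # requests currently being served

    def has_room(self) -> bool:
        return self.in_flight < self.capacity


def route(edge: Site, regional: Site) -> str:
    """Prefer the edge site; overflow to the regional cluster when the edge is full."""
    target = edge if edge.has_room() else regional
    if not target.has_room():
        raise RuntimeError("no capacity at edge or regional cluster")
    target.in_flight += 1
    return target.name


if __name__ == "__main__":
    # Assumed example sites; names and capacities are made up for illustration.
    edge = Site("factory-floor", capacity=2)
    regional = Site("regional-gpu-cluster", capacity=100)
    # The first two requests stay local; the third overflows to the region.
    print([route(edge, regional) for _ in range(3)])
```

With the assumed capacities, the first two requests are served at the edge site and the third is sent to the regional cluster, which mirrors the "local first, region on overflow" pattern the announcement describes.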

Keith Basil, Vice President and General Manager of the Edge Business Unit at SUSE, further explained that when enterprises push intelligence to where data is generated, the edge is no longer a simple infrastructure layer but the operational system itself. SUSE Edge provides a consistent foundation between cloud and distributed environments, and SUSE Industrial Edge extends this model to on-site deployments. Combined with Vultr's cloud infrastructure and Supermicro's specialized hardware platforms, enterprises can transform insights into real-time action.

Supermicro emphasized that edge environments place extremely high demands on hardware's real-time resilience and thermal efficiency. Supermicro's edge servers and computing platforms excel in system-level design and energy efficiency engineering, capable of handling dense AI inference workloads without the infrastructure and cooling conditions of a traditional data center. Bill Wu, Vice President and General Manager of the Edge Business Unit at Supermicro, stated in the press release that the company's product portfolio can host high-performance AI workloads under constrained space, power, and cooling conditions, ensuring system-level consistency within the jointly validated ecosystem of Vultr and SUSE.

This is not the first time Vultr and SUSE have joined forces. In April 2026, Vultr partnered with SUSE and Dell Technologies to launch an open AI Kubernetes stack for cloud, on-premises, and edge environments, integrating Vultr's cloud computing and GPU instances with SUSE Rancher Prime and SUSE AI on PowerEdge servers. This time Supermicro takes the hardware-layer role in place of Dell, which broadens the range of hardware compatible with this open AI infrastructure architecture. Vultr is also continuing to advance its AI infrastructure strategy: it launched GPU and CPU cluster services for AI inference in January 2026 and already supports next-generation GPU instances such as the AMD MI300X.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com