Ahead of Mobile World Congress Barcelona 2026, Nokia's Chief Technology and AI Officer, Pallavi Mahajan, outlined a vision to transform telecom networks into distributed AI infrastructure. She identified deterministic latency, collaborative inference, and high-capacity optical interconnects as key to the success of physical AI systems. Using robots in dynamic environments as an example, Mahajan emphasized that future networks need to integrate wireless access, edge computing, core networks, IP routing, data center switching, and coherent optical transport into a unified architecture, rather than treating them as separate, independent modules.

Mahajan focused on distributed inference: a robot performs visual processing locally while offloading models that require millisecond-level latency to edge computing nodes. Fleet-level coordination is handled in central offices, while large-scale AI training and model updates run in distributed AI factories. This chain (wireless access and slicing, core control, IP transport, data center architecture, and optical interconnects) must ensure deterministic end-to-end latency and reliability. Performance measurement shifts from peak throughput to cross-domain guarantees, with workloads migrating automatically between edge and cloud when latency exceeds thresholds.
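The threshold-driven migration described here can be illustrated with a minimal Python sketch. It is not Nokia's implementation: the tier names, the `Workload` class, the `place` function, and all latency figures are hypothetical, and the rule "prefer the most centralized tier that still fits the latency budget" is an assumption made for illustration.

```python
from dataclasses import dataclass

# Hypothetical tiers, ordered from closest to the robot to most centralized.
TIERS = ["device", "edge", "central_office", "ai_factory"]

# Illustrative round-trip latencies in milliseconds (made-up numbers).
NOMINAL_MS = {"device": 0.0, "edge": 4.0, "central_office": 15.0, "ai_factory": 60.0}


@dataclass(frozen=True)
class Workload:
    name: str
    latency_budget_ms: float  # maximum tolerable round-trip latency


def place(workload: Workload, measured_ms: dict) -> str:
    """Pick the most centralized tier whose measured latency fits the budget.

    Falls back to on-device execution when no remote tier qualifies.
    """
    chosen = TIERS[0]
    for tier in TIERS:
        if measured_ms[tier] <= workload.latency_budget_ms:
            chosen = tier
    return chosen


if __name__ == "__main__":
    grasp = Workload("grasp_planning", latency_budget_ms=10.0)
    print(place(grasp, NOMINAL_MS))

    # Simulate congestion: edge latency now exceeds the workload's budget,
    # so re-running the placement moves the workload back onto the device.
    congested = dict(NOMINAL_MS, edge=12.0)
    print(place(grasp, congested))
```

Re-running `place` whenever fresh latency measurements arrive moves a workload between tiers once its budget is violated. A real system would also need to weigh compute capacity, migration cost, and reliability, which this sketch ignores.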

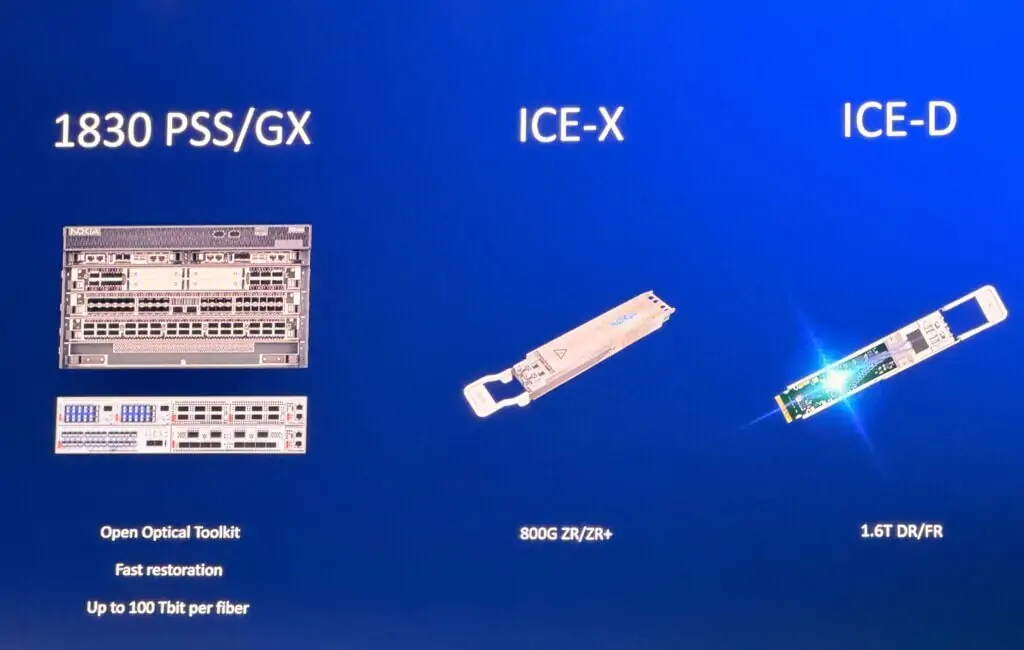

Nokia's strategy combines an AI-native software architecture with vertically integrated optical innovations. Mahajan said that telecom infrastructure adopts the same AI data center chips used in hyperscale environments, decoupling hardware from software and accelerating model iteration. Nokia also controls the optical stack end to end, from in-house DSP and photonic integration to coherent pluggable modules, supporting data center interconnects at 1.2 Tbps per interface over long distances. Optical technology thus becomes the foundation for scaling distributed AI, providing low-latency, high-capacity connections between GPU clusters and cross-regional AI factories. Commercial AI-RAN trials are planned for 2026, with a launch targeted for 2027.

Mahajan concluded, "The network is no longer just static connectivity for us. It is a distributed nervous system connecting intelligence." This deterministic AI network integrates GPU acceleration, optical transport, and wireless access, aiming to enhance the intelligence and efficiency of telecom systems.