en.Wedoany.com Report on March 26: Google Research has released the TurboQuant algorithm suite, a software breakthrough that targets the memory bottleneck of large language models (LLMs). By aggressively compressing the key-value (KV) cache, TurboQuant reduces KV cache memory by an average of 6x and speeds up attention computation by 8x, potentially cutting enterprise operating costs by more than 50%. The research paper is freely available, and the method can be applied without any training.

TurboQuant builds on two mathematical techniques, PolarQuant and Quantized Johnson-Lindenstrauss (QJL), and reduces quantization error through a two-stage process. In tests on models such as Llama-3.1-8B and Mistral-7B, the algorithm cut memory footprint by at least 6x while preserving model quality, and achieved an 8x speedup on hardware such as the NVIDIA H100.
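To make the two-stage idea more concrete, here is a toy sketch in pure Python. It is not Google's implementation: stage 1 is a plain per-vector uniform scalar quantizer standing in for PolarQuant, and stage 2 applies a QJL-style estimator to the leftover error, keeping only the sign of each random Gaussian projection plus the residual's norm. For a Gaussian vector s, E[(s·q)·sign(s·r)] = sqrt(2/π)·⟨q, r⟩/‖r‖, so rescaling by sqrt(π/2)·‖r‖ gives an unbiased estimate of ⟨q, r⟩. All function names and parameters here (`bits`, `m`, the seeds) are hypothetical.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def gaussian_matrix(m, d, seed=0):
    """Random projection S (m x d) with i.i.d. N(0, 1) entries, shared by encoder and decoder."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


# Stage 1: a simple stand-in (NOT TurboQuant's actual first stage):
# symmetric uniform scalar quantization with one scale per vector.
def scalar_quantize(x, bits=3):
    levels = 2 ** (bits - 1) - 1
    scale = max(abs(v) for v in x) / levels or 1.0  # avoid a zero scale for an all-zero vector
    return [round(v / scale) for v in x], scale


def dequantize(codes, scale):
    return [c * scale for c in codes]


# Stage 2: QJL-style 1-bit sketch (sign of each random projection, plus the vector's norm).
def qjl_encode(r, S):
    signs = [1 if dot(row, r) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(r, r))


def qjl_estimate(q, code, S):
    """Unbiased estimate of <q, r> from a full-precision q and the sign sketch of r."""
    signs, r_norm = code
    acc = sum(dot(row, q) * s for row, s in zip(S, signs))
    return math.sqrt(math.pi / 2) / len(S) * r_norm * acc


def encode_key(k, S, bits=3):
    """Compress a key: coarse codes from stage 1, then a sign sketch of the residual error."""
    codes, scale = scalar_quantize(k, bits)
    residual = [a - b for a, b in zip(k, dequantize(codes, scale))]
    return codes, scale, qjl_encode(residual, S)


def estimate_score(q, enc, S):
    """Attention-style score <q, k>: stage-1 approximation plus the QJL correction term."""
    codes, scale, res_code = enc
    return dot(q, dequantize(codes, scale)) + qjl_estimate(q, res_code, S)


d, m = 64, 128
rng = random.Random(1)
k = [rng.gauss(0.0, 1.0) for _ in range(d)]
q = [rng.gauss(0.0, 1.0) for _ in range(d)]
S = gaussian_matrix(m, d, seed=2)
enc = encode_key(k, S)
print("exact:", round(dot(q, k), 3), "estimate:", round(estimate_score(q, enc, S), 3))
```

The query stays in full precision and only the stored key is compressed. In this toy, the number of projections `m` trades extra bits per key for lower variance in the correction term; it is set generously so the estimate is stable, not tuned for memory efficiency.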

The community response has been enthusiastic. Technical analyst @Prince_Canuma tested TurboQuant on the Qwen3.5-35B model in MLX, reporting that 2.5-bit TurboQuant shrank the KV cache by nearly 5x with no loss in accuracy. User @NoahEpstein_ noted that the algorithm narrows the gap between local AI and cloud services, enabling consumer-grade hardware to handle longer contexts.
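For a sense of scale, KV cache size grows linearly with the number of layers, KV heads, head dimension, and context length, and with the bits stored per value. The sketch below assumes a Llama-3.1-8B-style shape (32 layers, 8 KV heads with grouped-query attention, head dimension 128), an illustrative choice rather than the Qwen model tested above, and computes the idealized cache size for a 128K-token context at 16 bits versus 2.5 bits per value. The function and variable names are hypothetical.

```python
GIB = 1024 ** 3


def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens, bits_per_value):
    """Idealized bytes to store keys and values for every layer, KV head, and token."""
    values = 2 * num_layers * num_kv_heads * head_dim * num_tokens  # 2 = one key + one value
    return values * bits_per_value / 8


# Assumed Llama-3.1-8B-style shape (illustrative only).
layers, kv_heads, head_dim = 32, 8, 128
tokens = 128 * 1024

fp16 = kv_cache_bytes(layers, kv_heads, head_dim, tokens, 16)
q25 = kv_cache_bytes(layers, kv_heads, head_dim, tokens, 2.5)
print(f"fp16: {fp16 / GIB:.1f} GiB, 2.5-bit: {q25 / GIB:.2f} GiB, ratio {fp16 / q25:.1f}x")
# fp16: 16.0 GiB, 2.5-bit: 2.50 GiB, ratio 6.4x
```

This 6.4x figure counts only the payload bits and ignores any metadata a real quantizer stores (such as scales or norms), so it is an upper bound rather than a prediction of measured savings.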

On the market side, shares of memory suppliers have trended downward, reflecting expectations that demand for high-bandwidth memory (HBM) may ease. For enterprises, TurboQuant offers immediate gains: they can optimize inference pipelines, extend the context lengths they process, and strengthen local deployments, all without retraining models.

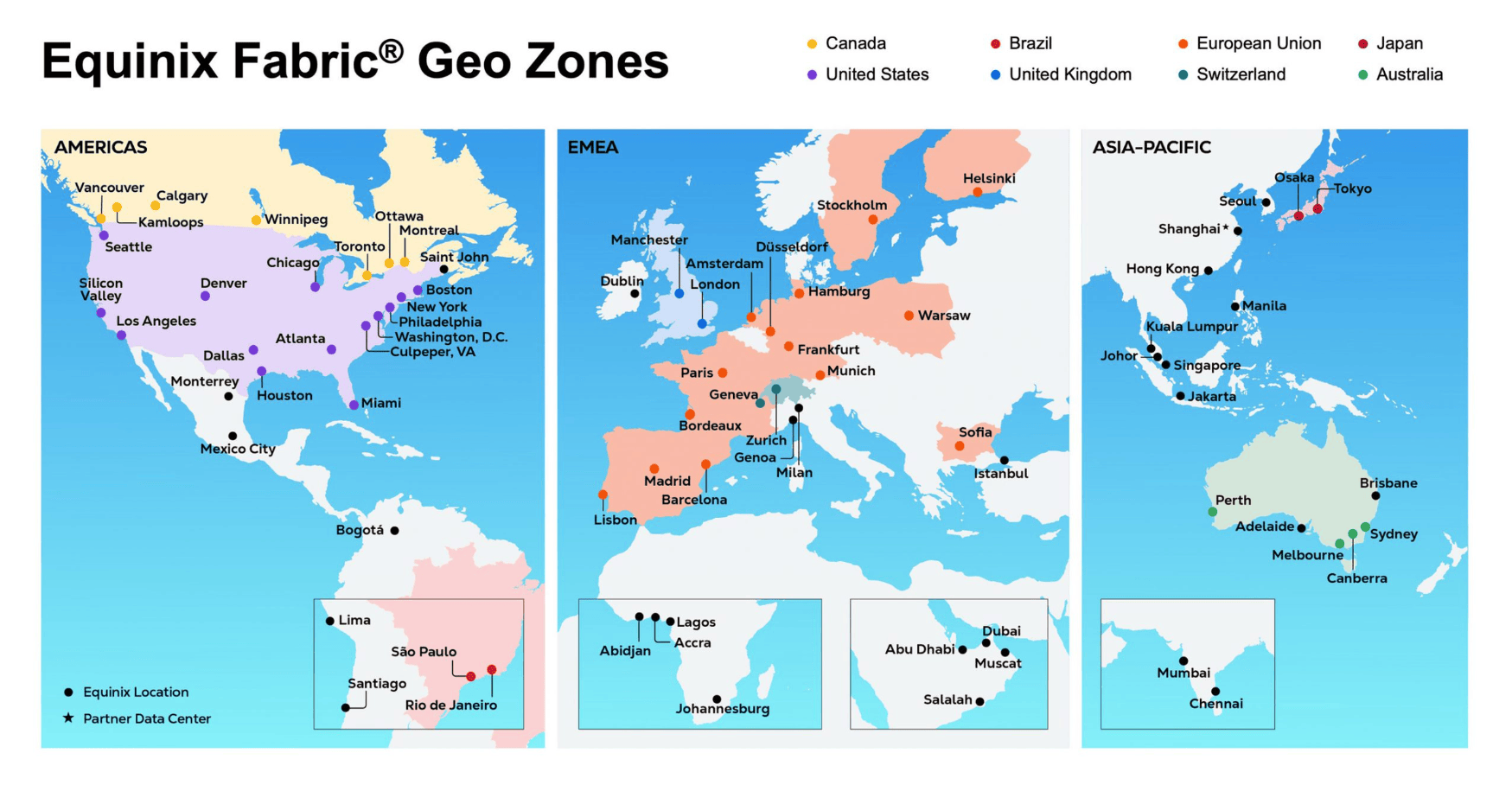

Google chose to release TurboQuant ahead of the ICLR 2026 conference in Rio de Janeiro, Brazil, and the AISTATS 2026 conference in Tangier, Morocco, signaling a shift from academic theory to production use. The algorithm provides efficient memory infrastructure for the era of AI agents and may push the industry toward "better memory."