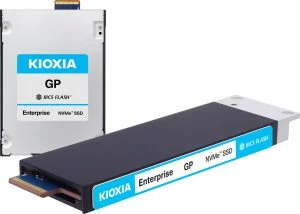

Wedoany.com Report, Mar 18th: Kioxia America, Inc. has announced the development of the KIOXIA GP series ultra-high IOPS SSD, a new type of storage device optimized specifically for AI GPU workloads. The SSD lets GPUs access high-speed flash memory directly, so that the flash serves as an extension of High Bandwidth Memory (HBM) in AI systems. It is designed to meet the rising performance demands of AI and high-performance computing by providing a larger GPU-accessible memory capacity, which accelerates data processing for AI workloads. Kioxia plans to make evaluation samples of the KIOXIA GP series available to select customers by the end of 2026.

NVIDIA's Storage-Next initiative addresses the shift from compute-intensive to data-intensive workloads and the expanding need for GPU-accessible memory space. Extending the GPU's available memory space allows access to larger datasets and improves GPU utilization by moving more data closer to computational resources. The initiative calls on SSD vendors to design drives specifically optimized for GPU-initiated AI workloads, effectively extending HBM capacity by enabling GPU access to flash-based storage.

Kioxia supports NVIDIA's initiative with the KIOXIA GP series SSDs. This series leverages low-latency, high-performance KIOXIA XL-FLASH storage-class memory, offering unique advantages including higher IOPS, finer-grained data access (512 bytes), and lower power consumption per I/O compared to Kioxia's conventional TLC SSDs. Makoto Hamada, Senior Director of SSD Division at Kioxia, stated, "Kioxia fully supports NVIDIA's Storage-Next initiative and will deliver SSDs specifically built to meet GPU-accessible memory needs. This collaboration is crucial for shaping the future of AI storage architecture."

AI models are expanding to trillions of parameters, and context windows are growing to millions of tokens, driving increased demand for key-value (KV) cache capacity. Architectures like NVIDIA's Context Memory Storage (CMX) require high-performance storage to extend the memory hierarchy beyond GPU memory. The KIOXIA CM9 series PCIe® 5.0 E3.S SSDs offer 25.6 TB of TLC capacity and 3 DWPD (drive writes per day) endurance, supporting the performance, capacity, and durability required for large-scale inference environments. Kioxia believes this type of storage will play a key role in scaling efficient, cost-optimized AI inference infrastructure. Samples will begin shipping in the third quarter of 2026.
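To put the endurance figure in perspective, the short Python sketch below converts the quoted capacity and DWPD rating into a daily write budget and an equivalent average write rate. It is illustrative arithmetic only: the function names are hypothetical, and it assumes decimal units (1 TB = 10^12 bytes) and writes spread evenly over a 24-hour day, neither of which is stated in the announcement.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_write_budget_tb(capacity_tb: float, dwpd: float) -> float:
    """Rated host writes per day in TB: capacity times drive writes per day."""
    return capacity_tb * dwpd


def average_write_rate_gb_per_s(capacity_tb: float, dwpd: float) -> float:
    """Average write rate in GB/s if the daily budget were spread evenly.

    Assumes decimal units (1 TB = 1000 GB); this is an illustrative
    simplification, not a vendor performance specification.
    """
    return daily_write_budget_tb(capacity_tb, dwpd) * 1000 / SECONDS_PER_DAY


# Figures quoted in the article for the KIOXIA CM9 series.
CM9_CAPACITY_TB = 25.6
CM9_DWPD = 3

print(f"{daily_write_budget_tb(CM9_CAPACITY_TB, CM9_DWPD):.1f} TB/day")
print(f"{average_write_rate_gb_per_s(CM9_CAPACITY_TB, CM9_DWPD):.2f} GB/s average")
```

Under these assumptions, a 25.6 TB drive rated at 3 DWPD can absorb roughly 76.8 TB of writes per day, which corresponds to a sustained average of a little under 0.9 GB/s.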

Kioxia will showcase the ultra-high IOPS SSD simulator and other technological innovations at the NVIDIA GTC exhibition (Booth 3522). The company says the showcase reaffirms its commitment to advancing AI and high-performance computing technology through continuous innovation and strategic partnerships, and that the KIOXIA GP series SSD family is designed to meet the evolving demands of AI workloads.