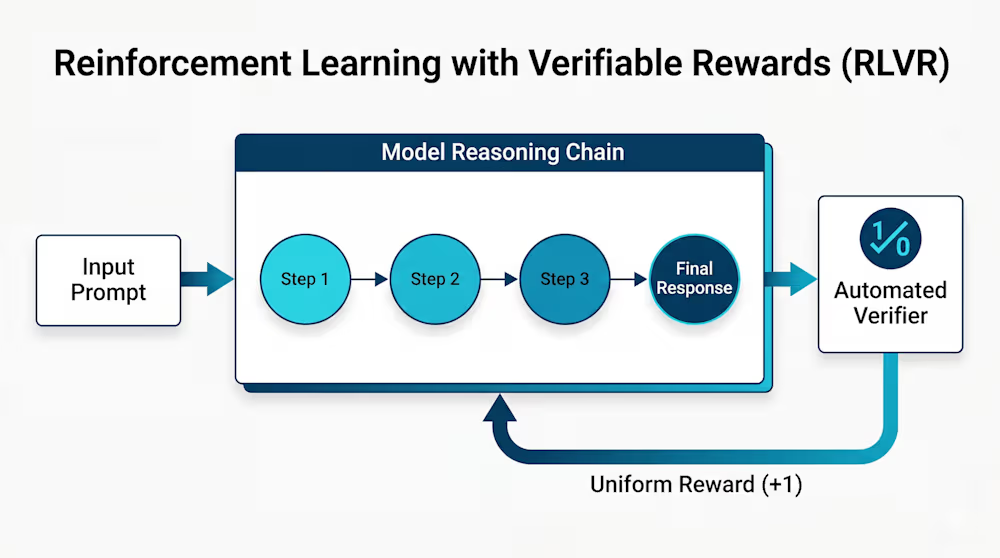

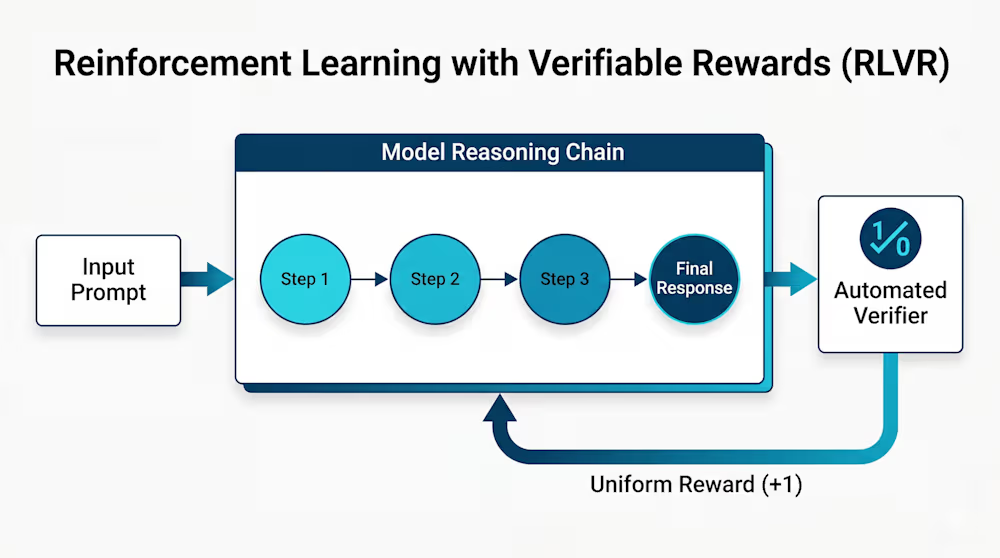

en.Wedoany.com Reported - The high cost of training AI reasoning models has long been a challenge for enterprise teams. Researchers from JD.com, in collaboration with multiple academic institutions, have proposed a new training paradigm called RLSD, designed to build custom reasoning agents with fewer computational resources. This technology combines reinforcement learning with self-distillation, addressing the problem of sparse signals or high computational overhead found in traditional methods.

In experiments, models trained with RLSD achieved an average accuracy of 56.18% on multiple visual reasoning benchmarks, surpassing both the base model and standard RLVR methods. According to Yang Chenxu, co-author of the paper, RLSD decouples the direction and magnitude of updates, using verifiable reward signals to determine the direction and achieving fine-grained, per-token feedback through self-distillation. This avoids information leakage issues and maintains training stability.

RLSD requires only one additional forward pass and converges approximately twice as fast as traditional methods. It is suitable for tasks with verifiable rewards, such as code compilation or mathematical verification, and can flexibly leverage privileged information. The technique can be lightly integrated into existing open-source frameworks, offering enterprises a new way to optimize models using internal data.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com