en.Wedoany.com reported: Recursive Superintelligence, an AI startup co-founded by renowned artificial intelligence researcher Richard Socher, emerged from stealth on May 13 and announced that it is developing recursively self-improving AI models. The company also announced that it has closed $650 million in early-stage financing at a post-money valuation of $4.65 billion. The round was co-led by GV (Google Ventures) and Greycroft, with participation from AMD Ventures and Nvidia.

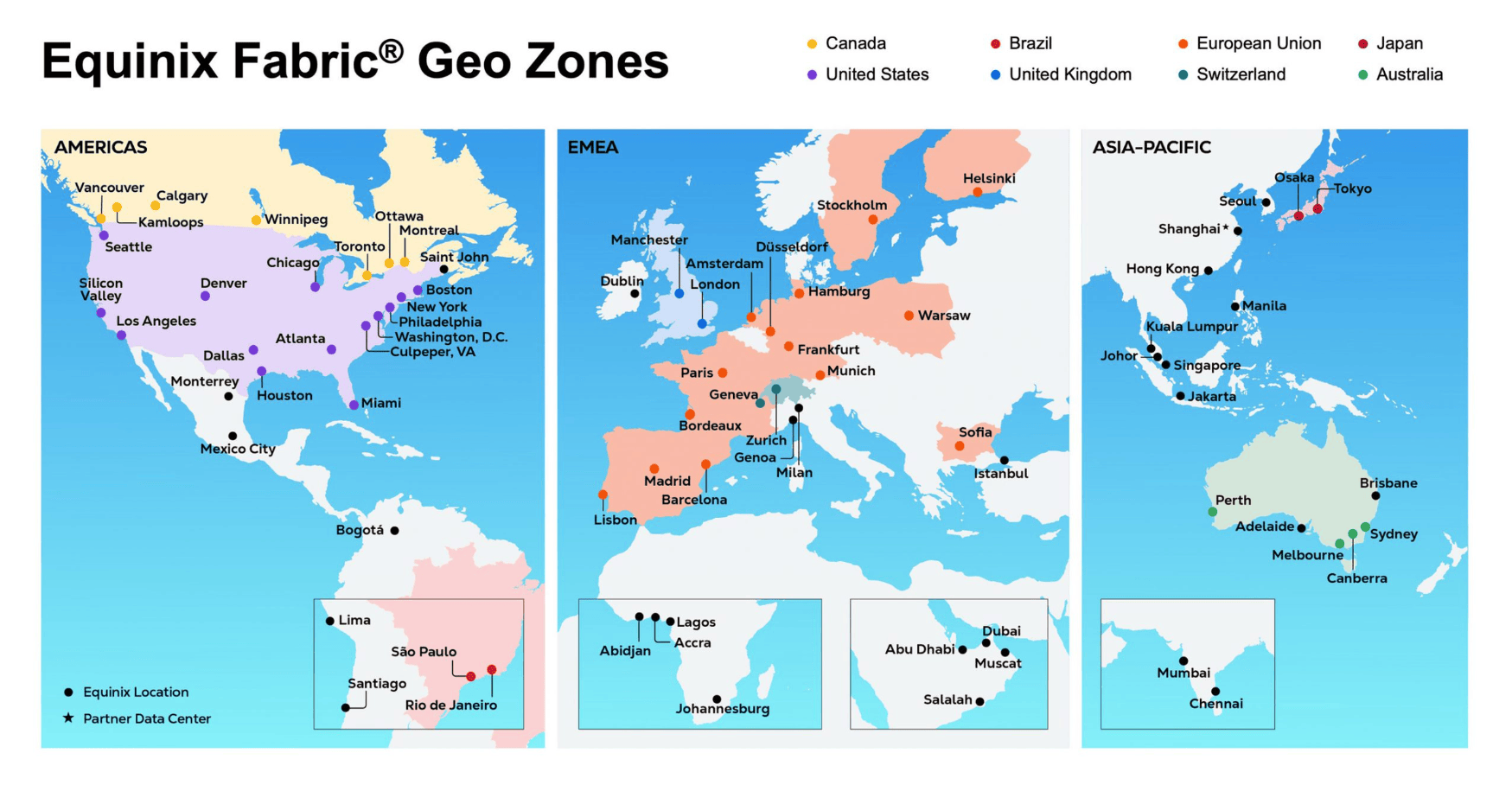

Recursive Superintelligence has eight co-founders drawn from the world's top AI labs. The team has more than 25 people, with R&D staff in San Francisco, USA, and London, UK. CEO Richard Socher, formerly Chief Scientist at Salesforce, is one of the most highly cited researchers in natural language processing, with more than 240,000 citations on Google Scholar. Co-founder Yuandong Tian spent nearly a decade at Meta FAIR, where he served as a Research Scientist Director and focused on reinforcement learning and large-model reasoning. The other six co-founders come from leading institutions such as OpenAI, Google DeepMind, and Salesforce AI: Alexey Dosovitskiy, first author of the Vision Transformer paper; Tim Rocktäschel, head of Open-Ended Intelligence at DeepMind; Jeff Clune, a co-author of the Darwin Gödel Machine; Josh Tobin, who built the OpenAI robotics team; Caiming Xiong, former Senior Vice President of Salesforce AI Research; and Tim Shi, an early OpenAI member and co-founder of the AI customer-service unicorn Cresta. Peter Norvig, co-author of "Artificial Intelligence: A Modern Approach," serves as an advisor.

The company's technical approach departs from the mainstream large-model path of scaling up data and parameters and focuses instead on "Recursive Self-Improvement" (RSI). Specifically, Recursive aims to build an AI system that can identify its own weaknesses, design fixes, and rewrite its own code without human intervention. Socher's core argument is: "AI itself is code, and now AI can already write code." He describes this direction as the third stage of neural network development. In the first stage, neural networks learned to extract features automatically, eliminating the need for feature engineers; in the second, unified models eliminated task-specific architectures; in the third, AI will learn to train itself, and this stage "may be the last one."

Recursive has outlined a preliminary roadmap. The first step is to train a system with the capability of "50,000 PhDs" that fully automates AI research, including evaluation, data filtering, training design, post-training alignment, and the choice of research directions. According to the company, once this closed loop is running, it will create a positive feedback loop: "AI improves itself → the improved AI is better at improving itself → the cycle continuously accelerates." The company expects to publicly release an autonomous training system codenamed "Level 1" by mid-2026. After validating that AI can improve AI, it plans to extend the platform to scientific fields such as drug discovery, battery materials, and fusion physics.

Recursive's launch comes amid a structural wave in which top AI scientists are leaving large companies and investors are concentrating their bets on recursive self-improvement. David Silver founded Ineffable Intelligence, which closed a $1.1 billion seed round at a $5.1 billion valuation; Yann LeCun's AMI Labs raised $1 billion; and Ilya Sutskever's SSI also carries a high, undisclosed valuation. Recursive joins this cohort with a $4.65 billion first-round valuation, reached just four months after its founding and without a public product, which reflects investors' clear willingness to back recursive self-improvement as a technical path.

Nvidia's and AMD's participation in this round is strategically significant. The two chipmakers supply most of the hardware used for frontier AI training worldwide. Their decision to invest in a company dedicated to automating AI R&D, an approach that demands exponentially growing compute, suggests they see recursive self-improvement as a near-term driver of compute demand. The new funds will go toward expanding computing infrastructure and operations in San Francisco and London, accelerating development of the automated scientific discovery platform, and growing the team.

This article was compiled by Wedoany. AI citations must credit "Wedoany" as the source. For infringement or other concerns, please notify us promptly, and we will modify or delete the content accordingly. Email: news@wedoany.com