en.Wedoany.com report, March 23: Data released by OpenRouter, the world's largest AI model API aggregation platform, show that as of March 15 the weekly call volume of Chinese large AI models reached 4.69 trillion tokens, surpassing that of the United States for the second consecutive week and ranking first globally. In the single-model ranking, Chinese models took all of the top three positions by global call volume, a sign of the momentum of China's AI industry in application and deployment.

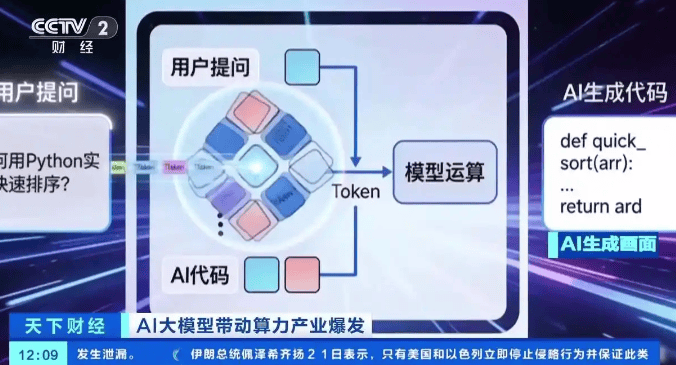

In AI, a token is the smallest unit used to measure the information a model processes. Whether the input is a user's question or the output is AI-generated code, text, or images, everything is ultimately broken down into tokens for computation. Token call volume is therefore regarded as a key metric of a model's activity and industrial value: the higher the call volume, the more frequently the model is actually used and the greater the value it creates.

Coinciding with the release of this data, JPMorgan issued a forecast for China's AI computing power demand. The institution predicts that China's AI inference token consumption will grow from approximately 10 quadrillion tokens in 2025 to about 3,900 quadrillion tokens in 2030, an increase of about 370 times over five years. This growth rate suggests that, as large AI models penetrate more industries, demand for computing power in the inference phase is rising faster than expected.
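As a rough check on what these figures imply, the short Python sketch below works through the arithmetic. It uses only the rounded numbers quoted above; the variable and function names are illustrative, and the annualized rate assumes smooth compound growth over the five years, which the forecast as reported here does not specify.

```python
# Rounded figures as quoted in the article, in quadrillions of tokens.
tokens_2025 = 10
tokens_2030 = 3_900
years = 2030 - 2025

# Multiple implied by the two rounded endpoints.
multiple_from_endpoints = tokens_2030 / tokens_2025

# Multiple quoted in the article ("about 370 times").
quoted_multiple = 370

def annual_factor(multiple: float, n_years: int) -> float:
    """Constant yearly growth factor that compounds to `multiple` over `n_years`.

    Assumption: growth is smooth and compounding, which is a simplification.
    """
    return multiple ** (1 / n_years)

# 2025 base that would make the quoted multiple exact, given the 2030 figure.
implied_base = tokens_2030 / quoted_multiple

print(f"Multiple from rounded endpoints: {multiple_from_endpoints:.0f}x")
print(f"Implied 2025 base for a {quoted_multiple}x multiple: {implied_base:.2f} quadrillion")
print(f"Annual growth factor at {quoted_multiple}x: {annual_factor(quoted_multiple, years):.2f}")
```

The rounded endpoints give a multiple of 390 rather than 370. The gap is consistent with the 2025 figure being rounded down to "approximately 10", though that is an inference rather than something the report states. Under either multiple, the implied growth is roughly 3.3 times per year.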

Analysts point out that China's lead over the United States in AI model call volume for two consecutive weeks reflects not only the country's vast range of application scenarios and large user base, but also the sustained work of domestic model developers on open-source ecosystems, developer communities, and commercial deployment. From general conversation to professional programming, and from content generation to agent applications, Chinese models are achieving large-scale adoption across multiple dimensions.

As inference costs continue to decline and model capabilities improve, the rapid growth in token consumption is expected to persist. It also indicates that AI is moving faster from a technological concept to a productivity tool that is deeply embedded in the workings of the economy and society.