en.Wedoany.com Reported - Broadcom and Meta announced a multi-year, multi-generation strategic collaboration on April 14, 2026 (local time) to support Meta's rapidly expanding artificial intelligence computing infrastructure. Building on their existing partnership, Broadcom will provide technical support for the Meta Training and Inference Accelerator (MTIA) chips. The two parties plan to extend the collaboration until 2029, and the technology will form the foundation of Meta's cutting-edge AI data center deployments. The initial phase of the collaboration exceeds 1 gigawatt in scale, which Meta describes as "the first phase of an ongoing multi-gigawatt compute deployment."

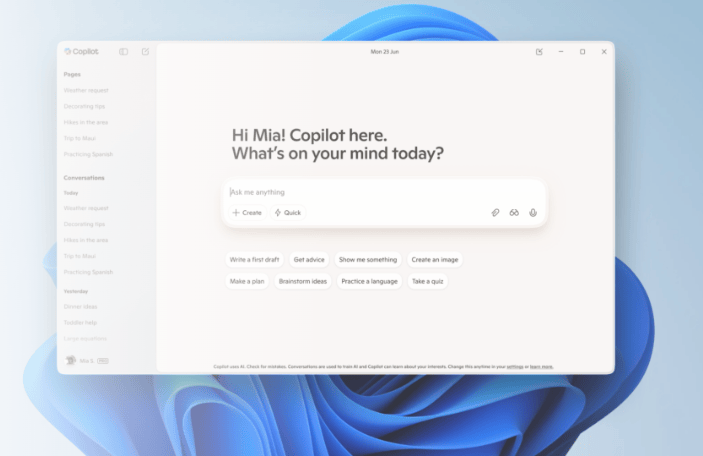

MTIA is the core of Meta's in-house AI processor effort. The first chip, MTIA 300, is already used in Meta's ranking and recommendation systems. Three additional chips are scheduled for release by 2027, with subsequent generations specifically optimized for inference, the process by which AI models respond to user queries. Meta unveiled its roadmap for four new chips last month, and the collaboration with Broadcom is expected to accelerate the implementation of its custom chip strategy. Meta CEO Mark Zuckerberg stated that this partnership helps "build the massive compute foundation we need to deliver personal superintelligence to billions of people."

This collaboration leverages Broadcom's XPU custom accelerator platform, covering chip design, advanced packaging, and networking. The platform tightly couples logic units, memory, and high-speed input/output interfaces, supporting current deployments and establishing a highly scalable joint development roadmap for future generations. Broadcom President and CEO Hock Tan said that this initial MTIA deployment is just the beginning of a sustained multi-generation roadmap that will serve large-scale growth needs for years to come, and that it demonstrates Broadcom's leadership in AI networking and the capabilities of its XPU custom accelerator platform.

On the network side, Broadcom's Ethernet technology will connect Meta's continuously expanding AI compute clusters. Broadcom's high-radix Ethernet switches, optical interconnect products, PCIe switches, and high-speed SerDes technology will form a low-latency network fabric spanning tens of thousands of nodes, alleviating network congestion under heavy AI workloads. The architecture covers three types of requirements: scale-up (vertical scaling within racks), scale-out (horizontal scaling between nodes), and scale-across (cross-domain interconnection), providing the connectivity foundation for the large-scale deployment of MTIA chips.

Given the scale of this expanded collaboration, Broadcom President and CEO Hock Tan will step down from Meta's board of directors and transition to an advisory role at Meta, focusing on guiding Meta's custom chip roadmap and infrastructure investments. Tan joined Meta's board in early 2024 to help Meta strengthen its hardware infrastructure and supply chain. This role change signals a shift in the partnership from capital alignment towards deeper technological and business collaboration. Meta also announced that Tracey Travis, who has served as a director since 2020, will not seek re-election at the company's annual shareholder meeting.

As AI drives a surge in compute demand, tech giants like Meta, Google, and Amazon are developing their own chips to reduce reliance on Nvidia's expensive processors. The custom chip boom has made Broadcom one of the key beneficiaries in the generative AI field, as the company develops custom processors and provides infrastructure software for clients. By positioning the MTIA strategy as a core pillar of its chip ecosystem, Meta aims to continuously optimize performance, power consumption, and scaling costs, providing the compute power to support real-time generative AI services on products like WhatsApp, Instagram, and Threads.

This article is compiled by Wedoany.