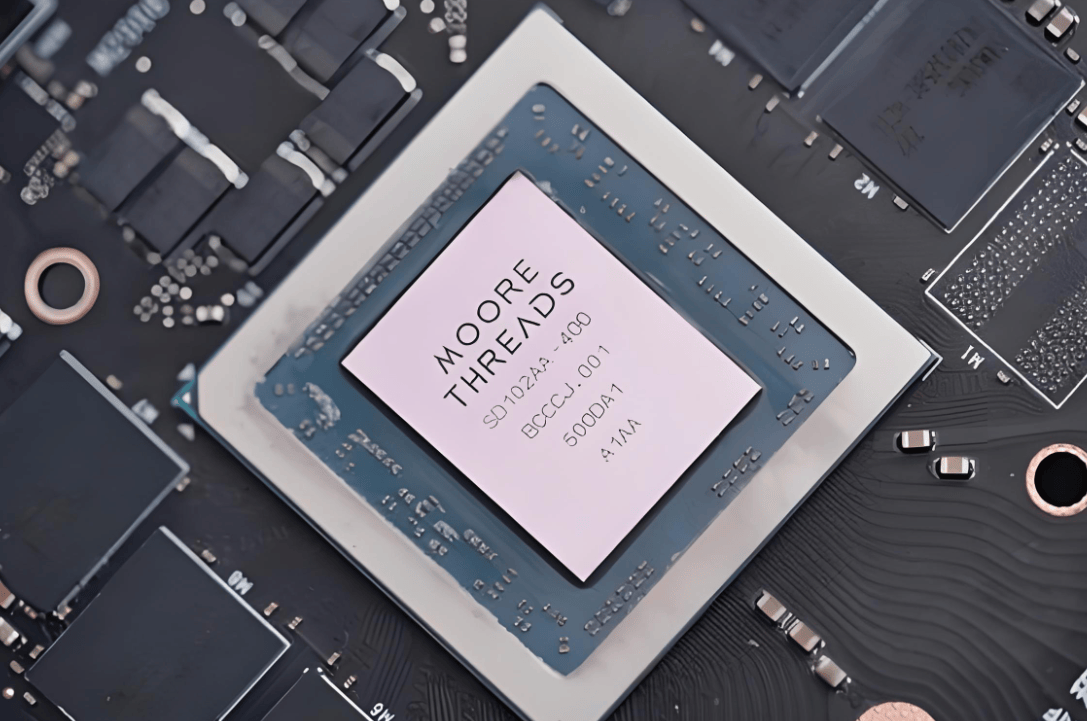

en.Wedoany.com reported: On April 24, Moore Threads announced that TileLang-MUSA, a project built on TileLang version 0.1.8, deeply optimized for the MUSA architecture, and deployed across its full-feature GPUs, has achieved "Day-0" support for TileKernels, the latest TileLang operator library released for DeepSeek-V4. Moore Threads also disclosed that unit test coverage for native TileLang operators on the MUSA architecture has exceeded 95%, providing a directly reusable engineering foundation for the rapid migration, verification, and performance optimization of key large-model operators.

On the same day, DeepSeek officially released and open-sourced the DeepSeek-V4 series of models in two versions, DeepSeek-V4-Pro and DeepSeek-V4-Flash, both natively supporting a 1 million token context. The TileKernels operator library, released alongside the models, targets core operator scenarios for large language models and follows a design philosophy of high performance, composability, and verifiability. In terms of compute density and memory bandwidth, most of its operators already approach the theoretical limits of the hardware. Moore Threads completed the adaptation and functional verification of TileKernels on the day of the model's release, so developers can call these highly optimized kernels directly on MUSA architecture GPUs without waiting for a later port.

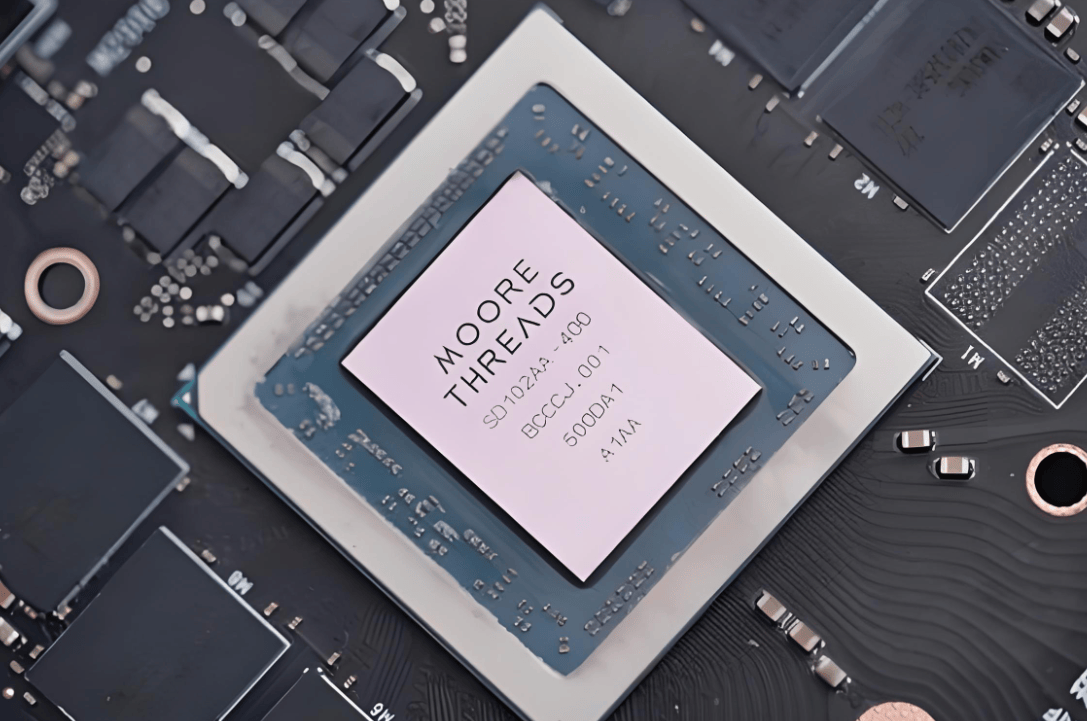

Moore Threads first open-sourced the TileLang-MUSA project on February 10, 2026, achieving functional integration across multiple generations of full-feature GPUs such as the MTT S4000 and MTT S5000. At that time, operator code written in TileLang was approximately 90% shorter than handwritten MUSA C++ versions, and the operator coverage rate stood at 80%. After more than two months of continuous iteration, unit test coverage has risen to over 95%, covering core Transformer operators such as matrix operations, attention mechanisms, and normalization.

TileLang itself is a domain-specific language built on tensor tiling abstractions, with a Python-like frontend and declarative syntax. Its compiler automatically performs loop optimizations, memory scheduling, and code generation, significantly lowering the barrier to entry for heterogeneous GPU computing while maintaining cross-platform portability. As the MUSA architecture implementation of TileLang, TileLang-MUSA stays closely synchronized with the upstream community codebase, so it can inherit the latest features and performance optimizations promptly.
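To make the idea of a tensor tiling abstraction concrete, here is a minimal sketch in plain Python. It is not TileLang code and uses no TileLang or MUSA API; the function names `tiled_matmul` and `naive_matmul` and the `tile` parameter are hypothetical, chosen only for illustration. It shows the loop structure that a tiling compiler would typically generate and map onto GPU thread blocks and on-chip memory.

```python
def naive_matmul(a, b):
    """Reference matrix multiply on nested lists: a is m x k, b is k x n."""
    m, k, n = len(a), len(b), len(b[0])
    return [[sum(a[i][kk] * b[kk][j] for kk in range(k)) for j in range(n)]
            for i in range(m)]


def tiled_matmul(a, b, tile=2):
    """Same result as naive_matmul, computed one tile at a time.

    Illustrative assumption: on a GPU, each (i0, j0) output tile would be
    handled by one thread block, and each k0 step would stage tile-sized
    slices of a and b in fast on-chip memory before multiplying them.
    """
    m, k, n = len(a), len(b), len(b[0])
    c = [[0] * n for _ in range(m)]
    for i0 in range(0, m, tile):              # rows of output tiles
        for j0 in range(0, n, tile):          # columns of output tiles
            for k0 in range(0, k, tile):      # split the reduction dimension
                # Work inside one tile; min() handles ragged edges when the
                # matrix size is not a multiple of the tile size.
                for i in range(i0, min(i0 + tile, m)):
                    for j in range(j0, min(j0 + tile, n)):
                        acc = c[i][j]
                        for kk in range(k0, min(k0 + tile, k)):
                            acc += a[i][kk] * b[kk][j]
                        c[i][j] = acc
    return c


if __name__ == "__main__":
    a = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    b = [[9, 8, 7], [6, 5, 4], [3, 2, 1]]
    print(tiled_matmul(a, b, tile=2) == naive_matmul(a, b))
```

In a tiling DSL the programmer would state only the tile shapes and the per-tile computation, leaving the explicit loop nest, edge handling, and memory staging shown here to the compiler.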

Inference deployment of DeepSeek-V4-Flash also achieved Day-0 availability. Working with the Beijing Academy of Artificial Intelligence (BAAI) FlagOS community, Moore Threads completed the rapid adaptation of this model on MTT S5000 GPUs. Built on the fourth-generation MUSA architecture "Pinghu," the MTT S5000 delivers up to 1000 TFLOPS of single-card AI computing power. It is equipped with 80GB of HBM2e memory, offers an inter-card interconnect bandwidth of 784GB/s, and fully supports the FP8, FP16, BF16, FP32, and FP64 floating-point precisions. Because FP8 stores each value in one byte instead of the two bytes used by BF16 or FP16, it cuts memory bandwidth requirements by 50%, while theoretical computational throughput is doubled, offering significant performance and cost advantages for large model inference.
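The 50% figure follows directly from storage width, as the short sketch below illustrates. The helper names and the 10-billion-parameter model are hypothetical and do not describe any DeepSeek-V4 configuration; the sketch covers only weight storage, and the doubling of compute throughput is a hardware property that this arithmetic does not derive.

```python
# Storage width of each floating-point format, in bytes per value.
BYTES_PER_ELEMENT = {"FP8": 1, "FP16": 2, "BF16": 2, "FP32": 4, "FP64": 8}


def weight_bytes(num_params, dtype):
    """Bytes needed to store num_params weights in the given format."""
    return num_params * BYTES_PER_ELEMENT[dtype]


def traffic_ratio(dtype, baseline):
    """Memory traffic per value for dtype, relative to baseline."""
    return BYTES_PER_ELEMENT[dtype] / BYTES_PER_ELEMENT[baseline]


if __name__ == "__main__":
    params = 10 * 10**9  # hypothetical 10-billion-parameter model
    for dtype in ("BF16", "FP8"):
        gigabytes = weight_bytes(params, dtype) / 10**9
        print(f"{dtype}: {gigabytes:.0f} GB of weights")
    print("FP8 vs BF16 traffic ratio:", traffic_ratio("FP8", "BF16"))
```

For this hypothetical model, the weights take 20 GB in BF16 and 10 GB in FP8, so every pass over the weights moves half as many bytes.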

From GLM-5.1 and MiniMax M2.7 to DeepSeek-V4, Moore Threads has consistently delivered release-day support for cutting-edge large models, in both operator libraries and model inference deployment. Across these successive rounds of technical validation, its rapid migration pathway for key large-model operators, its mature operator toolchain, and its software-hardware co-optimization capabilities have come together to form a closed loop.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com