en.Wedoany.com Reported - The Seattle-based Allen Institute for Artificial Intelligence (Ai2) open-sourced its next-generation robot foundation model, MolmoAct 2, on May 5, 2026, local time. The model keeps the fully open stack of its predecessor while completing real-world tasks 37 times faster, and it outperforms commercially available closed-source robot models on multiple benchmarks. Alongside the model, Ai2 released MolmoAct 2-Bimanual YAM, the world's largest open-source dataset for bimanual manipulation, containing over 720 hours of dual-arm collaborative demonstration data.

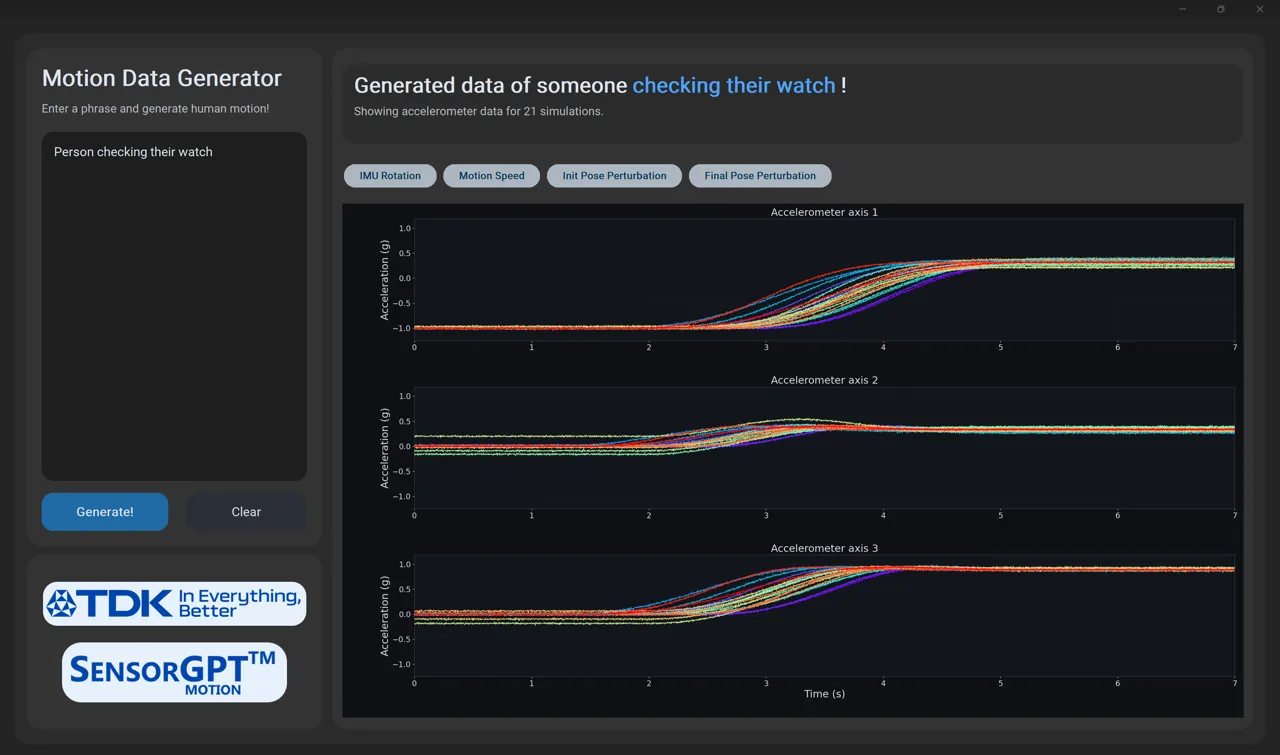

MolmoAct 2 is not a simple extension of the previous model but a ground-up architectural rebuild on Molmo 2-ER, a vision-language backbone designed specifically for embodied reasoning. The training data comprises over 3 million samples covering image pointing, object detection, abstract spatial reasoning, multi-image reasoning, and spatial question answering over images and videos. On this foundation, MolmoAct 2 integrates a dedicated "action expert" module that generates robot action commands through 3D spatial reasoning, eliminating redundant steps in the decision-making pathway. Ai2 explains that the 37x speedup refers not to the speed of a single inference pass but to end-to-end task completion, from receiving an instruction to executing the physical action, meaning the model has improved significantly at both "understanding what to do" and "planning how to do it."

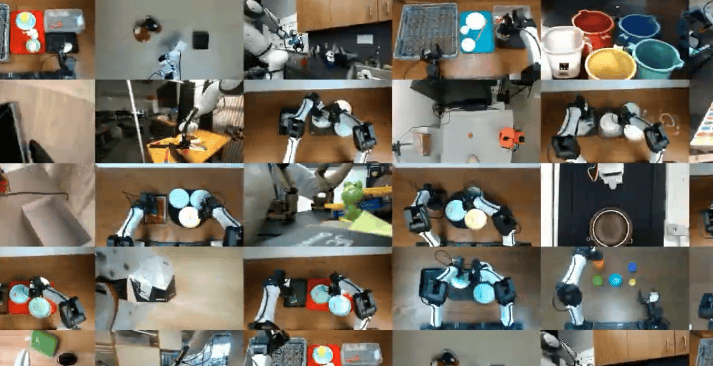

The dataset released alongside the model targets a known industry pain point. In robotics, bimanual manipulation refers to two robotic arms collaborating on a single task, such as folding towels, scanning goods, charging a phone, or clearing a tabletop. These actions require precise coordination rather than independent operation. With over 720 hours of demonstration data, MolmoAct 2-Bimanual YAM is currently the largest publicly available dataset of its kind. The Ai2 research team also re-annotated the robot data, roughly doubling the number of unique labels from approximately 71,000 to about 146,000 while reducing duplicate instructions and low-quality annotations to improve the diversity of language commands. The dataset further incorporates data from a variety of robot arm types, camera configurations, control schemes, and task styles to help the model generalize across different hardware and scenarios.
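To illustrate what counting "unique labels" and collapsing duplicate instructions can look like in practice, here is a minimal Python sketch. It is not Ai2's re-annotation pipeline, which the report does not describe; the `normalize` and `unique_label_stats` helpers and the sample instructions are hypothetical, and the normalization rule (lowercasing, stripping punctuation, collapsing whitespace) is an assumption chosen for simplicity.

```python
import re
from collections import Counter


def normalize(instruction: str) -> str:
    """Map trivially different phrasings to one canonical string (assumed rule)."""
    text = instruction.lower()
    text = re.sub(r"[^\w\s]", "", text)       # drop punctuation
    return re.sub(r"\s+", " ", text).strip()  # collapse repeated whitespace


def unique_label_stats(instructions):
    """Count total instructions and how many remain unique after normalization."""
    counts = Counter(normalize(s) for s in instructions)
    return {"total": sum(counts.values()), "unique": len(counts)}


# Hypothetical sample instructions, not taken from the dataset.
sample = [
    "Fold the towel.",
    "fold the towel",
    "Fold  the towel!",
    "Plug the charger into the phone",
    "Clear the table",
]
print(unique_label_stats(sample))  # {'total': 5, 'unique': 3}
```

A real pipeline would likely need semantic rather than purely textual matching, since two instructions can describe the same task in entirely different words.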

In the real-world validation phase, Ai2 collaborated with the Cong Lab at Stanford University School of Medicine on a preliminary assessment of MolmoAct 2's reliability in a gene editing wet lab. Wet lab work involves extensive benchtop operations, including moving between workstations, high-precision pipetting, and equipment handling, where accumulated errors can quickly render an entire batch of experiments unusable. After multiple rounds of practical testing, the Stanford team found that MolmoAct 2 showed potential to assist reliably with wet lab operations. Ai2 also stress-tested the model, evaluating its performance under rephrased instructions, object displacement, distractors, and object substitution. Ai2 acknowledged that the model still has limitations when the camera is occluded by the gripper or when the robot arm's speed cannot keep up with the control system's cadence.
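A stress test of this kind is typically summarized as a success rate per perturbation condition. The Python sketch below shows one way to tally such results, assuming each trial is logged as a (condition, succeeded) pair. The `success_rate_by_condition` helper, the condition names, and the trial outcomes are all hypothetical placeholders; they are not Ai2's evaluation code or reported results.

```python
from collections import defaultdict

# Condition names mirror the four perturbations named in the report;
# the identifiers themselves are assumptions.
PERTURBATIONS = (
    "rephrased_instruction",
    "object_displacement",
    "distractors",
    "object_substitution",
)


def success_rate_by_condition(trials):
    """Return the fraction of successful trials for each condition seen."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for condition, succeeded in trials:
        totals[condition] += 1
        wins[condition] += int(succeeded)
    return {c: wins[c] / totals[c] for c in totals}


# Made-up outcomes for illustration only, not measured data.
trials = [
    ("rephrased_instruction", True),
    ("rephrased_instruction", False),
    ("object_displacement", True),
    ("distractors", True),
    ("distractors", True),
    ("object_substitution", False),
]

rates = success_rate_by_condition(trials)
for condition in PERTURBATIONS:
    print(f"{condition}: {rates[condition]:.0%}")
```

Reporting each condition separately, rather than one pooled number, makes it visible which kind of perturbation degrades performance most.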

As a non-profit research institution, Ai2 has fully open-sourced MolmoAct 2's model weights, training code, and complete training data, allowing researchers to build on and adapt the model. The model is trained and adapted for mainstream, low-to-medium-cost commercial and research robots, so ordinary academic labs and independent researchers can use it out of the box. On 13 embodied reasoning benchmarks, MolmoAct 2 surpasses closed-source models such as GPT-5 and Gemini Robotics ER-1.5, giving the robot learning community a reproducible research baseline.
