en.Wedoany.com Reported - Silicom Ltd., a networking and data center infrastructure provider headquartered in Kfar Sava, Israel, announced on May 5, 2026 (local time) that an AI infrastructure startup has selected its high-performance dedicated inference solution for a Proof-of-Concept (PoC) project whose primary target is a Tier-1 hyperscale cloud service provider. The PoC is scheduled to begin in the second half of 2026. To prepare for it, the customer has already placed an initial order, with delivery expected in the first half of 2026.

According to the announcement, if the PoC succeeds, the customer is expected to move to an initial scaled deployment phase requiring tens of thousands of Silicom units, each priced at several thousand dollars.

In the official release, Silicom CEO Liron Eizenman said that the AI industry is undergoing a structural shift from capital-intensive training build-outs to large-scale global inference deployment, and that inference will soon account for the vast majority of AI compute spending. As query volumes grow exponentially, he noted, general-purpose GPUs are no longer the most scalable option for pure inference workloads, and the industry is turning to specialized architectures that can significantly lower total cost of ownership per token, improve energy efficiency, and optimize performance. He added that Silicom's early strategic collaborations validate the company's technological advantages.

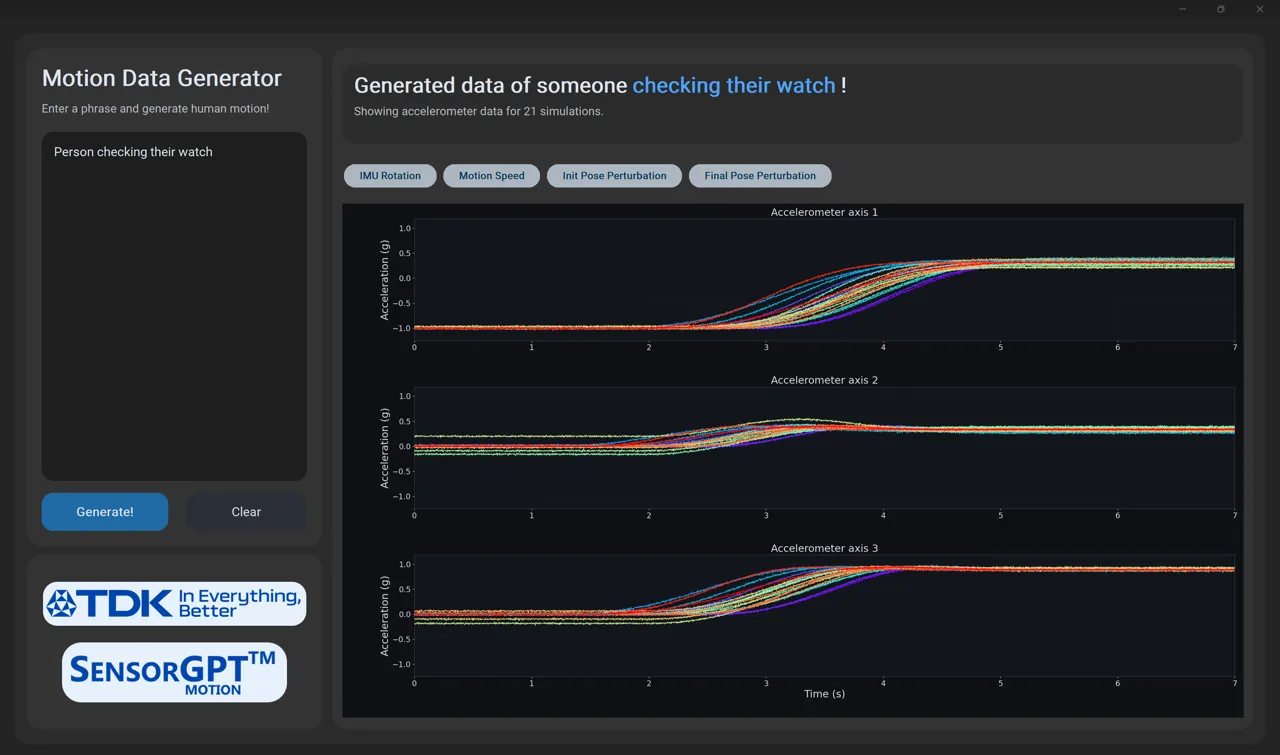

On the technical side, Silicom's inference solution combines adaptable, advanced FPGA architectures with high-performance commercial networking chips, with the aim of helping AI hardware developers break through the "latency wall" and avoid the risk of the "hardware lottery." More broadly, Silicom's solutions are designed for cloud, data center, and edge environments to increase throughput and reduce latency, covering use cases such as AI inference, SD-WAN, cybersecurity, fiber switching, and network function virtualization. The company currently has more than 400 active design wins worldwide.

Demand for dedicated AI inference hardware is rising rapidly. Silicom's initial order comes as global AI compute spending shifts its focus from training to inference, while hyperscale cloud providers keep capital expenditure at high levels. Because inference workloads place stricter demands on per-token cost, power consumption, and real-time performance, dedicated inference acceleration is becoming a key competitive differentiator. Silicom had previously received orders for different inference products from two leading global AI computing companies and recently secured an order to develop and deliver a third dedicated inference product, suggesting that the industry is increasingly recognizing its position in this market.

On the financial side, Silicom's growth accelerated in the first quarter of 2026, with revenue of $19.1 million, up 33% year over year. The company guided second-quarter revenue to $20 million to $21 million, a year-over-year increase of up to 40%, and expects full-year 2026 revenue of $82 million to $83 million, up approximately 33% year over year. On the earnings call, Eizenman said the company's core business has reached a clear inflection point, with growth spanning all product lines and regional markets.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com