en.Wedoany.com Reported - Japan's TDK Corporation officially launched its SensorGPT data synthesis technology on May 5, 2026, at the Sensors Converge 2026 exhibition in Santa Clara, California, USA. The technology combines generative AI, signal processing, statistical methods, and physical simulation to create and manage sensor data at scale. TDK aims to cut the share of edge AI development effort spent on data work from about 80% to approximately 10%, and to shorten the model-building cycle from months to weeks.

The core breakthrough of SensorGPT is that it raises the similarity between synthetic and real sensor data to 90%. At this level of fidelity, synthetic datasets can already replace most real-world data collection and be used directly to train and validate edge AI models. Jim Tran, Deputy General Manager of Technology and IP Headquarters at TDK U.S.A. Corporation, noted in the official press release that data is the foundation of intelligent edge systems, yet collecting it currently takes far more time than building the intelligence itself: nearly 80% of AI solution development time goes to data collection and preparation. By using generative AI modeling, simulation, and related methods, engineers can generate additional high-quality data that reflects real-world conditions, turning data into a scalable resource. Once a model is deployed in the field, real-world data is continuously fed back into the synthetic model, forming a virtuous "deployment, feedback, optimization" cycle that progressively strengthens the synthetic engine.
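The feedback cycle described above can be illustrated with a deliberately simple stand-in: a generator that models one sensor channel as a Gaussian and refits its parameters each time field data arrives. This is a minimal sketch under stated assumptions, not how SensorGPT's engine works internally; the class name `FeedbackSynthesizer`, the Gaussian model, and the simulated "field" readings are all hypothetical.

```python
import random
import statistics


class FeedbackSynthesizer:
    """Hypothetical one-channel synthetic-data generator (illustrative only).

    Models sensor readings as Gaussian and refines the mean and variance
    with Welford's online algorithm whenever real field samples arrive.
    """

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, real_samples) -> None:
        """Feedback step: fold newly collected field data into the model."""
        for x in real_samples:
            self.n += 1
            delta = x - self.mean
            self.mean += delta / self.n
            self._m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        """Sample standard deviation; 0.0 until two samples have been seen."""
        return (self._m2 / (self.n - 1)) ** 0.5 if self.n > 1 else 0.0

    def generate(self, k: int, rng: random.Random) -> list:
        """Draw k synthetic readings from the current Gaussian model."""
        return [rng.gauss(self.mean, self.std) for _ in range(k)]


if __name__ == "__main__":
    rng = random.Random(0)

    def field_data(k):
        # Hypothetical stand-in for real accelerometer readings (m/s^2).
        return [rng.gauss(9.81, 0.05) for _ in range(k)]

    synth = FeedbackSynthesizer()
    synth.update(field_data(20))  # start from a small real dataset
    for round_no in range(3):     # deploy -> collect feedback -> refit
        synth.update(field_data(200))
        batch = synth.generate(1000, rng)
        print(round_no, synth.n, round(synth.mean, 3), round(statistics.stdev(batch), 3))
```

Each round folds more field samples into the model, so the generated data's mean and spread track the real sensor's statistics more closely. A production engine would refit a far richer model; the deploy, collect, refit loop is the part this sketch is meant to show.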

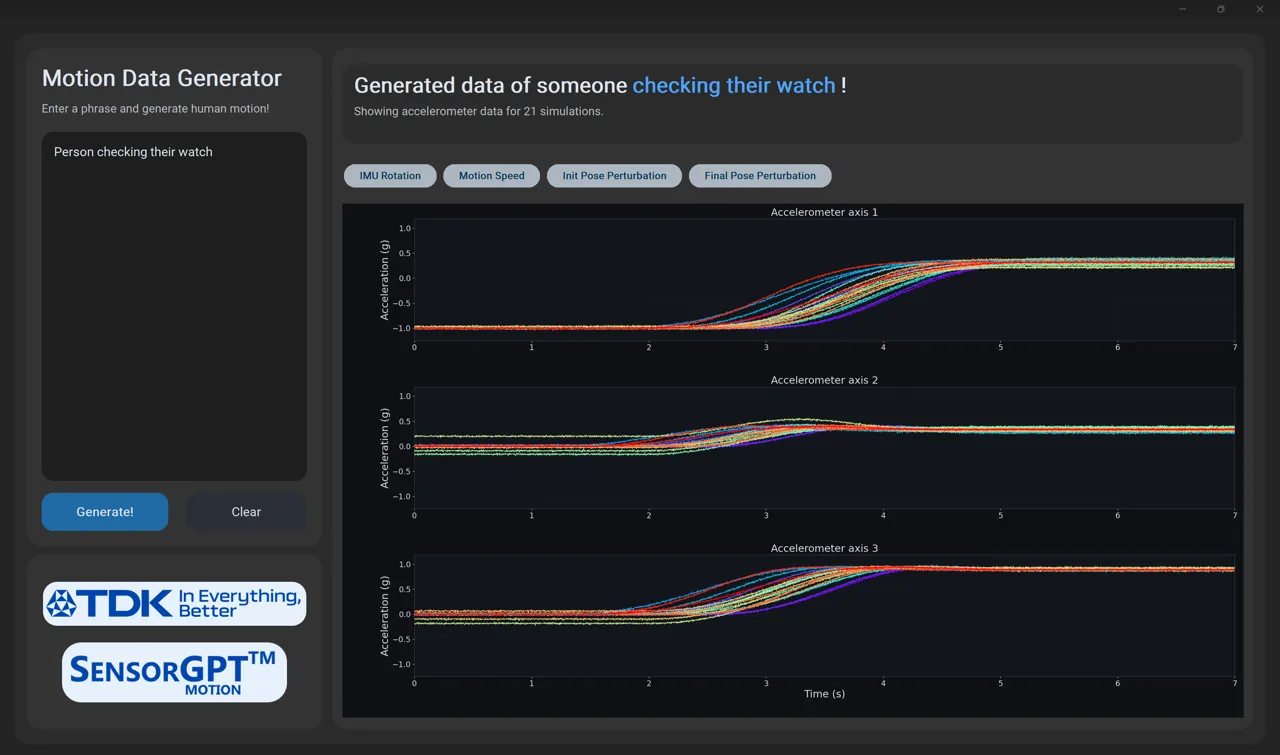

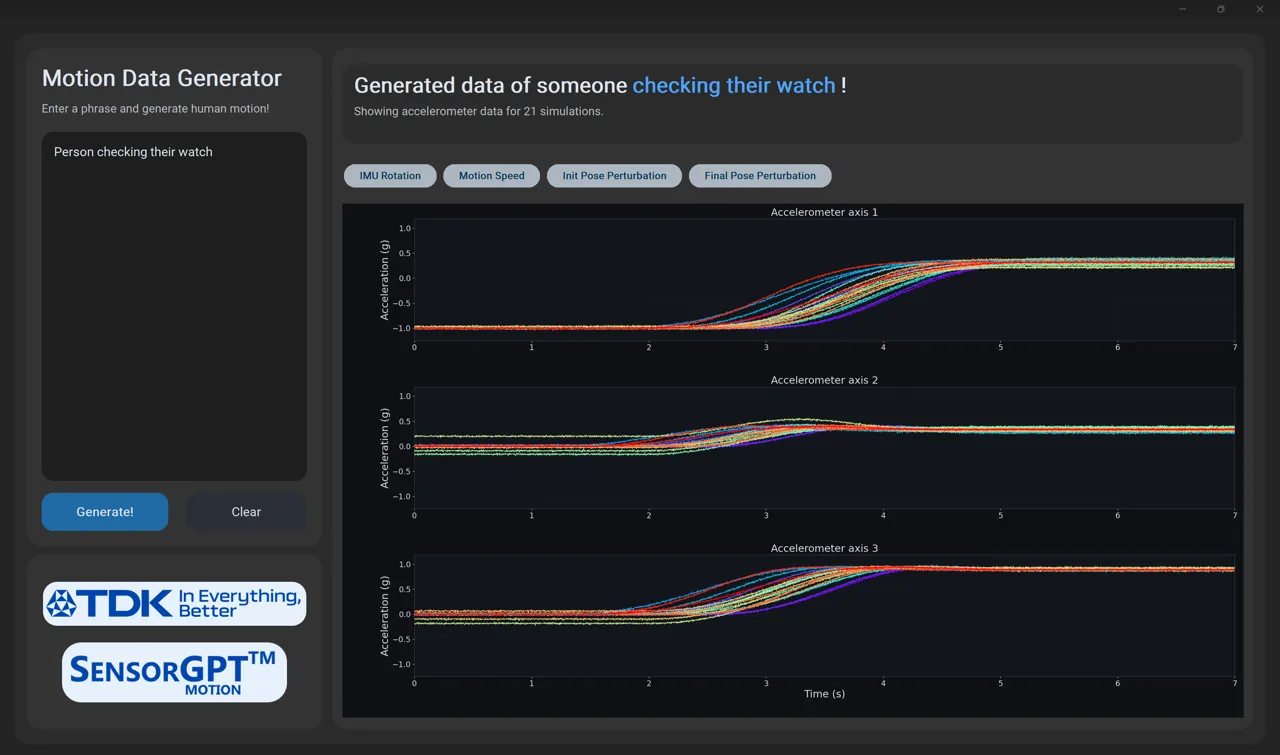

Technically, SensorGPT draws on five technology modules working in parallel. A generative AI model, trained on limited real data, learns the underlying patterns and generates high-fidelity synthetic data. A physical simulation model reproduces sensor behavior from mathematical and physical principles. Signal processing methods use mathematical and computational techniques to replicate the dynamic characteristics of real sensor outputs. Data augmentation automatically transforms existing data to cover more scenarios and operating conditions. Finally, assisted annotation streamlines the labeling of training data, improving the usability and quality of the training set.
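To make the division of labor concrete, here is a minimal Python sketch of how four of the five modules might be chained for a single accelerometer axis. It is not TDK's implementation: the walking model, noise and bias levels, quantization step, augmentation ranges, labeling threshold, and every function name (`physical_model`, `sensor_model`, `augment`, `auto_label`) are hypothetical, and the generative AI module is omitted entirely.

```python
import math
import random


def physical_model(duration_s, fs, step_hz=2.0, amp=1.5):
    """Physics stand-in: vertical acceleration (m/s^2) of a walking wearer,
    modeled as gravity plus a sinusoid at the step frequency."""
    n = int(duration_s * fs)
    return [9.81 + amp * math.sin(2 * math.pi * step_hz * i / fs) for i in range(n)]


def sensor_model(signal, rng, noise_std=0.02, bias=0.05, lsb=0.0024):
    """Signal-processing stand-in: add a fixed bias and white noise, then
    quantize to an ADC step of `lsb` (all values are illustrative)."""
    return [round((x + bias + rng.gauss(0, noise_std)) / lsb) * lsb for x in signal]


def augment(signal, rng, scale_range=(0.9, 1.1), max_shift=10):
    """Data augmentation: scale the dynamic part around the mean (gravity
    offset preserved) and apply a random circular time shift."""
    scale = rng.uniform(*scale_range)
    shift = rng.randint(0, max_shift)
    mean = sum(signal) / len(signal)
    scaled = [mean + (x - mean) * scale for x in signal]
    return scaled[shift:] + scaled[:shift]


def auto_label(signal, fs, window_s=1.0, threshold=0.5):
    """Assisted annotation: tag each non-overlapping window 'moving' if its
    standard deviation exceeds `threshold`, else 'still'. A human reviewer
    would confirm or correct these proposed labels."""
    w = int(window_s * fs)
    labels = []
    for start in range(0, len(signal) - w + 1, w):
        win = signal[start:start + w]
        mean = sum(win) / w
        std = math.sqrt(sum((x - mean) ** 2 for x in win) / w)
        labels.append("moving" if std > threshold else "still")
    return labels


if __name__ == "__main__":
    rng = random.Random(42)
    fs = 100  # Hz, hypothetical sample rate
    dataset = []
    for _ in range(5):
        walk = sensor_model(physical_model(3.0, fs), rng)
        still = sensor_model(physical_model(3.0, fs, amp=0.0), rng)
        for clip in (walk, still):
            aug = augment(clip, rng)
            dataset.append((aug, auto_label(aug, fs)))
    print(len(dataset), dataset[0][1], dataset[1][1])
```

Keeping each stage as a separate function mirrors the modular description: any one stage could be swapped for a more realistic model without touching the others.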

On the demand side, the need for this technology is growing quickly. Gartner forecasts that by 2026 at least 50% of edge computing deployments will involve machine learning, up from only 5% in 2022. Meanwhile, approximately 80% of enterprise AI development time is spent on data preparation rather than model building. SensorGPT tackles this industry-wide bottleneck at its source, the data supply, providing a scalable data-layer foundation for large-scale edge AI deployment and allowing edge AI projects previously shelved for lack of sufficient high-quality data to move into implementation.
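As a rough illustration of what TDK's stated aim of cutting data work from about 80% to about 10% could mean for total project time, assume (this is an assumption, not part of the announcement) that model-building effort $M$ stays fixed at 20% of the original effort $T$, and that only data work shrinks until it makes up 10% of the new total $T'$:

$$
\begin{aligned}
M &= 0.2\,T, \qquad D' = 0.1\,T', \qquad T' = M + D' \\
T' &= \frac{M}{0.9} = \frac{0.2\,T}{0.9} \approx 0.22\,T
\end{aligned}
$$

Under these assumptions, a project would take about 22% of its original time, roughly a 4.5-fold reduction. If the 10% were instead read as a fraction of the original effort, the total would be $0.2\,T + 0.1\,T = 0.3\,T$, about 3.3 times faster.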

SensorGPT and the SensorStage evaluation platform, released by TDK at the same time, jointly target two major markets: smart IoT and the emerging ambient IoT. SensorStage provides an integrated development environment for MEMS sensors such as SmartMotion inertial measurement units (IMUs), while SensorGPT addresses the problem of scaling data supply. Together they form an end-to-end toolchain running from sensor evaluation to AI model training. Current target applications include IoT, wearable devices, mobile devices, physical AI, and industrial IoT.

This article is compiled by Wedoany.