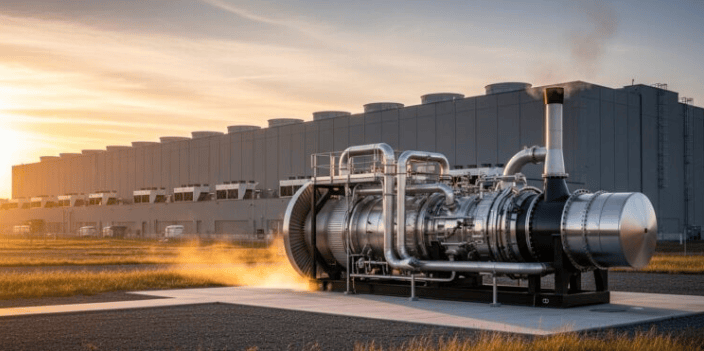

en.Wedoany.com reports: NVIDIA has launched Fleet Intelligence, a managed monitoring service for large-scale GPU clusters in AI infrastructure. It provides real-time operational visibility, health monitoring, and integrity verification. It is available free of charge to customers running NVIDIA data center GPUs based on the Hopper, Blackwell, and Vera Rubin architectures. The service works across heterogeneous infrastructure, regardless of the orchestration stack or scheduler in use.

The platform uses a lightweight host agent to stream GPU telemetry to a cloud service hosted on NVIDIA NGC. The agent builds on GPUd, NVIDIA Data Center GPU Manager (DCGM), and the NVIDIA Attestation SDK. NVIDIA has also open-sourced the Fleet Intelligence agent on GitHub so that operators can audit the telemetry pipeline and the data it collects. Fleet Intelligence aggregates metrics including GPU utilization, memory bandwidth, power consumption, NVLink status, thermal conditions, ECC errors, and hardware reliability indicators. These help operators spot underutilized resources, detect faults early, and reduce downtime in large AI clusters.
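To make the "identify underutilized resources" idea concrete, here is a minimal sketch of how aggregated utilization samples could be used to flag idle GPUs. It is purely illustrative. The `GpuSample` record, its field names, and the 20% threshold are assumptions and do not reflect Fleet Intelligence's actual data schema or detection logic.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class GpuSample:
    # Hypothetical telemetry record; field names are illustrative only,
    # not the Fleet Intelligence or DCGM schema.
    gpu_id: str
    utilization_pct: float


def find_underutilized(samples, threshold_pct=20.0):
    """Return sorted GPU IDs whose mean utilization is below threshold_pct."""
    by_gpu = {}
    for s in samples:
        # Group utilization readings per GPU before averaging.
        by_gpu.setdefault(s.gpu_id, []).append(s.utilization_pct)
    return sorted(g for g, vals in by_gpu.items() if mean(vals) < threshold_pct)


samples = [
    GpuSample("node1-gpu0", 95.0),
    GpuSample("node1-gpu0", 90.0),
    GpuSample("node1-gpu1", 5.0),
    GpuSample("node1-gpu1", 10.0),
]
print(find_underutilized(samples))  # ['node1-gpu1']
```

A production system would typically window samples over time and combine several signals (power, memory bandwidth, job scheduling state) rather than rely on a single utilization average.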

A central feature of the launch is integrity and attestation, derived from NVIDIA Confidential Computing technology. Fleet Intelligence uses NVIDIA Root of Trust certificates and the NVIDIA Remote Attestation Service to cryptographically verify GPU firmware and runtime integrity. It can also confirm that a GPU is running approved firmware and an untampered configuration by checking it against the reference integrity manifest (RIM) for its vBIOS version. NVIDIA said the service draws on its operational experience with DGX Cloud deployments spanning hundreds of thousands of GPUs. Early access customers include Lambda and IREN, both of which provided operational feedback during development.
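The manifest check described above boils down to comparing measured values against a set of approved reference values. The sketch below shows that comparison step only, under loudly stated assumptions. The dictionary layout (measurement index mapped to allowed digests), the `verify_against_rim` helper, and the use of SHA-384 are hypothetical choices for illustration. It omits what real attestation also requires: verifying the device's signature over the measurements and validating the certificate chain up to the NVIDIA Root of Trust.

```python
import hashlib


def digest(data: bytes) -> str:
    # SHA-384 chosen for illustration; not a statement about NVIDIA's formats.
    return hashlib.sha384(data).hexdigest()


def verify_against_rim(measurements: dict[int, str], rim: dict[int, set[str]]) -> list[int]:
    """Return measurement indices that are missing or not among the RIM's allowed digests.

    Hypothetical helper: `rim` maps each measurement index to the set of
    approved digests; `measurements` maps each index to what the device reported.
    An empty result means every reference value matched.
    """
    mismatches = []
    for index, allowed in sorted(rim.items()):
        measured = measurements.get(index)
        if measured is None or measured not in allowed:
            mismatches.append(index)
    return mismatches


# Illustrative reference manifest: index 0 = firmware image, index 1 = configuration.
rim = {0: {digest(b"vbios-image-v1")}, 1: {digest(b"config-a"), digest(b"config-b")}}

good = {0: digest(b"vbios-image-v1"), 1: digest(b"config-b")}
tampered = {0: digest(b"vbios-image-patched"), 1: digest(b"config-b")}

print(verify_against_rim(good, rim))      # []
print(verify_against_rim(tampered, rim))  # [0]
```

Keeping a set of allowed digests per index lets one manifest accept several approved configurations while still rejecting any unlisted firmware.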

Fleet Intelligence supports Hopper, Blackwell, and Vera Rubin GPUs, although GPU attestation is currently available only on Blackwell and Vera Rubin. Telemetry covers GPU, CPU, NVLink, PCIe, network, power, and thermal metrics. Alerts can be delivered by email, Slack, or custom integrations, and health checks are built on GPUd and DCGM. The agent runs in read-only mode and does not modify host configurations. The service also offers historical reporting, inventory dashboards, and anomaly visualization. It is free to NVIDIA data center GPU operators and cloud tenants.

Chuan Li, Chief Science Officer at Lambda, said: "NVIDIA Fleet Intelligence gives Lambda's research team end-to-end visibility across our NVIDIA Blackwell/Hopper GPU clusters with minimal setup. Its alerts capture both active failures and early warning signals. Its reports translate the health of the entire cluster into actionable insights." Fleet Intelligence serves as a deployment-agnostic telemetry and monitoring layer suited to a wide range of infrastructure environments.

Analysts see the launch as part of NVIDIA's expansion beyond GPU chips into operational software and infrastructure management tools for AI factories. Fleet Intelligence complements NVIDIA's existing AI infrastructure stack, which already includes DGX systems, NVLink fabric, Spectrum-X networking, Mission Control orchestration, and Confidential Computing. As AI clusters scale to tens of thousands of accelerators, hyperscale clouds and enterprises are pushing for higher GPU utilization. The launch also reflects intensifying competition in AI infrastructure observability and GPU operations, where AMD, Intel, and several startups are building their own telemetry, reliability, and orchestration frameworks. By integrating hardware telemetry, firmware attestation, and operational analytics directly into its platform stack, NVIDIA strengthens its position as a vertically integrated AI infrastructure provider.

This article is compiled by Wedoany.