US tech giant Google recently launched a new AI model, Gemini 3.1 Flash-Lite. This model has undergone significant optimization in terms of cost and speed, primarily targeting enterprises and developers, aiming to provide scalable intelligent solutions.

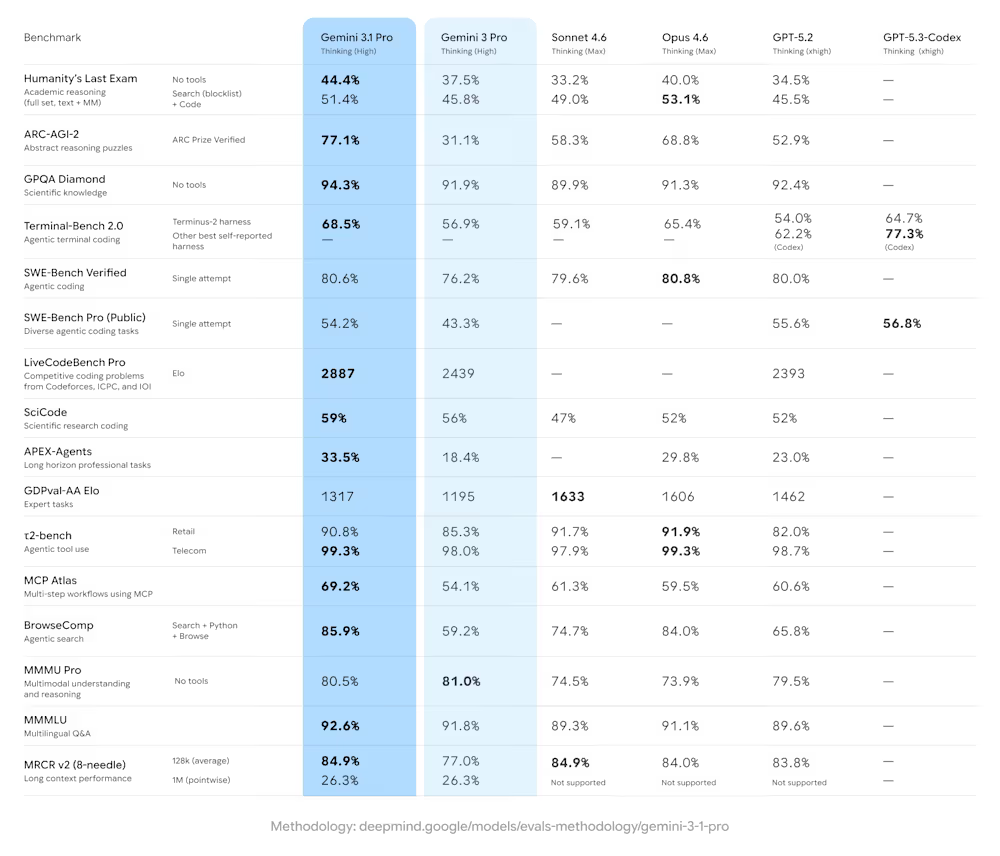

Gemini 3.1 Flash-Lite is positioned as the model with the highest cost-effectiveness and response speed within the Gemini 3 series. Its release comes just weeks after the debut of the high-performance model Gemini 3.1 Pro in February, completing Google's tiered strategy to help enterprises scale intelligent capabilities across infrastructure layers.

In high-throughput AI applications, latency is a key metric affecting user experience. Flash-Lite is designed for instant response. According to internal benchmarks and third-party evaluations, its time to first token is 2.5 times faster than the previous generation Gemini 2.5 Flash, with overall output speed increasing by 45%, reaching 363 tokens per second.

The model introduces a thinking-level capability, allowing developers to dynamically adjust reasoning intensity. For simple tasks, it can be lowered to prioritize speed and cost; for complex applications, such as code exploration or simulation creation, it can be increased for deep reasoning.

Despite the "Lite" in its name, performance data shows its capabilities are comparable to larger systems. On the Arena.ai leaderboard, Flash-Lite achieved an Elo score of 1432, competing with models having more parameters. Key benchmark results show it achieved 86.9% in scientific knowledge, 76.8% in multimodal understanding, and 88.9% in multilingual question answering.

Structured output compliance is a strength of Flash-Lite, scoring 72.0% on the LiveCodeBench benchmark, outperforming some competitors, while also supporting complex chart synthesis and video knowledge extraction.

Compared to Gemini 3.1 Pro, Flash-Lite focuses more on high-volume execution, handling daily tasks like translation and moderation, while the Pro model excels at deep reasoning and complex coding. Through a cascading architecture, Google allows enterprises to use Pro for initial planning and then hand off repetitive tasks to Flash-Lite at low cost.

In terms of cost, Gemini 3.1 Flash-Lite is priced at $0.25 per 1 million input tokens and $1.50 per 1 million output tokens, cheaper than competitors like Claude 4.5 Haiku. Compared to Gemini 3.1 Pro, in high-context usage, Flash-Lite is 12 to 16 times cheaper.

Early testers provided positive feedback. Andrew Carr, Chief Scientist at Cartwheel, noted: "3.1 Flash-Lite is a very capable model. It's incredibly fast, yet still somehow follows all instructions... its intelligence-to-speed ratio is unmatched by any other model." Kolby Nottingham, Head of AI at Latitude, shared that the model increased success rates by 20% and reduced reasoning time by 60%.

Gemini 3.1 Flash-Lite and Pro are available through Google AI Studio and Vertex AI, following a commercial software-as-a-service model. Currently, Flash-Lite is in preview, allowing Google to refine performance based on feedback. For developers, transitioning to the new model represents a performance upgrade at the same or lower price point.

This release by Google marks a new phase in the AI race. By combining the deep reasoning of the Pro model with the efficient execution of Flash-Lite, it provides enterprises with reliable, instant AI solutions, lowering the barriers to scaling intelligence.