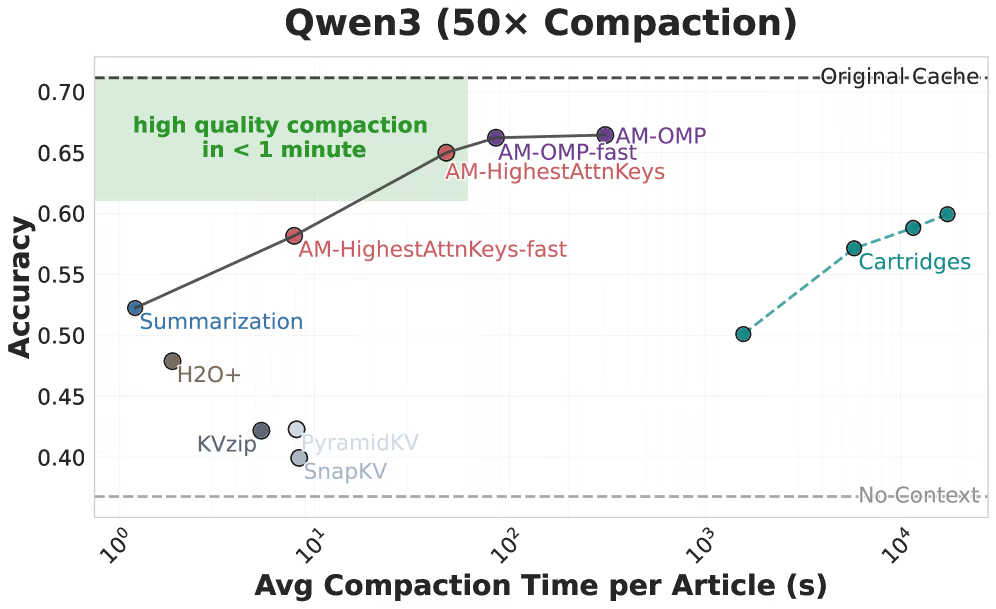

Wedonay.com Report on Mar 7th, Researchers at the Massachusetts Institute of Technology (MIT) have developed a new technique called "attention matching," which can reduce the memory requirements of large language models by up to 50 times by compressing the KV cache while maintaining accuracy. This provides an efficient solution for enterprise AI applications that handle large documents and long-term tasks.

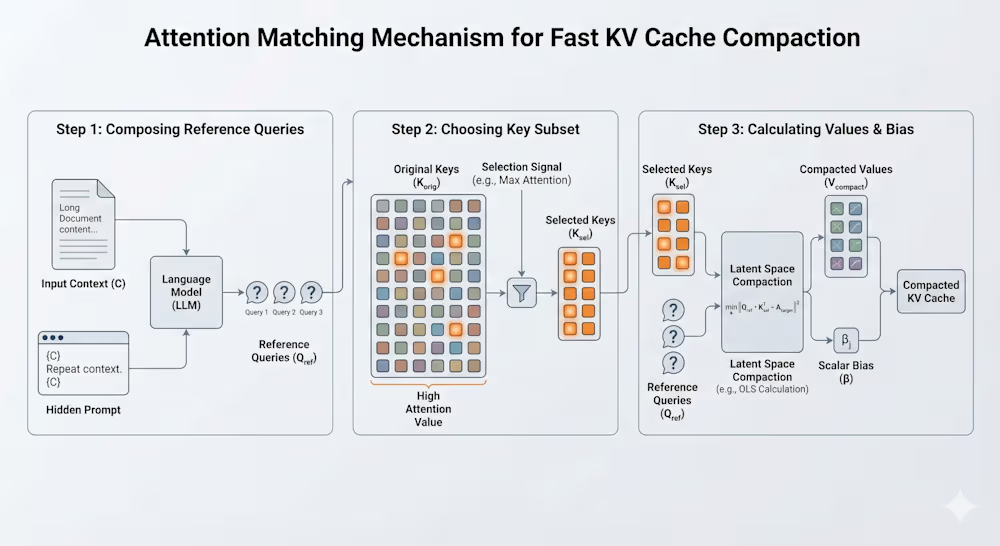

When processing long contexts, the KV cache of large language models expands with the conversation length, consuming significant hardware resources and becoming a memory bottleneck. The attention matching technique preserves two mathematical properties, "attention output" and "attention quality," and uses reference queries and algebraic methods for rapid compression. This avoids gradient-based optimization, achieving a high compression ratio and quality.

In tests, attention matching performed excellently on the QuALITY and LongHealth datasets, maintaining accuracy even after 50x compression, and processing documents took only a few seconds. Co-author Adam Zweiger said, "In some sense, attention matching is the 'right' goal for performing latent context compression because it directly targets preserving the behavior of each attention head after compression."

The code for the attention matching technique has been released, but it requires access to model weights, and integrating it into existing systems requires engineering effort. Zweiger noted, "We think compression after ingestion is a promising use case where large tool call outputs or long documents are compressed immediately after processing." This technology is expected to advance the development of AI models in memory optimization.