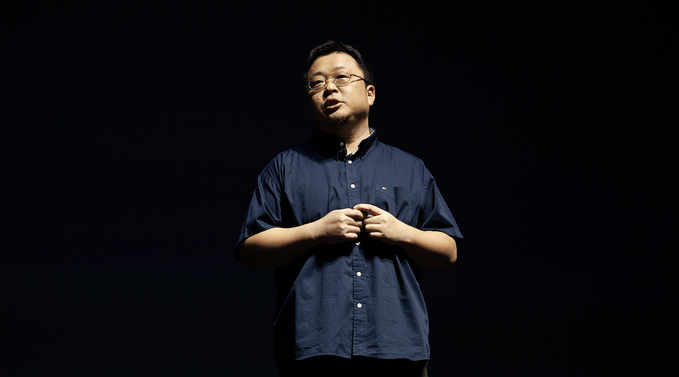

en.Wedoany.com Reported - At a media briefing on April 15, 2026, Li Wentao, Director of Cloud Computing Architecture for Akamai's Asia-Pacific region, said the company has deployed NVIDIA RTX 6000 Pro Blackwell series GPUs at scale across its global network, which currently spans more than 130 countries and over 4,400 edge nodes. Within the next year, the number of GPU-capable nodes will be expanded to hundreds, with the goal of delivering AI inference responses at latencies of 10 to 20 milliseconds. Li also said that semantic caching can cut the cost of repeated inference by 50% to 80%, and that outbound (egress) traffic carrying token output is priced at $0.005 per GB.
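To illustrate the idea behind semantic caching, the following is a minimal sketch, not Akamai's implementation. It assumes a toy bag-of-words `embed` function standing in for a real embedding model, and the `SemanticCache` class, its `threshold` parameter, and the `run_inference` callback are hypothetical names introduced only for this example. A prompt that is close enough in meaning to an earlier one is answered from the cache, so the model is not invoked again.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model (assumption): bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """Hypothetical cache that reuses responses for semantically similar prompts."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold  # minimum similarity to count as a hit
        self.entries = []           # list of (embedding, response) pairs
        self.hits = 0
        self.misses = 0

    def lookup(self, prompt):
        """Return the best cached response at or above the threshold, else None."""
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def get_or_compute(self, prompt, run_inference):
        """Serve from cache when possible; otherwise run inference and store it."""
        cached = self.lookup(prompt)
        if cached is not None:
            self.hits += 1
            return cached
        self.misses += 1
        response = run_inference(prompt)
        self.entries.append((embed(prompt), response))
        return response
```

In this sketch the saving comes from the hit rate: every hit skips one GPU inference call. A production system would presumably use a learned embedding model and a vector index rather than a linear scan, and the actual savings depend on how repetitive the workload is.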

This GPU deployment builds on Akamai's extensive global edge network. A leading cybersecurity and cloud computing company that grew out of the Massachusetts Institute of Technology, Akamai invented the CDN architecture in 1998 and is headquartered in Cambridge, Massachusetts, USA. As of December 31, 2025, its globally distributed infrastructure comprised core and distributed computing sites plus more than 4,300 edge points of presence, covering more than 130 countries and approximately 700 cities. The underlying network interconnects with around 1,200 network partners. The company's total revenue for 2025 reached $4.208 billion, with the security business accounting for 53.3% of that total and cloud infrastructure services growing 45% year over year.

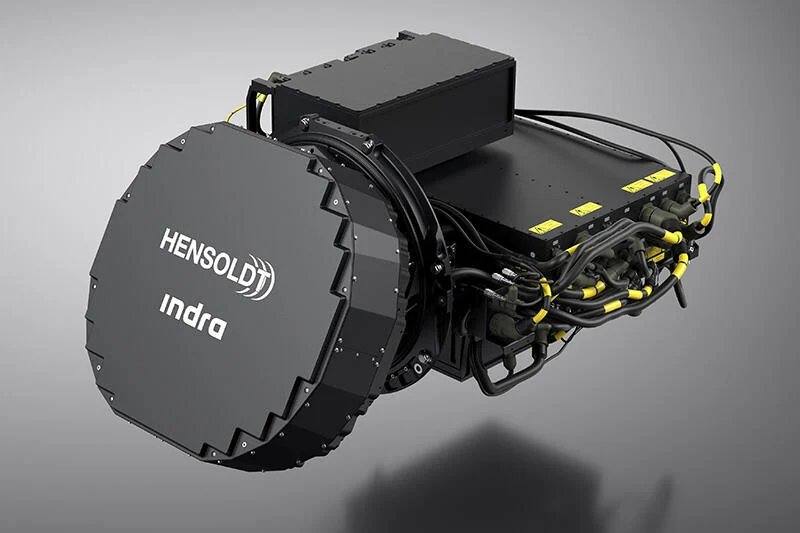

On the hardware side, the NVIDIA RTX 6000 Pro Blackwell server GPUs deployed by Akamai carry 96GB of GDDR7 memory. Built on the Blackwell architecture, they integrate fifth-generation Tensor Cores and fourth-generation RT Cores. Akamai's official benchmarks show that this GPU, running on Akamai Cloud, achieves inference throughput 1.63 times higher than the H100, reaching 24,240 tokens per second (TPS) per server under 100 concurrent requests. In FP4 precision mode, the RTX 6000 Pro Blackwell delivers high throughput at significantly lower power consumption and cost than data center-grade GPUs, which makes it suitable for deployment at scale across hundreds of sites.
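As a rough back-of-the-envelope reading of these benchmark figures, the sketch below derives two numbers the article does not state. It assumes that the 24,240 TPS is shared evenly across the 100 concurrent requests, and that "1.63 times higher" means a 1.63x ratio measured under the same conditions. The 500-token response length is a hypothetical value chosen only for illustration.

```python
# Figures quoted in the article.
SERVER_TPS = 24_240          # tokens per second per server
CONCURRENT_REQUESTS = 100    # concurrency used in the benchmark
SPEEDUP_VS_H100 = 1.63       # reported throughput ratio versus the H100

# Assumption: throughput is divided evenly among concurrent requests.
per_request_tps = SERVER_TPS / CONCURRENT_REQUESTS        # 242.4 tokens/s

# Assumption: the ratio applies to this same 24,240 TPS measurement.
implied_h100_tps = SERVER_TPS / SPEEDUP_VS_H100           # roughly 14,871 tokens/s

# Hypothetical 500-token response, generation time only (no network latency).
RESPONSE_TOKENS = 500
generation_seconds = RESPONSE_TOKENS / per_request_tps    # roughly 2.06 s

print(f"per-request throughput: {per_request_tps:.1f} tokens/s")
print(f"implied H100 baseline:  {implied_h100_tps:.0f} tokens/s")
print(f"500-token generation:   {generation_seconds:.2f} s")
```

These derived values are estimates under the stated assumptions, not figures published by Akamai or NVIDIA.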

The GPU node expansion is the next step in Akamai's "Inference Cloud" strategy. Akamai Inference Cloud combines NVIDIA RTX PRO servers with Akamai's distributed cloud infrastructure and global edge network, placing AI inference capacity closer to where user data resides. The platform can run concurrent multimodal workloads such as text, vision, and speech on a single GPU, reducing reliance on dedicated accelerators. According to NVIDIA, RTX Pro servers deliver several-fold improvements over the previous generation in LLM inference throughput, synthetic data generation, genomic sequence alignment, engineering simulation, and rendering performance.

On the security side, Akamai has launched Firewall for AI, a dedicated firewall that inspects inputs and outputs to guard against threats specific to generative AI, such as prompt injection, confidential data leakage, and AI hallucinations. The company has also partnered with NVIDIA on an agentless zero-trust segmentation solution based on BlueField DPUs, which provides hardware-level isolation for operational technology systems in industries such as power, energy, and transportation, where installing software agents is not feasible. Akamai predicts that future networks will evolve into "agentic networks," in which AI agents autonomously carry out complex decisions and transactions, and it expects its platform, which it describes as the world's most distributed, to become the foundation for hosting these applications.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com