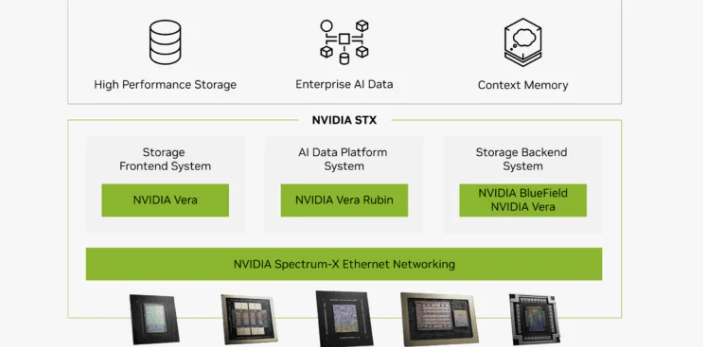

en.Wedoany.com reported on March 23 that NVIDIA has launched BlueField-4 STX, a new data pipeline architecture, in the US. Centered on the BlueField-4 DPU (data processing unit) platform, the architecture aims to address inefficient data movement between storage, memory, and GPUs in large-scale AI systems.

The BlueField-4 STX architecture tightly integrates storage, networking, and memory operations with GPU computing to accelerate data access for inference and agentic AI workloads. Instead of routing data through traditional host-based paths, it uses the DPU to offload and orchestrate data movement directly, delivering model weights, context, and key-value (KV) cache data to GPUs faster.

As AI infrastructure expands from training to large-scale inference, workloads are increasingly dominated by memory access patterns, context scaling, and token generation, rather than raw computation. In these scenarios, GPUs are often underutilized due to data delivery delays, especially when processing large language models that require rapid access to distributed datasets and memory pools.
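To see why context scaling puts pressure on memory and data movement, consider the size of a transformer's key-value cache. The sketch below applies the standard estimate (one key and one value vector per token, per KV head, per layer) to a hypothetical model with 32 layers, 8 KV heads, a head dimension of 128, and 16-bit values. These dimensions are illustrative assumptions, not figures for any specific model or NVIDIA product.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch: int = 1, bytes_per_elem: int = 2) -> int:
    """Approximate key-value cache size for a decoder-only transformer.

    The factor of 2 accounts for storing one key and one value vector per
    token, per KV head, per layer. bytes_per_elem=2 assumes FP16/BF16 values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch * bytes_per_elem


GIB = 1024 ** 3

# Hypothetical model shape, used only for illustration.
for seq_len in (4_096, 32_768, 131_072):
    size = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=seq_len)
    print(f"{seq_len:>7,} tokens -> {size / GIB:.1f} GiB per sequence")
# prints 0.5, 4.0 and 16.0 GiB respectively
```

Because the cache grows linearly with context length and batch size, long-context and multi-turn agent workloads can quickly outgrow GPU memory, so KV data must be moved between memory and storage tiers. This is the kind of traffic BlueField-4 STX is designed to deliver to GPUs faster.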

According to NVIDIA, BlueField-4 STX reduces latency, increases throughput, and boosts GPU utilization within AI clusters by combining high-speed networking, direct NVMe access, and memory-aware data orchestration in the DPU. The platform also supports data services such as prefetching, caching, and direct data placement, which are crucial for multi-step inference and agentic workflows.
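The benefit of prefetching can be illustrated with a simple timing model. The sketch below is a toy simulation, not NVIDIA's implementation or API: it assumes one data-movement engine and one GPU, per-step fetch and compute times in milliseconds, and a fixed number of staging buffers. The function names are hypothetical. With a single buffer every step waits for its own data; with two buffers, the next step's data is fetched while the current step computes.

```python
from typing import List, Sequence


def serial_makespan(fetch_ms: Sequence[float], compute_ms: Sequence[float]) -> float:
    """Total time when each step fetches its data, then computes, with no overlap."""
    return sum(fetch_ms) + sum(compute_ms)


def prefetch_makespan(fetch_ms: Sequence[float], compute_ms: Sequence[float],
                      buffers: int = 2) -> float:
    """Total time when data for later steps is prefetched into `buffers` slots.

    Toy model (assumption): one fetch engine serves requests in order, and the
    buffer used by step i is reused by step i + buffers once step i finishes computing.
    """
    if len(fetch_ms) != len(compute_ms):
        raise ValueError("fetch_ms and compute_ms must have the same length")
    if buffers < 1:
        raise ValueError("need at least one buffer")
    fetch_end: List[float] = []
    compute_end: List[float] = []
    for i, (f, c) in enumerate(zip(fetch_ms, compute_ms)):
        # A fetch starts once the previous fetch is done and a buffer is free.
        prev_fetch_done = fetch_end[i - 1] if i >= 1 else 0.0
        buffer_free = compute_end[i - buffers] if i >= buffers else 0.0
        fetch_end.append(max(prev_fetch_done, buffer_free) + f)
        # Compute starts once its data has arrived and the GPU is idle.
        gpu_free = compute_end[i - 1] if i >= 1 else 0.0
        compute_end.append(max(fetch_end[i], gpu_free) + c)
    return compute_end[-1] if compute_end else 0.0


def gpu_utilization(compute_ms: Sequence[float], makespan: float) -> float:
    """Fraction of wall-clock time the GPU spends computing."""
    return sum(compute_ms) / makespan if makespan > 0 else 0.0


if __name__ == "__main__":
    fetch, compute = [6.0] * 3, [4.0] * 3
    serial = serial_makespan(fetch, compute)        # 30.0 ms
    overlapped = prefetch_makespan(fetch, compute)  # 22.0 ms
    print(serial, gpu_utilization(compute, serial))                 # 30.0 0.4
    print(overlapped, round(gpu_utilization(compute, overlapped), 3))  # 22.0 0.545
```

In this toy model, prefetching hides fetch time behind compute, but when fetches are slower than compute (6 ms versus 4 ms above) the GPU still waits. That is why the bandwidth and latency of the data path matter as much as overlap.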

This announcement aligns with NVIDIA's efforts to define the "AI factory" as a fully integrated system where compute, networking, storage, and software are co-designed for maximum performance. In this model, the DPU plays a central role as the control point for data movement, security, and infrastructure offloading.

In summary, NVIDIA has launched the BlueField-4 STX architecture to optimize AI data pipelines and accelerate inference and agentic AI workloads. It uses the DPU to take over the offloading and orchestration of data movement from the CPU, integrating high-speed networking, NVMe storage access, and memory-aware operations. By reducing data delivery bottlenecks, it improves GPU utilization and supports data services such as caching, prefetching, and direct data placement.

This announcement highlights a shift in priorities for AI infrastructure design. The industry previously focused on GPU performance and interconnect bandwidth, but as models grow larger and inference workloads become more complex, the speed of data delivery has become a limiting factor. In many deployments, GPUs sit idle while waiting for data, an inefficiency that is more pronounced in workflows that frequently access distributed data sources.

By moving data orchestration to the DPU layer, NVIDIA is repositioning the data pipeline as a critical component of AI infrastructure. As a result, AI infrastructure vendors must address not only computational performance but also how efficiently data moves within the system. The launch of BlueField-4 STX also reinforces the DPU's role as a strategic control point: it is evolving into an infrastructure processor that handles storage access, security enforcement, and workload orchestration, and is becoming a core layer of next-generation AI data centers.

Looking ahead, competition may extend beyond compute to data pipeline architectures, memory hierarchies, and storage integration. NVIDIA's move suggests that the next phase of AI infrastructure innovation will be defined by how efficiently systems deliver data to compute engines.