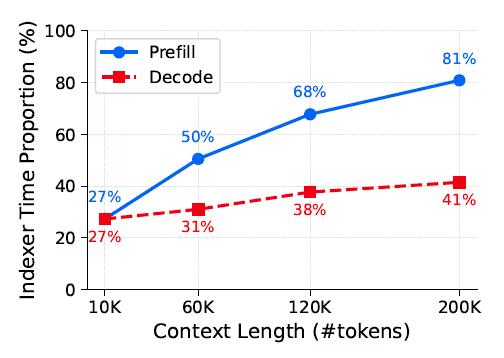

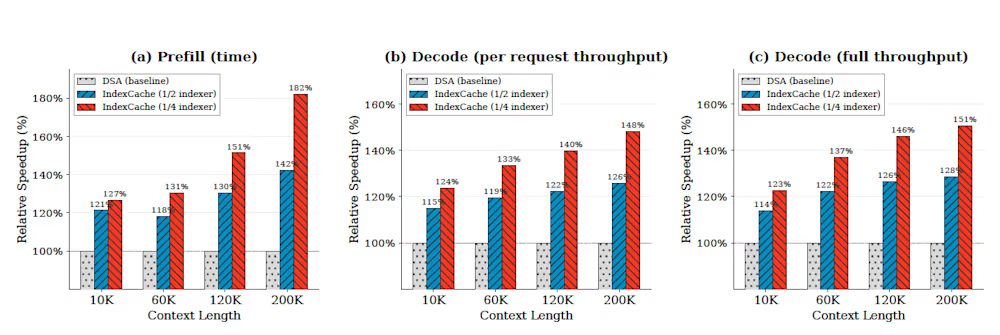

en.Wedoany.com Report on Mar 28th, Researchers from Tsinghua University and Z.ai have recently developed a technology called IndexCache, aimed at optimizing the long-context processing efficiency of large language models. By cutting up to 75% of redundant computation in sparse attention models, this technology accelerates the first token generation time by up to 1.82 times and improves generation throughput by 1.48 times under a context length of 200,000 tokens.

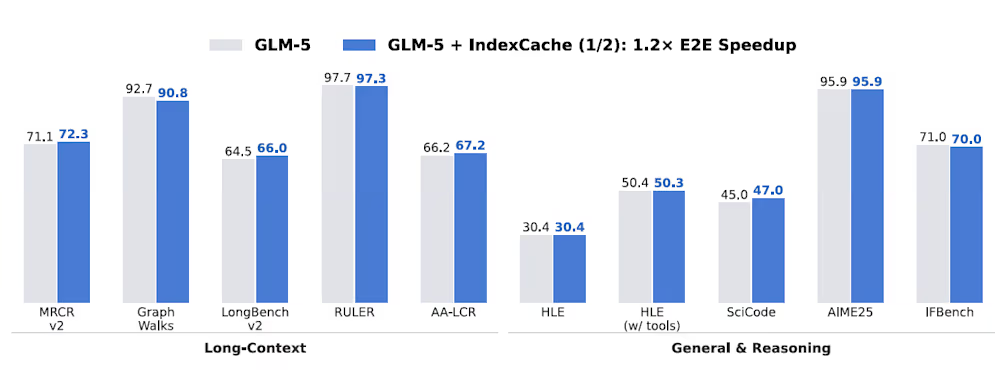

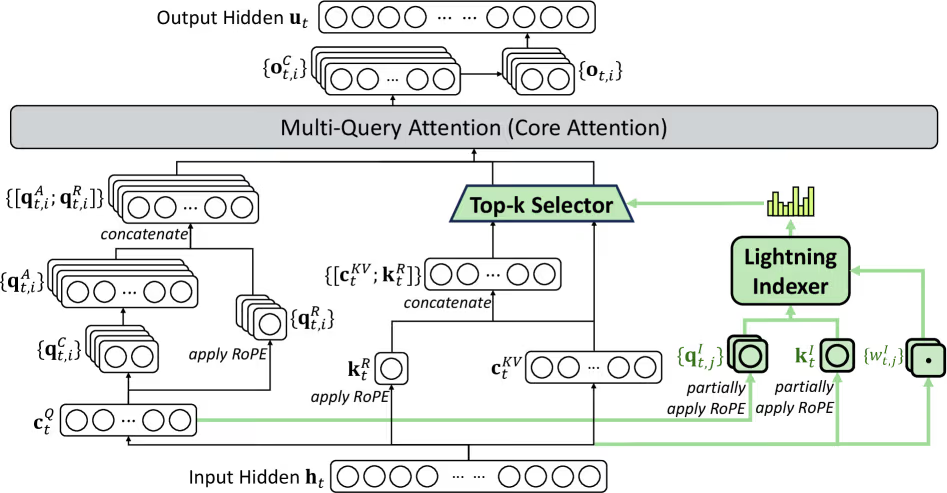

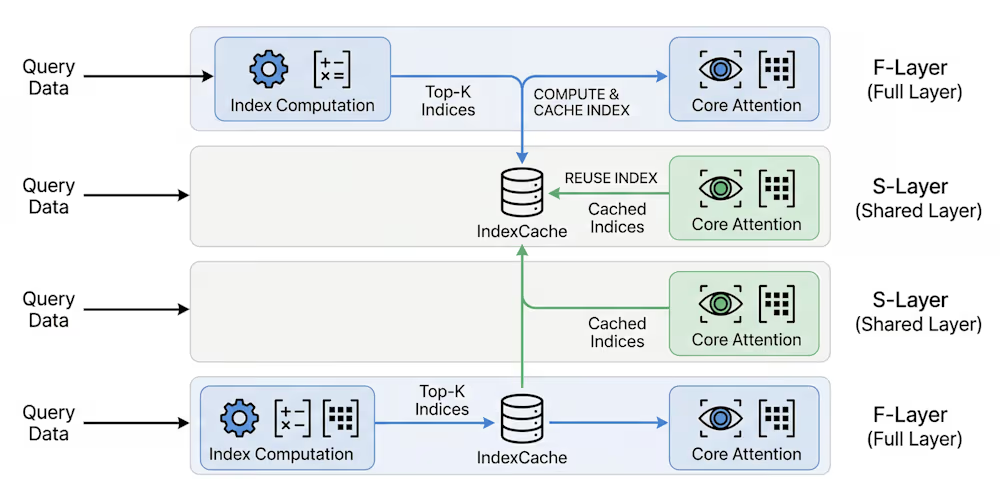

IndexCache is applicable to models using the DeepSeek sparse attention architecture, including the latest DeepSeek and GLM series. This technology has been preliminarily validated through tests on the GLM-5 model with 744 billion parameters, helping enterprises enhance the user experience of production-grade long-context models. The researchers found that in DeepSeek sparse attention models, the indexer itself operates with quadratic complexity, leading to slowdowns when processing long contexts. IndexCache addresses this by dividing model layers into full layers and shared layers, leveraging cross-layer redundancy to allow shared layers to reuse the cached index from the previous layer, thereby reducing the computational burden.

Paper co-author Bai Yushi told VentureBeat: "IndexCache is not a traditional KV cache compression or sharing technique. It eliminates redundancy by reusing indexes across layers, reducing computation rather than just memory footprint. It is complementary to existing methods." The technology offers two deployment approaches: a training-free method relies on a "greedy layer selection" algorithm to automatically optimize layer configuration, while a training-aware version optimizes network parameters through "multi-layer distillation loss" to support cross-layer sharing.

In tests on the 30-billion-parameter GLM-4.7 Flash model, IndexCache reduced prefill latency from 19.5 seconds to 10.7 seconds and increased decoding throughput from 58 tokens per second to 86 tokens per second. For enterprise teams, this translates directly into cost savings. Bai Yushi stated, "In long-context workloads such as RAG and document analysis, deployment costs are reduced by at least about 20%." The efficiency improvements do not compromise reasoning capability; the optimized model scored 92.6 on the AIME 2025 mathematical reasoning benchmark, outperforming the original baseline score of 91.0.

Development teams can access the open-source patch via GitHub for integration into existing inference stacks like vLLM or SGLang. Bai Yushi recommends calibration with domain-specific data to match actual workloads. This IndexCache technology not only addresses current computational bottlenecks but also drives AI model design towards optimizing inference efficiency.