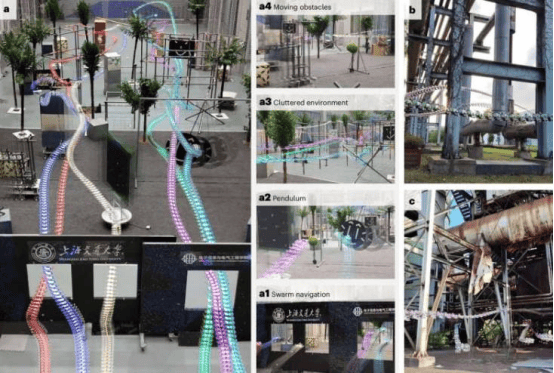

Researchers at Shanghai Jiao Tong University have published a study in Nature Machine Intelligence proposing a method, inspired by insect flight, that enables teams of drones to navigate complex environments autonomously at high speed. The work opens new opportunities for drone technology in high-end equipment manufacturing.

Although drones are now widely used, most still require manual operation, and many cannot fly through cluttered, crowded, or unknown environments without collisions. Drones that can make such flights often rely on expensive or bulky components.

Professors Zou Danping and Lin Weiyao of Shanghai Jiao Tong University said the research was inspired by the flight of tiny insects such as flies. Despite their tiny brains and limited sensing, these insects perform agile, intelligent maneuvers. Replicating this level of flight control has long been both a goal and a major challenge in robotics, because it requires tight integration of perception, planning, and control, all running on limited onboard computation.

Traditional methods for controlling multiple drones break autonomous navigation into several independent modules. Although effective, such pipelines let errors accumulate from module to module and delay the drones' responses, which increases the risk of collision. Professors Zou and Lin explained that the main goal of the research was to test whether a lightweight artificial neural network (ANN) could replace these classical pipelines with a compact end-to-end policy. The network takes sensor data as input and directly outputs control actions, much as flies use a small number of neurons to generate complex behaviors. The aim was to show that minimalist perception and computation can achieve high-performance autonomous flight.

The new system relies on a lightweight artificial neural network that generates control commands for quadrotors from ultra-low-resolution depth maps of just 12×16 pixels. Despite the low resolution, this input is enough for the network to understand its surroundings and plan actions. The network was trained efficiently in a custom simulator that supports both single-agent and multi-agent training.
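
The article does not say how the 12×16 depth maps are produced. As an illustration only, the sketch below assumes a full-resolution depth image is shrunk by keeping the nearest (minimum) depth in each cell, a conservative choice that stops thin obstacles from being averaged away. The function name `min_pool_depth` and this pooling rule are hypothetical, not the authors' pipeline.

```python
def min_pool_depth(depth, out_h=12, out_w=16):
    """Shrink a depth image (list of rows, values in metres) to out_h x out_w.

    Hypothetical preprocessing, not taken from the paper: each output cell
    keeps the MINIMUM depth of the pixels it covers, so the nearest obstacle
    in that region (even a thin pole) is never averaged away.
    """
    in_h, in_w = len(depth), len(depth[0])
    if in_h < out_h or in_w < out_w:
        raise ValueError("input must be at least out_h x out_w pixels")
    out = []
    for i in range(out_h):
        # Integer block boundaries; every block is non-empty when in_h >= out_h.
        r0, r1 = i * in_h // out_h, (i + 1) * in_h // out_h
        row = []
        for j in range(out_w):
            c0, c1 = j * in_w // out_w, (j + 1) * in_w // out_w
            row.append(min(depth[r][c] for r in range(r0, r1) for c in range(c0, c1)))
        out.append(row)
    return out
```

For example, a 48×64 image that is 10 m deep everywhere except for one pixel at 0.5 m (row 30, column 40) becomes a 12×16 map in which exactly one cell, at row 7 and column 10, reads 0.5 m.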

A key advantage of this multi-drone navigation method is that it relies on a compact deep neural network with only three convolutional layers. On an embedded computing board priced at US$21, the network runs smoothly and energy-efficiently, and training on an RTX 4090 GPU takes only two hours. The method also supports multi-robot navigation without centralized planning or explicit communication, enabling scalable deployment in swarms.
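
To make the scale of such a network concrete, here is a minimal pure-Python sketch of a policy with three convolutional layers that maps one 12×16 depth map to three control values. Everything specific in it is an assumption for illustration: the names `TinyDepthPolicy`, `conv2d_relu`, and `init_conv`, the channel counts, kernel sizes and strides, the random untrained weights, and reading the output as a desired acceleration. The published network may use different layers, additional state inputs, and a different output.

```python
import random


def conv2d_relu(x, w, b, stride=1):
    """Valid (unpadded) 2-D convolution followed by ReLU.

    x: [C_in][H][W] input, w: [C_out][C_in][k][k] kernels, b: [C_out] biases.
    """
    c_in, h_in, w_in = len(x), len(x[0]), len(x[0][0])
    k = len(w[0][0])
    h_out = (h_in - k) // stride + 1
    w_out = (w_in - k) // stride + 1
    out = []
    for o in range(len(w)):
        plane = []
        for i in range(h_out):
            row = []
            for j in range(w_out):
                s = b[o]
                for c in range(c_in):
                    for u in range(k):
                        for v in range(k):
                            s += w[o][c][u][v] * x[c][i * stride + u][j * stride + v]
                row.append(max(0.0, s))  # ReLU
            plane.append(row)
        out.append(plane)
    return out


def init_conv(rng, c_out, c_in, k):
    """Random small weights and zero biases (a trained policy would load learned values)."""
    w = [[[[rng.gauss(0.0, 0.1) for _ in range(k)] for _ in range(k)]
          for _ in range(c_in)] for _ in range(c_out)]
    return w, [0.0] * c_out


class TinyDepthPolicy:
    """Hypothetical three-conv-layer policy: one 12x16 depth map -> 3 outputs."""

    def __init__(self, seed=0):
        rng = random.Random(seed)
        # (weights, biases, stride); shapes follow from 3x3 kernels, no padding.
        self.layers = [
            (*init_conv(rng, 4, 1, 3), 1),  # 1x12x16 -> 4x10x14
            (*init_conv(rng, 8, 4, 3), 2),  # 4x10x14 -> 8x4x6
            (*init_conv(rng, 8, 8, 3), 1),  # 8x4x6   -> 8x2x4
        ]
        n_flat = 8 * 2 * 4
        self.head_w = [[rng.gauss(0.0, 0.1) for _ in range(n_flat)] for _ in range(3)]
        self.head_b = [0.0] * 3

    def __call__(self, depth):
        x = [depth]  # add a single input channel
        for w, b, stride in self.layers:
            x = conv2d_relu(x, w, b, stride)
        flat = [v for plane in x for row in plane for v in row]
        # Linear head; reading the 3 outputs as a desired acceleration is an assumption.
        return [sum(wi * fi for wi, fi in zip(ws, flat)) + bi
                for ws, bi in zip(self.head_w, self.head_b)]
```

This toy network has 1,115 parameters, so a forward pass is cheap even in plain Python. Because each policy sees only its own drone's depth map, a swarm could run one copy per drone with no messages exchanged, which is one simple way to realize the decentralized setup described above.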

A review of prior work shows that many deep-learning algorithms for drone navigation generalize poorly to real-world scenarios: they fail to account for unexpected obstacles or environmental changes, and they require large amounts of flight data labeled by human experts for training. By contrast, incorporating the quadrotor's physical model directly into the training process significantly improves training efficiency and real-world performance, including robustness and flexibility.
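
The idea of putting a physical model inside the training loop can be illustrated with a toy example of differentiating through a simulator. The sketch below is not the authors' method: it assumes a 1-D point mass, a hypothetical PD-style controller with a single learnable gain, and a loss that adds tracking error to a control-effort penalty. Forward-mode automatic differentiation with dual numbers (the `Dual` class, a hypothetical helper) carries the gradient of the loss through every simulated step, so the gain is improved directly from the physics rather than from labeled expert data.

```python
class Dual:
    """Number of the form val + der*eps with eps**2 == 0 (forward-mode AD)."""

    def __init__(self, val, der=0.0):
        self.val, self.der = float(val), float(der)

    @staticmethod
    def _lift(o):
        return o if isinstance(o, Dual) else Dual(o)

    def __add__(self, o):
        o = Dual._lift(o)
        return Dual(self.val + o.val, self.der + o.der)

    __radd__ = __add__

    def __sub__(self, o):
        o = Dual._lift(o)
        return Dual(self.val - o.val, self.der - o.der)

    def __rsub__(self, o):
        return Dual._lift(o) - self

    def __mul__(self, o):
        o = Dual._lift(o)
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)

    __rmul__ = __mul__


def rollout_loss(gain, goal=1.0, steps=500, dt=0.02, damping=2.0, effort=0.25):
    """Simulate a 1-D point mass from rest at 0 toward `goal` under the
    hypothetical controller acc = gain*(goal - pos) - damping*vel and return
    sum((pos - goal)**2 + effort*acc**2) * dt. `gain` may be a float or a Dual."""
    pos, vel, loss = Dual(0.0), Dual(0.0), Dual(0.0)
    for _ in range(steps):
        err = pos - goal
        acc = gain * (goal - pos) - damping * vel  # controller output
        loss = loss + (err * err + effort * (acc * acc)) * dt
        vel = vel + dt * acc  # semi-implicit Euler: update velocity first,
        pos = pos + dt * vel  # then position with the new velocity
    return loss


def train_gain(gain=1.0, lr=0.5, iters=60):
    """Gradient descent on the gain; d(loss)/d(gain) comes from seeding the
    gain's derivative with 1 and running the differentiable simulator."""
    for _ in range(iters):
        grad = rollout_loss(Dual(gain, 1.0)).der
        gain -= lr * grad
    return gain
```

For the continuous-time version of this system the loss works out to 1/k + 1/4 + r·k²/4 for gain k and effort weight r, which is minimized where k³ = 2/r, i.e. k = 2 for r = 0.25. Starting from a gain of 1, the discretized simulation trains the gain to close to that value.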

The research by Professors Zou, Lin, and their colleagues shows that small artificial neural networks can handle complex navigation tasks, suggesting that such models may be more capable than commonly assumed and offering insights into how large models work. The lightweight model trained in simulation performed well, challenging the common assumption that “more data is always better” and indicating that structural alignment and embedded physical priors may matter more than sheer data volume.

The findings show that neural networks built on basic physical principles can outperform networks trained on large amounts of labeled data, and that low-resolution depth images can still guide robot behavior accurately.

In the future, the method could be applied to more types of aircraft and tested in specific real-world scenarios, extending the capabilities of ultra-lightweight drones for tasks such as automatic selfies, drone racing, sports event broadcasting, search and rescue, and inspections in cluttered warehouses. The research team is currently exploring optical flow in place of depth maps to achieve fully autonomous flight, and is also working on the interpretability of end-to-end learning systems, hoping to clarify the network's internal representations and to gain insight into how insects perceive their environment and plan actions.