Researchers from the Nuclear Engineering and Radiological Sciences Department (NERS) at the University of Michigan, in collaboration with Idaho National Laboratory, conducted a study on nuclear microreactors and machine learning (ML). The abstract has been published, and the full paper, titled “Deep Reinforcement Learning-Based Transient and Load-Following Control for Nuclear Microreactors,” appeared in the July issue of Energy Conversion and Management: X.

The study is based on HolosGen's Holos-Quad microreactor design and investigates a new machine learning approach, multi-agent reinforcement learning (RL), for modeling how a nuclear microreactor adjusts its power output in response to grid demand. The researchers state that this method trains more efficiently and in less time than previous approaches, enabling faster reactor modeling.

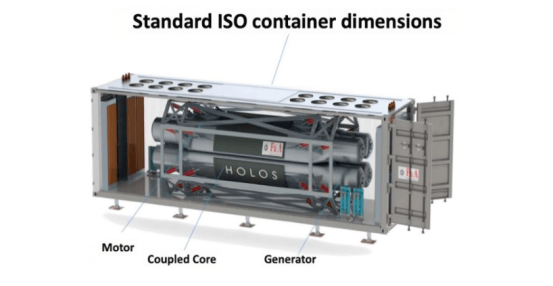

Holos-Quad is a high-temperature gas-cooled microreactor designed for scalable, self-contained power generation. Its structure is inspired by closed-loop turbojet engines, replacing the traditional combustion chamber with sealed nuclear fuel capsules that integrate fuel, moderator, heat exchange, and energy conversion within a single pressure vessel. The compact design fits into a standard 40-foot ISO container.

The research team focused on simulating load-following, i.e., increasing or decreasing power output based on grid demand. The Holos-Quad system controls power by adjusting the positions of eight control drums surrounding the reactor core. One side of each control drum is lined with neutron-absorbing material: rotating that side inward, toward the core, absorbs more neutrons and reduces power, while rotating it outward increases power. In the multi-agent RL approach, the eight control drums are modeled as eight independent agents, each controlling its own drum while having access to information about the entire core.
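The drum-per-agent arrangement can be illustrated with a small sketch. This is not the researchers' model: the cosine-shaped reactivity curve, the `DrumAgent` class, the `gain` and `max_step` values, and the observation keys are all hypothetical, and a simple proportional rule stands in for a trained RL policy. It only shows the structure described above: eight agents, one drum each, all reading the same core-wide observation.

```python
import math
from dataclasses import dataclass

NUM_DRUMS = 8  # from the article: eight control drums surround the core


def drum_reactivity(angle_deg: float, max_worth: float = 1.0) -> float:
    """Toy reactivity contribution of one drum (hypothetical cosine shape).

    angle_deg = 0   -> absorber faces the core (most negative reactivity)
    angle_deg = 180 -> absorber faces away from the core (most positive)
    """
    return -max_worth * math.cos(math.radians(angle_deg))


@dataclass
class DrumAgent:
    """One agent per drum; it acts only on its own drum."""
    drum_index: int
    gain: float = 20.0  # hypothetical: degrees of rotation per unit power error

    def act(self, observation: dict) -> float:
        # A trained RL policy would go here. This placeholder rotates the
        # absorber outward when power is below target, inward when above.
        error = observation["target_power"] - observation["power"]
        return self.gain * error


def step_drums(angles, agents, observation, max_step=5.0):
    """Apply each agent's action to its own drum, with rate and range limits."""
    new_angles = list(angles)
    for agent in agents:
        delta = max(-max_step, min(max_step, agent.act(observation)))
        i = agent.drum_index
        new_angles[i] = max(0.0, min(180.0, new_angles[i] + delta))
    return new_angles


agents = [DrumAgent(i) for i in range(NUM_DRUMS)]
angles = [90.0] * NUM_DRUMS  # neutral position in this toy model

# Every agent receives the same core-wide observation.
obs = {"power": 0.8, "target_power": 1.0, "drum_angles": tuple(angles)}
angles = step_drums(angles, agents, obs)
total_reactivity = sum(drum_reactivity(a) for a in angles)
```

In this toy setup, power below the target makes every agent rotate its absorber away from the core, so the summed reactivity becomes positive. A single-agent formulation would instead have one policy output all eight rotations at once.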

The team compared the multi-agent RL model with two baselines: a single-agent method, in which one agent controls all eight drums, and the industry-standard proportional-integral-derivative (PID) method, a feedback-based control loop. According to the researchers, the multi-agent RL model compared favorably with both, most notably in training efficiency.
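For readers unfamiliar with the PID baseline, the following is a minimal sketch of a PID loop tracking a changing power demand. It rests on loud assumptions: the reactor is replaced by a toy first-order lag (nothing like real reactor kinetics), and the gains, time constant `tau`, and demand profile are invented for illustration rather than taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class PID:
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, setpoint: float, measurement: float, dt: float) -> float:
        """Return the control signal from the P, I, and D terms."""
        error = setpoint - measurement
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_load_following(setpoints, dt=0.1, tau=2.0):
    """Track a demand profile with a toy plant: d(power)/dt = (u - power) / tau."""
    kp, ki, kd = 2.0, 1.0, 0.05  # hypothetical gains, not tuned for any reactor
    # Pre-load the integral term so the loop starts at steady state.
    pid = PID(kp, ki, kd, integral=setpoints[0] / ki)
    power = setpoints[0]
    history = []
    for target in setpoints:
        u = pid.update(target, power, dt)
        power += dt * (u - power) / tau  # explicit Euler step of the toy plant
        history.append(power)
    return history


# Demand holds at 100% for 20 s, then drops to 60% for 20 s.
demand = [1.0] * 200 + [0.6] * 200
trace = simulate_load_following(demand)
```

The controller reacts only to the measured error between demand and output. That is the feedback loop the article refers to, and it is the behavior the RL agents were benchmarked against.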

The researchers noted that their machine learning model requires extensive validation under more complex and realistic conditions before it can be commercially applied in the nuclear power industry. However, the findings “open a more effective pathway for reinforcement learning in autonomous nuclear microreactors.”