en.Wedoany.com Reported - On the evening of April 20th, Moonshot AI released and open-sourced its new flagship model, Kimi K2.6. According to an announcement on Moonshot AI's official WeChat account, the model improves across the board in general Agent capabilities, code generation, and visual understanding, and on multiple authoritative benchmarks it matches or outperforms GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro. Kimi K2.6 is now available to all users on kimi.com, in the latest version of the Kimi app, through the Kimi API, and in the Kimi Code programming assistant.

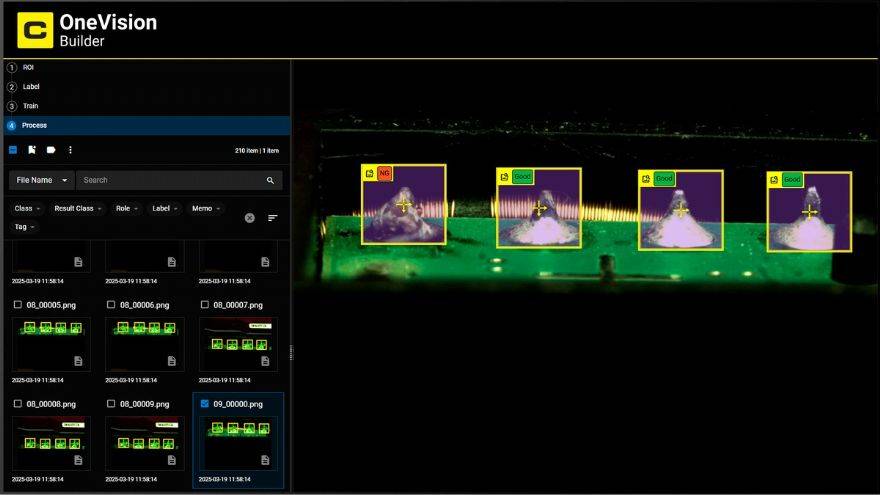

K2.6 is Moonshot AI's strongest code model to date, with significantly enhanced long-context coding capabilities. On Kimi Code Bench, the company's internal code evaluation benchmark, K2.6 scored approximately 20% higher than the previous-generation K2.5. On SWE-Bench Pro, which tests real-world software engineering ability, K2.6 scored 58.6 points, ahead of GPT-5.4 xhigh at 57.7, Gemini 3.1 Pro at 54.2, and Claude Opus 4.6 at 53.4. On the full version of "Humanity's Last Exam" with tools, K2.6 scored 54.0 points, while the three mainstream closed-source models all scored lower. On Terminal-Bench 2.0, K2.6 scored 66.7 points, second only to Gemini 3.1 Pro's 68.5. In practical application scenarios, K2.6 can work continuously for 13 hours while writing or modifying more than 4,000 lines of code, and it combines coding with visual capabilities to deliver professional-grade web applications.

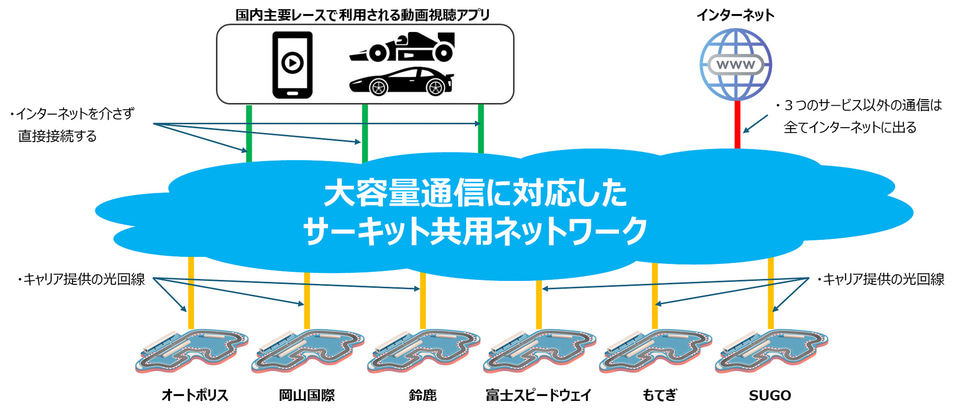

The Agent cluster architecture powered by K2.6 supports up to 300 sub-Agents running in parallel and executing approximately 4,000 collaborative steps, delivering multiple outputs end to end in a single run, from documents to web pages, slide decks, and spreadsheets. On the BrowseComp Agent Swarm test, which targets Agent orchestration capabilities, K2.6 scored 86.3 points against 78.4 for GPT-5.4. For high-load workflows and proactive Agent frameworks such as OpenClaw and Hermes Agent, K2.6 supports continuous autonomous operation for up to 5 days. Moonshot AI's internal reinforcement learning infrastructure team has already used K2.6 to drive an Agent that ran autonomously for 5 consecutive days, handling monitoring, fault response, and system operations. Results from the internal Claw Bench test show that K2.6's overall performance improved by 10% over K2.5.
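The parallel sub-Agent pattern described above can be illustrated with a minimal sketch. It is not Moonshot AI's implementation, which the announcement does not describe; `run_sub_agent` and `orchestrate` are hypothetical names, and the only figure taken from the article is the cap of 300 sub-Agents running at once. The sketch shows one common way to bound concurrency with a semaphore while fanning out many tasks.

```python
import asyncio

MAX_PARALLEL = 300  # from the article: up to 300 sub-Agents in parallel


async def run_sub_agent(task_id: int, limiter: asyncio.Semaphore) -> str:
    """Hypothetical stand-in for one sub-Agent handling one task."""
    async with limiter:          # at most MAX_PARALLEL bodies run at once
        await asyncio.sleep(0)   # placeholder for real model and tool calls
        return f"task-{task_id}: done"


async def orchestrate(num_tasks: int, max_parallel: int = MAX_PARALLEL) -> list[str]:
    """Fan out num_tasks sub-Agents and collect results in task order."""
    limiter = asyncio.Semaphore(max_parallel)
    jobs = [run_sub_agent(i, limiter) for i in range(num_tasks)]
    return await asyncio.gather(*jobs)


if __name__ == "__main__":
    results = asyncio.run(orchestrate(1000))
    print(len(results), results[0])
```

`asyncio.gather` returns results in the order the tasks were submitted, so an orchestrator built this way can merge sub-Agent outputs deterministically even though they finish in arbitrary order.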

K2.6 retains the Mixture-of-Experts (MoE) architecture of K2.5: 1 trillion total parameters, 32 billion active parameters, 384 experts with 8 activated per token, a 256K context length, and native support for image and video input. In a local deployment test on a Mac, K2.6 optimized the inference process using the Zig language; over more than 4,000 tool calls and 12 hours of continuous operation, it raised throughput from about 15 tokens per second to about 193 tokens per second, ending up approximately 20% faster than LM Studio. In another test, K2.6 autonomously completed an in-depth refactoring of exchange-core, an 8-year-old open-source financial matching engine. The work involved 13 hours of continuous operation, more than 1,000 tool calls, changes to more than 4,000 lines of code, and a redesign of the core thread topology, and it yielded a 185% improvement in median throughput.
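To make the "384 experts, 8 activated per token" figure concrete, here is a minimal sketch of generic top-k expert routing. It assumes softmax gating over the selected experts, which is a common textbook choice; the article does not describe K2.6's actual router, so `route_token` and the random router scores are purely illustrative.

```python
import math
import random

NUM_EXPERTS = 384  # from the article
TOP_K = 8          # from the article: experts activated per token


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k highest-scoring experts and normalize their weights.

    Returns a list of (expert_index, weight) pairs whose weights sum to 1.
    Softmax over the selected logits is an assumption, not K2.6's documented gate.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    top = ranked[:top_k]
    peak = max(router_logits[i] for i in top)   # subtract max for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


if __name__ == "__main__":
    rng = random.Random(0)
    logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # fake router scores
    for expert, weight in route_token(logits):
        print(f"expert {expert:3d}  weight {weight:.3f}")
    print(f"experts used per token: {TOP_K / NUM_EXPERTS:.2%}")
    print(f"active parameter share: {32e9 / 1e12:.1%}")
```

With the article's numbers, each token touches 8 of 384 experts (about 2.08%) and 32 billion of 1 trillion parameters (3.2%), which is why an MoE model of this size can run with far less compute per token than a dense model with the same total parameter count.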

The Kimi Code programming assistant has launched a membership plan starting at 39 yuan per month. The Kimi Agent mode supports creating and invoking skills, and the system comes pre-loaded with hundreds of officially recommended skills, including investment research skill packs. The full open-sourcing of K2.6 further strengthens the influence of Chinese large models in the open-source community and bolsters the product case behind Moonshot AI's previously disclosed plans to evaluate a Hong Kong IPO and raise a new financing round of approximately $1 billion.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com