en.Wedoany.com Reported - American tech giant Google is in discussions with California-based chip manufacturer Marvell to jointly develop two new types of inference chips. This collaboration aims to enhance Google's hardware capabilities in the field of artificial intelligence.

According to reports from The Information and FundaAI, the discussions cover two designs: a memory processing unit that would work alongside Google's Tensor Processing Units (TPUs), and a new type of TPU designed specifically for running AI models. Sources familiar with the matter said the memory processor would share AI workloads with the TPU according to each workload's compute and memory requirements. The two companies aim to complete the designs by 2027, with plans to produce approximately 2 million units. However, the reports note that the discussions are still at an early stage and that specific figures may change.

Google has previously bought data center processors from Marvell, such as CXL controller chips, but those were standard products, whereas the current negotiations involve custom chips. Broadcom is currently the exclusive designer of Google's TPUs, and the two companies announced a long-term agreement earlier this month under which Broadcom will continue developing Google's processors until 2031. However, reports indicate that Google has been exploring alternatives to Broadcom since 2023, partly because of the high fees Broadcom charges, which have risen alongside growing processor demand.

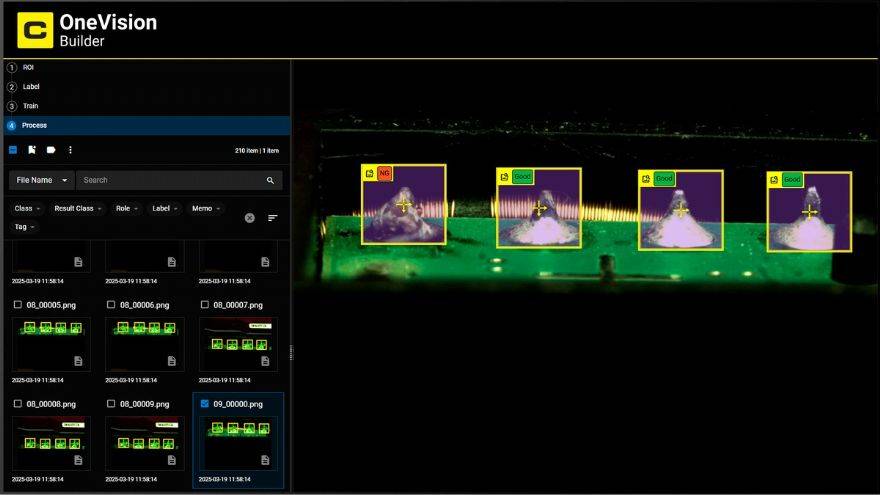

Google's development of new inference chips has accelerated following Nvidia's launch of its Language Processing Unit at the GTC conference last month. Meanwhile, Microsoft unveiled its Maia 200 inference chip earlier this year, and Meta recently showcased the fourth generation of its Meta Training and Inference Accelerator chips, which primarily support generative AI inference tasks. Last week, Meta announced a collaboration with Broadcom to develop multiple generations of MTIA hardware. OpenAI is also working with Broadcom on custom inference chips, although there have been no updates since the deal was announced in October 2025.

Dedicated inference chips are receiving increasing attention because of shifts in AI training workloads and the rise of agentic AI tools, whose workloads require high compute throughput and low latency that general-purpose processors struggle to deliver. The collaboration between Google and Marvell is expected to further advance the development of AI inference chips and strengthen Google's hardware position in a competitive market.

This article is compiled by Wedoany.