Generative AI models like ChatGPT and Gemini rely on foundation models trained on vast amounts of data to produce answers. Engineers hope to build similar foundation models that teach robots new skills, but collecting instructional data and transferring it across robotic systems is difficult. Teleoperating hardware with technologies like virtual reality (VR) to record training demonstrations is time-consuming and laborious, while internet videos rarely offer step-by-step demonstrations of specialized tasks for specific robots, so they provide limited guidance.

Researchers at the Computer Science and Artificial Intelligence Laboratory (CSAIL) and the Institute for Robotics and Artificial Intelligence at MIT recently developed a simulation-driven approach called "PhysicsGen" to address this challenge. The method tailors training data to a particular robot, helping it find the most efficient motions for completing a task.

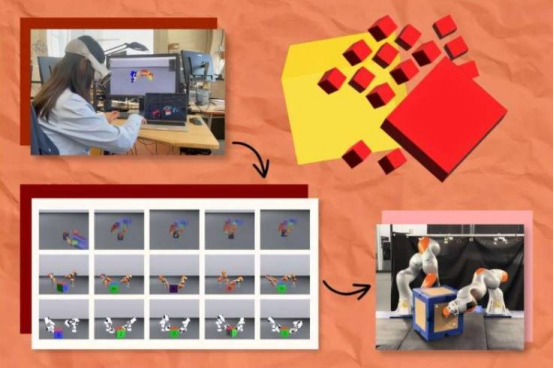

PhysicsGen creates data that generalizes to specific robots and conditions through a three-step process. First, a VR headset tracks how a human's hands manipulate objects (such as blocks) and maps these interactions into a 3D physics simulator, where key points of the hand are visualized as small spheres that mirror the gestures. Second, these points are remapped onto a 3D model of a specific machine (such as a robotic arm) and attached to the precise "joints" where the system rotates. Third, PhysicsGen uses trajectory optimization to simulate the most efficient motions for completing a task, so the robot learns the best way to perform an operation (such as repositioning a box).
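The second and third steps can be illustrated with a deliberately small sketch. It is not PhysicsGen's implementation: the planar two-link arm, the link lengths, the function names, and the smoothing objective are all assumptions chosen for illustration, and the real system optimizes inside a physics simulator rather than with a simple quadratic cost. Here a noisy "demonstrated" fingertip path is remapped to joint angles with analytic inverse kinematics, and a toy trajectory optimization then trades fidelity to the demonstration against smoothness.

```python
import numpy as np

# Hypothetical link lengths (meters) for a planar two-link arm.
LINK_1, LINK_2 = 0.30, 0.25


def inverse_kinematics(xy):
    """Remap one demonstrated fingertip position to joint angles (one elbow branch)."""
    x, y = xy
    cos_q2 = (x**2 + y**2 - LINK_1**2 - LINK_2**2) / (2 * LINK_1 * LINK_2)
    q2 = np.arccos(np.clip(cos_q2, -1.0, 1.0))
    q1 = np.arctan2(y, x) - np.arctan2(LINK_2 * np.sin(q2), LINK_1 + LINK_2 * np.cos(q2))
    return np.array([q1, q2])


def forward_kinematics(q):
    """Fingertip position reached by the joint angles q = (q1, q2)."""
    q1, q2 = q
    return np.array([
        LINK_1 * np.cos(q1) + LINK_2 * np.cos(q1 + q2),
        LINK_1 * np.sin(q1) + LINK_2 * np.sin(q1 + q2),
    ])


def optimize_trajectory(q_ref, smooth_weight=5.0):
    """Toy trajectory optimization.

    Minimizes sum_t ||q_t - q_ref_t||^2 + w * sum_t ||q_{t+1} - q_t||^2,
    whose minimizer solves the linear system (I + w D^T D) q = q_ref.
    """
    steps = len(q_ref)
    diff = np.diff(np.eye(steps), axis=0)  # (steps-1, steps) finite-difference operator
    system = np.eye(steps) + smooth_weight * diff.T @ diff
    return np.linalg.solve(system, q_ref)


def roughness(q):
    """Sum of squared joint-angle changes between consecutive steps."""
    return float(np.sum(np.diff(q, axis=0) ** 2))


def demo():
    rng = np.random.default_rng(0)
    t = np.linspace(0.0, 1.0, 50)
    # Synthetic stand-in for a tracked hand keypoint: a straight line plus jitter.
    clean_path = np.stack([0.25 + 0.15 * t, 0.10 + 0.20 * t], axis=1)
    noisy_path = clean_path + rng.normal(scale=0.005, size=clean_path.shape)
    q_ref = np.array([inverse_kinematics(p) for p in noisy_path])
    q_opt = optimize_trajectory(q_ref)
    return q_ref, q_opt


if __name__ == "__main__":
    q_ref, q_opt = demo()
    print("roughness before:", roughness(q_ref))
    print("roughness after: ", roughness(q_opt))
```

Raising `smooth_weight` yields smoother joint motion at the cost of drifting further from the demonstration. A contact-rich task like the ones described here would also need dynamics and collision constraints, which this sketch omits.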

Each simulation serves as a detailed training data point that shows the robot one of several possible ways to handle an object. Once these are built into its policy, the robot can complete a task in a variety of ways and can try a different motion if the first one fails. Lujie Yang, a PhD student in electrical engineering and computer science at MIT and a CSAIL affiliate, said: "We are creating robot-specific data without needing humans to rerecord specialized demonstrations for each machine, instead scaling data in an autonomous and efficient manner to make task instructions applicable to a wider range of machines."

PhysicsGen could let engineers build massive datasets to guide machines such as robotic arms and dexterous hands. For example, it could help two robotic arms work together to pick up items in a warehouse and place them in the correct boxes for shipping, or guide two robots as they cooperate to put away cups in a home. It can also convert data designed for older robots or different environments into instructions suitable for new machines, making existing datasets more widely usable.

PhysicsGen performed well in testing. In one virtual experiment, a floating robotic hand needed to rotate a block to a target position. Trained on PhysicsGen's massive dataset, the digital robot executed the task with 81% accuracy, a 60% improvement over a baseline that learned solely from human demonstrations. The researchers also found that PhysicsGen improved how virtual robotic arms collaborate to manipulate objects: the additional training data created by the system helped pairs of robots complete tasks successfully, outperforming a baseline trained purely on human demonstrations by 30%. In experiments with a pair of real robotic arms, robots that deviated from the intended trajectory or mishandled an object were able to recover mid-task by referring to alternative trajectories in their library of instructional data.
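The article does not describe how a robot selects an alternative trajectory, so the following is only one plausible sketch of the recovery behavior: a nearest-neighbor lookup over a small trajectory library. The function name `find_recovery_plan`, the 2D states, and the two example trajectories are all hypothetical.

```python
import numpy as np


def find_recovery_plan(library, state):
    """Find the library waypoint closest to the current state.

    library: list of arrays, each of shape (num_waypoints, state_dim).
    Returns (trajectory index, waypoint index, remaining waypoints to follow).
    This nearest-neighbor rule is an assumption, not the published method.
    """
    if not library:
        raise ValueError("trajectory library is empty")
    state = np.asarray(state, dtype=float)
    best = None
    for traj_idx, traj in enumerate(library):
        dists = np.linalg.norm(traj - state, axis=1)
        way_idx = int(np.argmin(dists))
        if best is None or dists[way_idx] < best[0]:
            best = (dists[way_idx], traj_idx, way_idx)
    _, traj_idx, way_idx = best
    return traj_idx, way_idx, library[traj_idx][way_idx:]


# Two hypothetical ways to move an object from (0, 0) to the goal (1, 1).
route_a = np.array([[0.0, 0.0], [0.5, 0.0], [1.0, 0.0], [1.0, 0.5], [1.0, 1.0]])
route_b = np.array([[0.0, 0.0], [0.0, 0.5], [0.0, 1.0], [0.5, 1.0], [1.0, 1.0]])
library = [route_a, route_b]

# The robot was following route_a but the object slipped to this state.
slipped_state = [0.1, 0.6]
traj_idx, way_idx, remaining = find_recovery_plan(library, slipped_state)
```

In this example the slipped state lies closest to a waypoint on `route_b`, so the robot would switch to that route and follow its remaining waypoints to the goal instead of restarting the task.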

Senior author Russ Tedrake said that this imitation-guided data generation technique combines the strengths of human demonstration with the power of robot motion-planning algorithms. In the future, he suggested, foundation models may be able to supply this kind of information themselves, and data generation techniques like this one would then serve as a post-training recipe for those models.

PhysicsGen may soon expand to new domains, diversifying the tasks machines can perform. Yang gave an example: the team would like to use PhysicsGen to teach a robot to pour water when it has only been trained to put away dishes. The pipeline not only generates dynamically feasible motions for familiar tasks but could also create a diverse library of physical interactions that help the robot complete entirely new tasks.

However, the MIT researchers caution that although creating large amounts of broadly applicable training data could eventually help build a foundation model for robots, that remains a long-term goal. For now, the CSAIL-led team is investigating how PhysicsGen can use vast unstructured resources (such as internet videos) as simulation seeds, transforming everyday visual content into rich data that teaches machines tasks no one has explicitly demonstrated to them.

Yang and her colleagues also plan to make PhysicsGen more useful for robots with varied shapes and configurations by drawing on datasets of real robot demonstrations, which capture how robotic joints move rather than human ones. They also intend to incorporate reinforcement learning so that PhysicsGen's datasets can go beyond human-provided examples, and to add advanced perception techniques that help robots perceive and interpret their surroundings, analyze them, and adapt to the complexities of the physical world. For now, PhysicsGen demonstrates how AI can help train different robots to manipulate rigid objects. In the future, it may help robots find the best ways to handle soft objects (such as fruit) and deformable objects (such as clay), although these interactions are not yet easy to simulate.