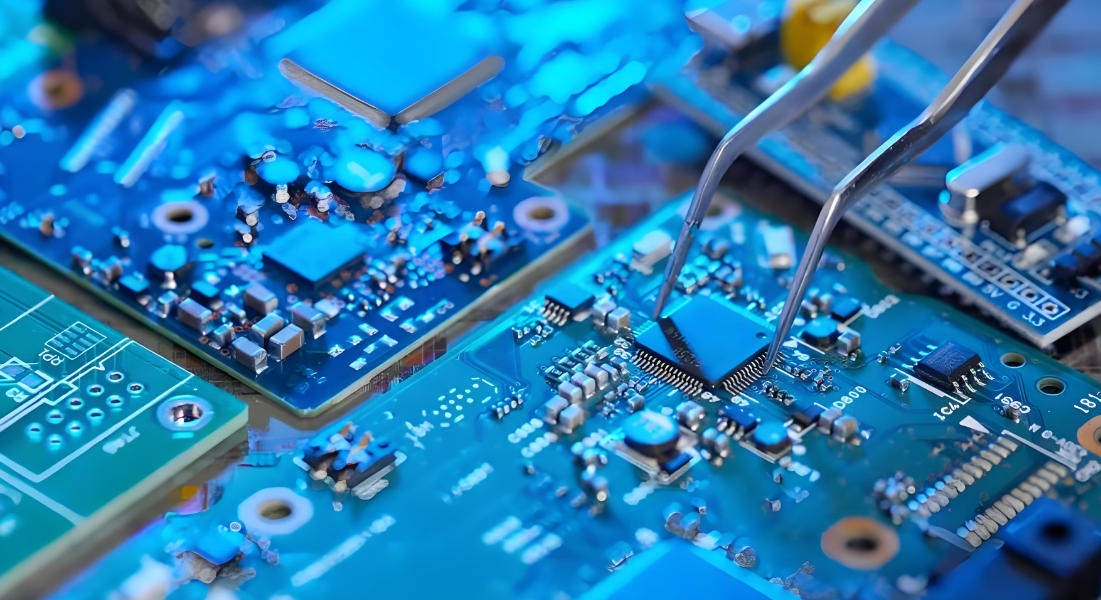

en.Wedoany.com Report on Mar 30th: A recent research report from CITIC Securities points out that improving AI training and inference efficiency through a "hyper-node" architecture has become a clear industry trend. This architecture tightly interconnects multiple AI accelerators, such as GPUs, over a high-bandwidth, low-latency Scale-up network, supplemented by mechanisms such as memory pooling and direct memory access. It addresses the cross-node communication bottleneck in large-scale clusters, thereby significantly improving overall computing power utilization and task processing efficiency.

The report analyzes three key infrastructure segments that stand to gain significant incremental market opportunities from this trend. The first is the switching chips that interconnect GPUs. As the core component of a hyper-node's high-speed internal network, these chips will see purely incremental demand as Scale-up networks become standard. The report notes that the switching chip segment has favorable commercial attributes and tends to form a stable oligopoly, with Ethernet solutions currently emerging as the primary technical direction. As the localization of computing chips accelerates, supporting domestic Ethernet switching chip solutions have begun to be deployed, offering substantial growth potential. According to CITIC Securities' estimates, the global incremental market for this segment is expected to reach $100 billion by 2028, of which import substitution is estimated to account for $5 billion. Compared with the current revenue of approximately RMB 1 billion for leading domestic companies, the future growth potential is considerable.

The second is the liquid cooling segment. The hyper-node architecture packs high-power computing units densely together, which sharply increases the power consumption and heat output of each cabinet. Traditional air cooling can no longer meet these demands, which will drive a rapid rise in the penetration rate of liquid cooling in high-performance computing clusters. At the same time, more complex and efficient liquid cooling solutions will raise average selling prices. The report estimates that by 2028, the incremental market for liquid cooling driven by hyper-nodes will be approximately $13 billion.

Finally, there is the in-cabinet power supply segment. The logic is similar to that for liquid cooling: the steep rise in cabinet power density places heavy demands on the power rating, reliability, and energy efficiency of the power supply system. To keep high-power-density cabinets running stably, higher-power, more efficient power modules will become essential, which will directly raise the average selling price and value of in-cabinet power supply products. CITIC Securities estimates the incremental market for this segment at about $24 billion by 2028.

In summary, CITIC Securities believes that hyper-nodes are not only a technological path for the evolution of AI computing infrastructure but will also drive growth in scale and a revaluation of key upstream segments in the industry chain. Among them, the switching chip segment is particularly worthy of investor attention because of its broad import substitution prospects and large untapped market. In addition, advanced optical interconnection technologies built around switching chips, such as co-packaged optics, also present substitution opportunities.