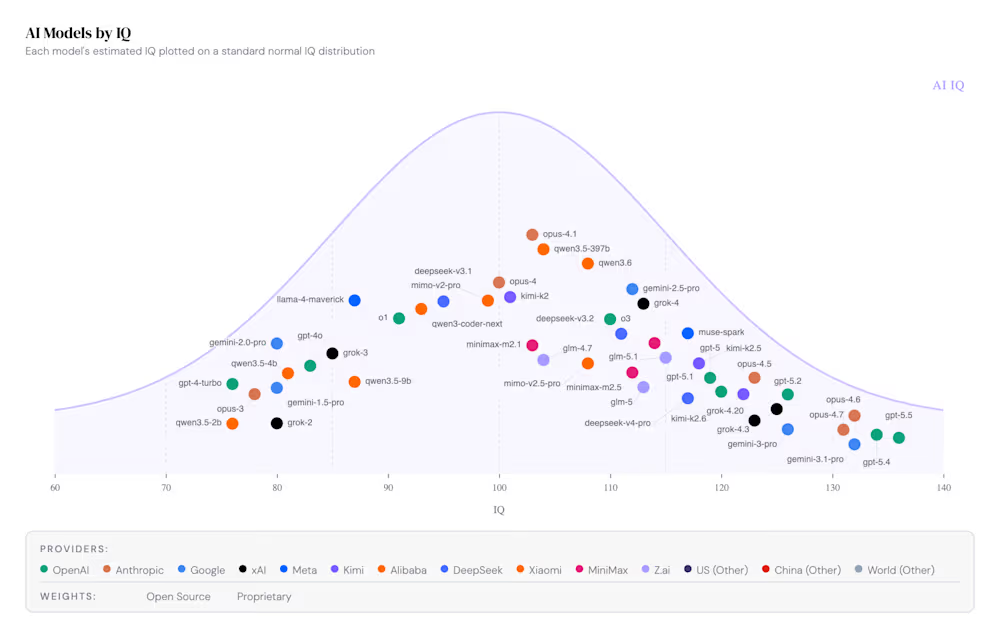

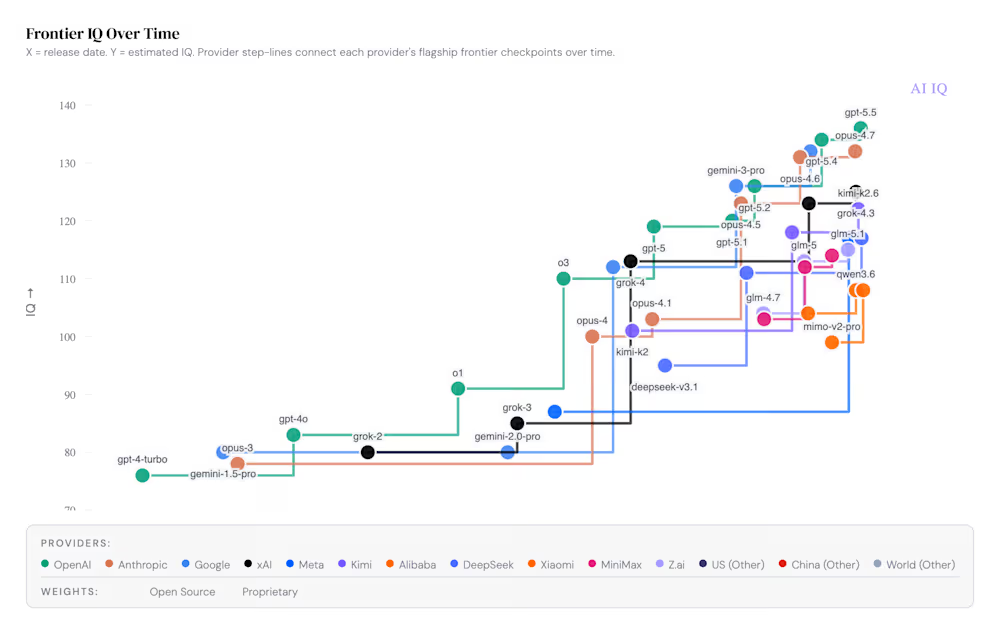

en.Wedoany.com Reported - A startup project called AI IQ has migrated the evaluation framework of traditional IQ tests to the field of artificial intelligence, estimating intelligence quotients and publishing rankings for over 50 mainstream language models globally. According to real-time data released by the project, OpenAI's GPT-5.5 temporarily holds the top spot with an estimated IQ close to 136, but the gap with competitors such as Anthropic's Opus 4.7 (IQ approximately 132) and Google's Gemini 3.1 Pro (IQ approximately 131) has narrowed to its smallest historical margin.

The project was founded and led by Ryan Shea, a Princeton University mechanical engineering graduate and co-founder of the blockchain platform Stacks. Its evaluation methodology is based on a composite formula: 12 industry-recognized benchmark tests are grouped into four reasoning dimensions—abstraction, mathematics, coding, and scholarship—and the simple average of the four dimension scores is taken as the model's composite IQ. The abstraction dimension references the notoriously difficult pattern recognition tests ARC-AGI-1 and ARC-AGI-2; the mathematics dimension includes FrontierMath, AIME, and ProofBench; the coding dimension uses Terminal-Bench 2.0, SWE-Bench Verified, and SciCode; and the scholarship dimension draws from Humanity's Last Exam, CritPt, and GPQA Diamond. Each raw score is mapped to an implied IQ through what the website calls a "manually calibrated difficulty curve," with score ceilings set for benchmarks susceptible to data contamination or of lower difficulty to prevent inflated scores.

The data shows that over 50 cutting-edge large language models are currently available on the market for invocation, supplied by more than 14 vendors spanning the United States, China, and Europe. The performance of models from Chinese manufacturers is concentrated in the mid-range, with products like Kimi K2.6, GLM-5, DeepSeek-V3.2, Qwen3.6, and MiniMax-M2.7 achieving IQ scores concentrated between 112 and 118. This intensely competitive cost-effectiveness tier provides enterprise users with pragmatic choices beyond the absolute top-tier models. On the cost dimension, AI IQ has plotted a scatter diagram of IQ versus effective cost. The data reveals that GPT-5.5 and Opus 4.7 cost over $30 and $50 per task respectively, while models like GPT-5.4-mini, DeepSeek-V3.2, and MiniMax-M2.7 can achieve IQ scores of 112 to 120 while keeping per-task costs between $1 and $5. This divergence in price and performance makes routing architectures—which assign different models based on task difficulty—the dominant paradigm for current enterprise-level AI deployment.

Beyond cognitive ability, the project has also introduced an emotional intelligence assessment, calculating a composite EQ for each model by weighting its EQ-Bench 3 Elo score and Arena Elo score at 50% each. In the IQ versus EQ scatter plot, Anthropic's Opus 4.7 occupies the advantageous upper-right quadrant with an EQ score close to 132, demonstrating a combination of high cognitive and high emotional capabilities; OpenAI's GPT-5.5 and GPT-5.4 lead in intelligence but have slightly lower emotional scores. The website's creators implemented a corrective measure, proactively deducting 200 Elo points from the EQ-Bench component for the Anthropic model series to eliminate potential scoring bias arising from its use of the Anthropic model Claude as a judge.

The evaluation framework has sparked polarized reactions on social media. Some enterprise technical personnel believe it transforms a complex market landscape into intuitive graphics, facilitating understanding of each model's progress and positioning. However, many researchers and commentators warn that compressing the uneven, varied capabilities of language models into a single score may create a dangerous illusion of precision. Critics point out that large models often exhibit so-called "jagged intelligence," performing brilliantly on graduate-level physics problems yet potentially failing on tasks designed for children, and that a composite score may mask such disparities. Other users question the website's calibration curve, citing a lack of fully transparent public details on data transformation. From a broader perspective, AI IQ's data chronicles the process by which frontier model IQs have surged from approximately 75 points in late 2023 to over 135 points currently in just 30 months—a blistering iteration speed that itself constantly challenges the validity of any static evaluation framework.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com