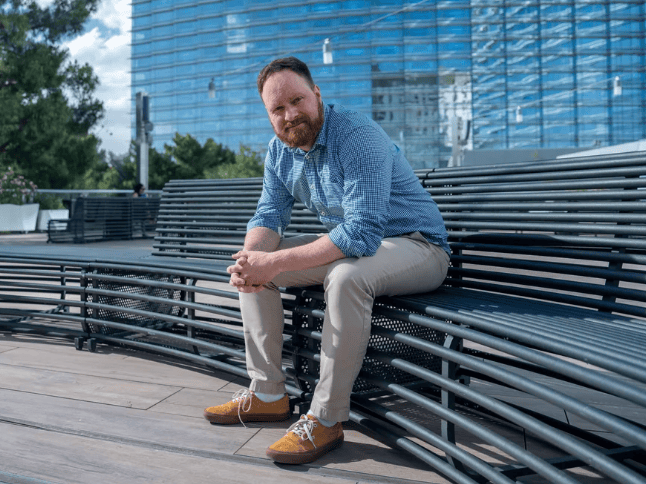

en.Wedoany.com Reported - US artificial intelligence company Runway is accelerating its strategic shift from video generation to modeling the physical world. Co-founder and CEO Cristóbal Valenzuela spelled out this pivot on the TechCrunch Equity podcast: Runway is moving beyond content creation tools and committing fully to general world models, aiming for AI that not only generates pixels but also understands and simulates the physical world behind them. This path points towards gaming, robotics, and even "directions closer to general intelligence," putting the company in direct competition with top-tier labs like Google DeepMind.

Runway's transformation is backed by substantial capital. On February 10 this year, Runway announced the completion of a $315 million Series E funding round led by General Atlantic, with participation from Nvidia, AMD, Adobe Ventures, Fidelity, and others, bringing its valuation to $5.3 billion. The stated purpose of the round is to pre-train the next generation of world models and bring them to new products and industries. By the end of March, Runway had also launched the Runway Fund, a $10 million venture capital fund that invests up to $500,000 per deal in pre-seed and seed-stage startups in AI, media, and world simulation.

Rapid product progress gives substance to Runway's world model narrative. In December 2025, Runway released its first general world model family, GWM-1, built on its Gen-4.5 video model. The family includes three variants, GWM Worlds, GWM Avatars, and GWM Robotics, and uses an autoregressive architecture to support real-time interactive control. Valenzuela stated that GWM-1 is the first step in building models that "don't just generate pixels, but understand and simulate the world behind them." In March 2026, the Characters product based on GWM-1 went live; it can generate conversational AI digital humans zero-shot from a single image, with APIs open to enterprise developers. On May 3, Runway launched the official version of Gen-4, which introduces a "World Consistency" mechanism that, for the first time, delivers a substantial improvement in cross-scene character consistency, keeping characters visually coherent across different perspectives and lighting conditions.

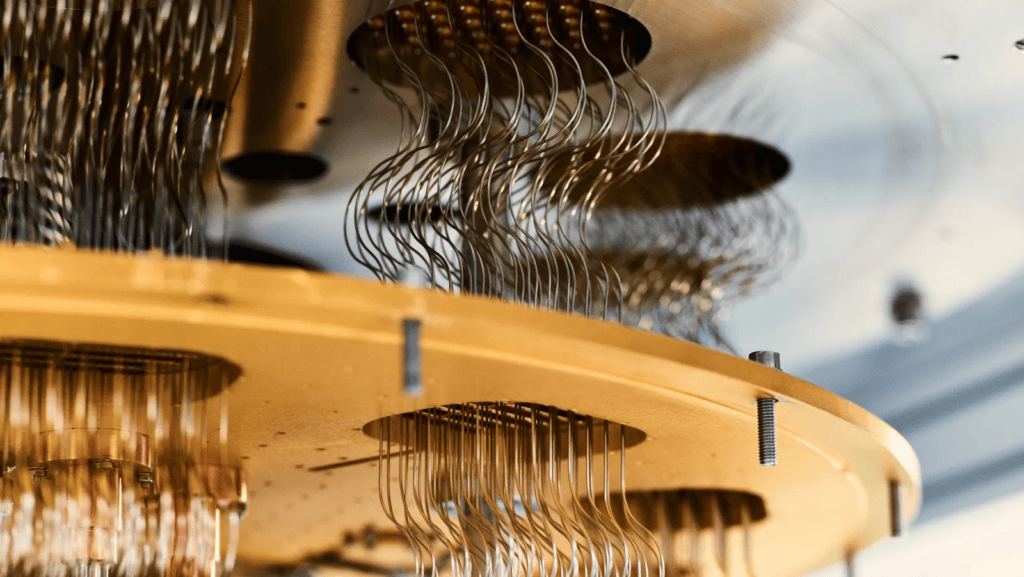

Deep integration with Nvidia gives Runway distinct advantages in computing power and architecture. In January this year, Runway was the first to port Gen-4.5 to the Nvidia Vera Rubin NVL72 platform, completing the migration from the Hopper architecture to the new platform within a single day. Richard Kerris, General Manager of Media and Entertainment at Nvidia, stated that Vera Rubin was designed from the ground up for demanding workloads like video generation and world models. The participation of both Nvidia and AMD in Runway's Series E round further cements these ties, which now span capital and hardware.

On the competitive front, Google DeepMind opened Project Genie to external users on January 30, 2026. It is considered one of the most advanced world models currently available. Built on Genie 3, it can generate 3D virtual worlds in real time from text or image prompts, lets users freely walk through and explore them, and is available to Google AI Ultra subscribers in the US. Google subsequently integrated Genie 3 with the Waymo autonomous driving system to launch the Waymo World Model, which can generate highly realistic, interactive simulated driving environments.

The differing paths of Runway and Google are becoming clear. Valenzuela pointed out in the podcast that Runway's approach to world models is fundamentally different from that of Google and other labs. Google follows a "closed ecosystem + vertical integration" route, using its own computing power and subscription system as barriers. Runway adheres to an "open platform + deep industry cultivation" strategy: it gains computing power advantages through deep ties with Nvidia, accumulates users and data with video creation tools as the front-end entry point, and then expands into physical industries like robotics and healthcare. GWM-1 generates physics-aware environments through real-time simulation and targets long-context workloads such as robot training, explorable virtual worlds, and interactive digital humans, which are precisely the workloads the Vera Rubin platform was designed for.

Capital from multiple sources is pouring into the world model field. World Labs, founded by Stanford professor Fei-Fei Li, is potentially valued at around $5 billion; AMI Labs, founded by former Meta Chief AI Scientist Yann LeCun, is valued at approximately $3.5 billion. Runway ranks among the frontrunners with a $5.3 billion valuation, differentiated by its control over both the generative tool front end and the underlying model foundation. The former has already built a paying user base and brand recognition, while the latter secures a continuous supply of pre-training computing power through the partnership with Nvidia.

Runway founder Valenzuela previously made a central argument in an interview: the real bottleneck in filmmaking has never been technology itself, and what matters is the scale of the creative explosion that follows once the technological bottleneck is broken. He believes world models will push media from a "linear" to a "non-linear" era, in which users no longer just watch content but enter it and interact with it in real time. Google DeepMind also positions Project Genie as a "bridge to embodied intelligence," emphasizing its infrastructure value in the safety training of AI agents. Both companies view world models as a key technology path for moving beyond the limitations of current language models towards more general artificial intelligence. The outcome of this race may determine who is first to cross the next barrier, from "simulating the world" to "understanding the world."

On the computing power front, Runway signed an agreement with CoreWeave in February this year to expand its computational capacity. Public data from May 15 shows that Runway added $40 million in annualized recurring revenue in the second quarter of 2026. Its video generation models have surpassed comparable products from Google and OpenAI in multiple benchmarks, with Gen-4.5 achieving state-of-the-art results with an Elo score of 1247 on the Artificial Analysis benchmark. Building world models requires unprecedented computing density: the Vera Rubin NVL72 can provide 50 petaflops (PF) of inference compute per GPU, and Runway views it as the key infrastructure for unlocking real-time, long-duration, high-fidelity world generation.

This article is compiled by Wedoany. All AI citations must indicate the source as "Wedoany". If there is any infringement or other issues, please notify us promptly, and we will modify or delete it accordingly. Email: news@wedoany.com