en.Wedoany.com reported: Osaurus is a control-layer tool for the Mac that connects local and cloud AI models. It was developed by Pae, a former software engineer at Tesla and Netflix, and is being built in public as an open-source project. Pae's original goal was to let users run an AI assistant on their own devices to handle tasks such as browsing files, accessing the browser, and changing system settings.

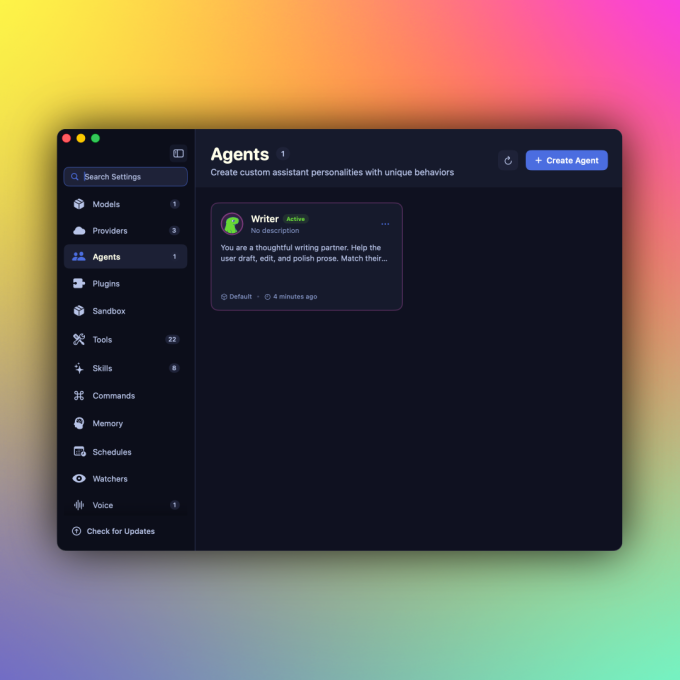

At the core of Osaurus is what it calls a "harness" (control layer): a single interface that connects different AI models, tools, and workflows, similar to OpenClaw or Hermes. Osaurus differs in two ways. It offers an easy-to-use, consumer-oriented interface, and it runs inside a hardware-isolated virtual sandbox to address security concerns. The tool currently supports local models such as MiniMax M2.5, Gemma 4, Qwen3.6, GPT-OSS, Llama, and DeepSeek V4, as well as Apple's on-device foundation models and Liquid AI's LFM series. It can also connect to cloud providers such as OpenAI, Anthropic, Gemini, xAI/Grok, Venice AI, and OpenRouter, and to Ollama and LM Studio.

Users can switch freely between models to take advantage of each one's strengths, while the AI experience itself, including model memory, files, and tools, stays on local hardware. Because Osaurus is a full MCP (Model Context Protocol) server, it can also give any MCP-compatible client access to its tools. It ships with more than 20 native plugins covering email, calendar, vision, macOS use, XLSX, PPTX, browser, music, Git, file system, search, scraping, and more. Recent updates have also added voice support.

Running large models locally is demanding on hardware. The system needs at least 64 GB of memory, and 128 GB is recommended for larger models such as DeepSeek V4. Pae nevertheless expects the resource demands of local AI to fall over time: "Intelligence per watt, the metric for local AI, is improving significantly. Last year, local AI could barely complete a sentence; this year, it can run tools, write code, access browsers, and execute complex tasks." According to figures on the official website, the project has been downloaded more than 112,000 times in the nearly one year since its launch. It competes with tools such as Ollama, Msty, and LM Studio, but sets itself apart with its feature set and an experience that is more approachable for non-developers.

Osaurus's founding team, which includes co-founder Sam Yoo, is currently taking part in Alliance, a New York startup accelerator, and plans to launch an enterprise version aimed at privacy-sensitive industries such as legal and healthcare. Pae said that the steady growth in local model capabilities could reduce demand for AI data centers: "Users don't need to rely on the cloud; they can deploy a Mac Studio locally, with significantly lower power consumption, while retaining cloud capabilities."

This article was compiled by Wedoany. All AI citations must indicate "Wedoany" as the source. If there is any infringement or other issue, please notify us promptly and we will modify or delete the content accordingly. Email: news@wedoany.com